
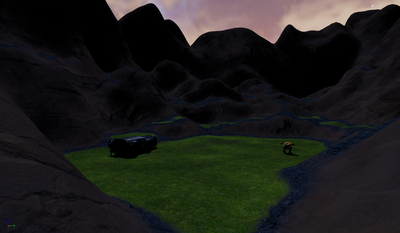

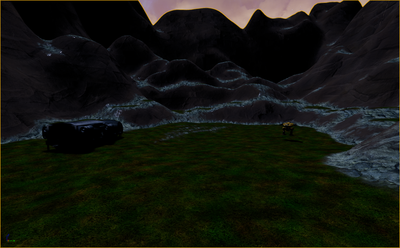

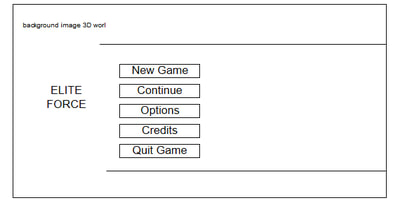

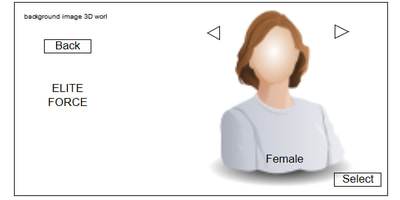

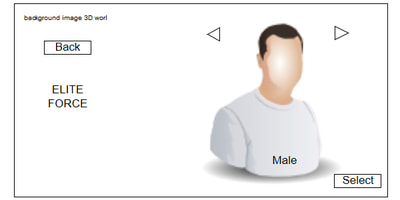

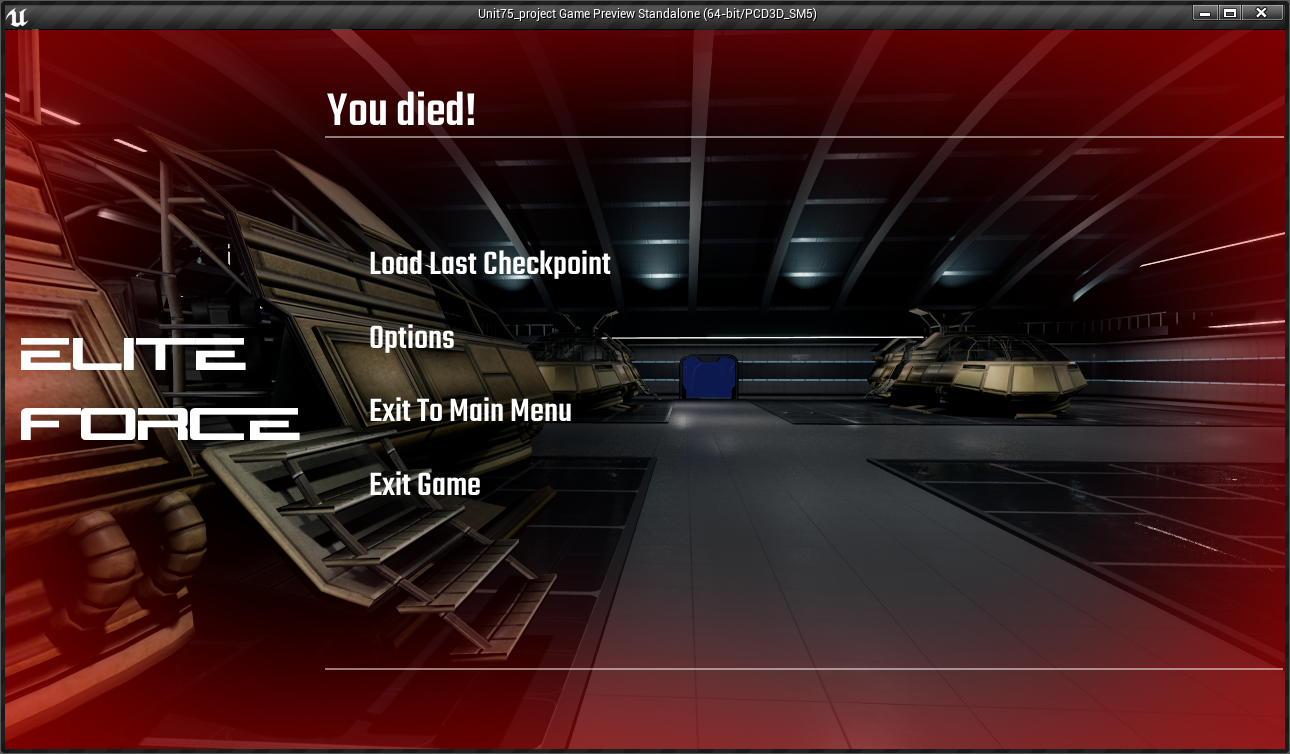

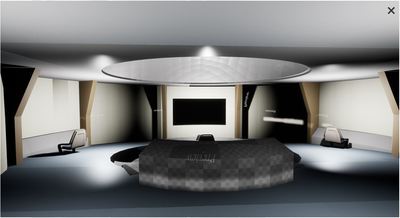

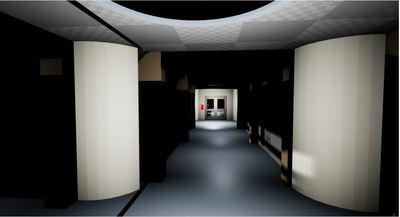

Once I had finished my project, I wanted to make a short demo video showing all the areas it covers. The only thing I could not include in the demo was the character implementation, because the project demo was made in Unreal Engine 4.16 and the character blueprints were made in 4.19.

Below is a video of my gameplay playthrough.

Below is a presentation that looks over the whole project and goes into depth about each section created for it.

Evaluation

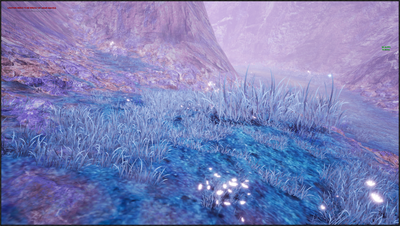

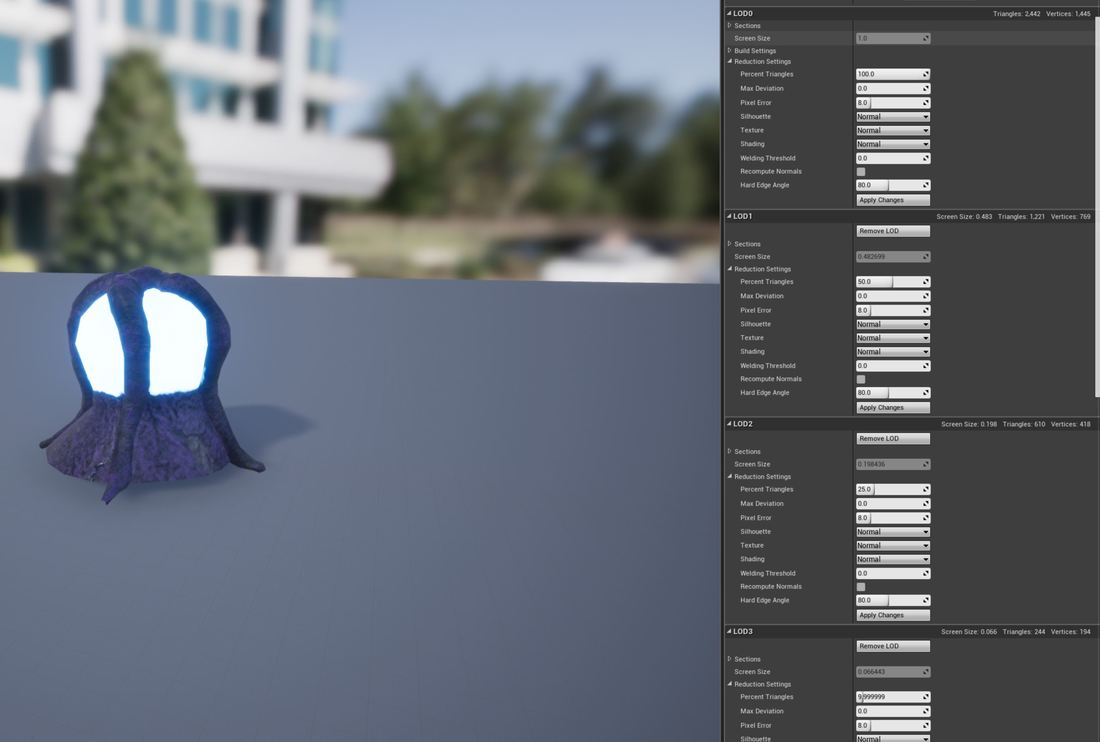

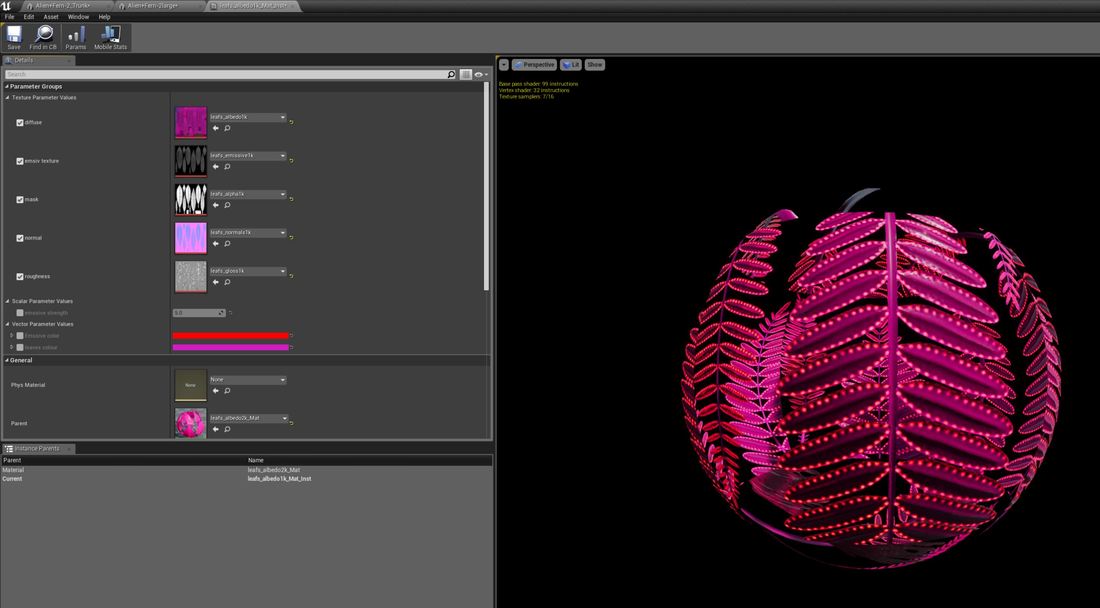

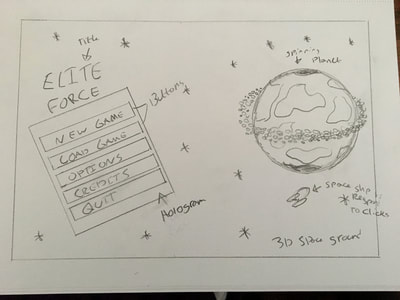

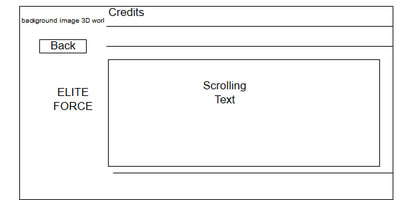

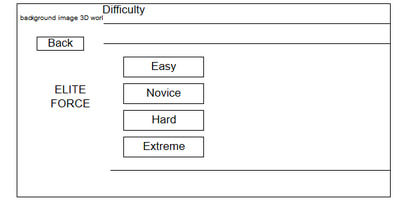

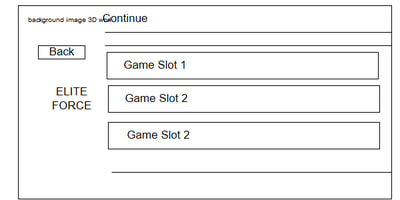

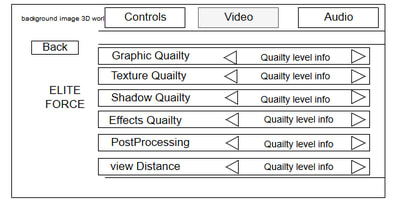

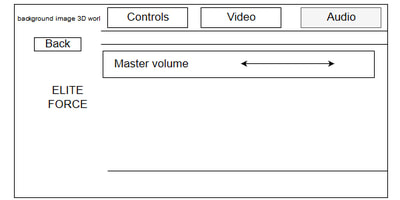

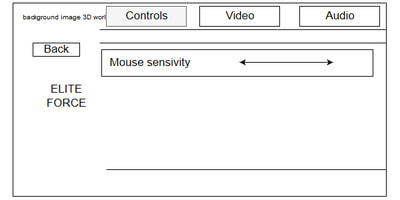

Overall, I'm proud of what I have created for this project. I feel I kept to my game design document and didn't stray far from what I planned in it. The one area that departed from the document was combat: the character attacks and defends with melee weapons, whereas the game design document specified ranged weapons. Nevertheless, I am extremely pleased with the project; visually it matches my concept art and feels like a sci-fi game.

The project schedule helped me build the project and keep the development tasks to deadlines. I kept to the deadlines I set for development tasks about 80% of the time and missed only a few, which were justified and usually down to errors and lack of research.

I felt that the level design, 3D environments, lighting and interfaces went extremely well, and these are the areas I enjoyed most. I particularly enjoyed the blueprinting side, as well as world building once all the models were built, and I enjoyed making the levels. The animations and character implementation didn't go so well. I didn't enjoy animating much, and I felt all the character animations were too rigid and not fluid enough; the only animations I liked were the animated assets, which worked with my project and made it pop. I enjoyed the blueprint side of the character implementation, but I feel the result didn't match the game design document. I did learn a lot about blueprints in this part of the project.

If I were to choose a job in the industry based on this project, it would be in level design, world building or game design. To conclude, I am extremely pleased with what I have accomplished. I have learned a great deal about making games, what is involved, and the work each area requires, and this project has helped me understand what I want to do in the games industry.


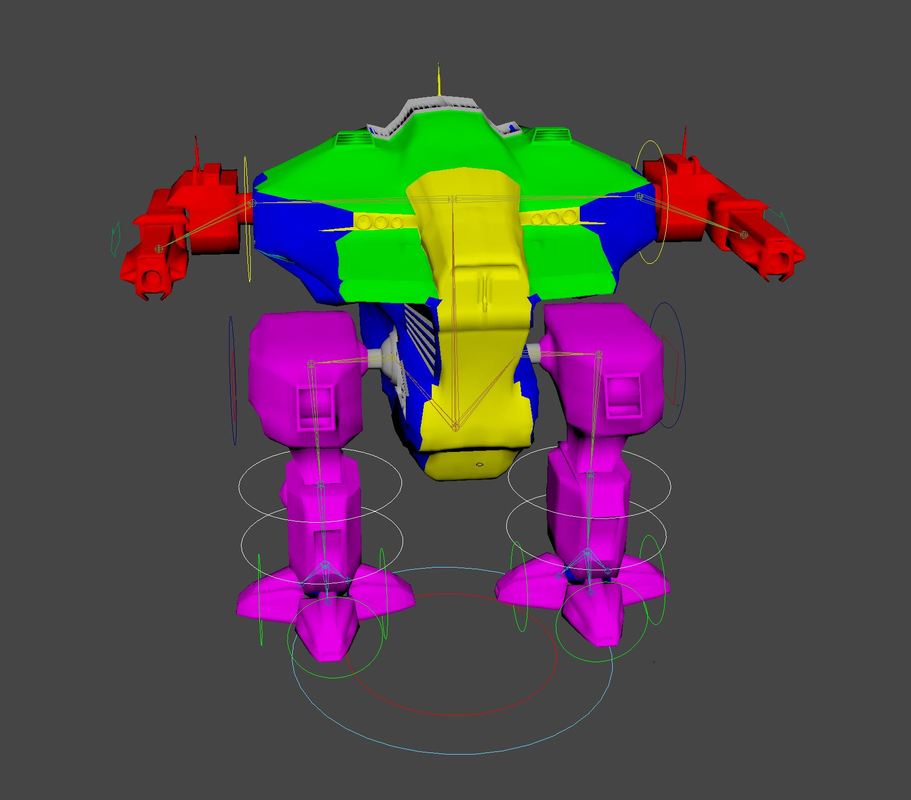

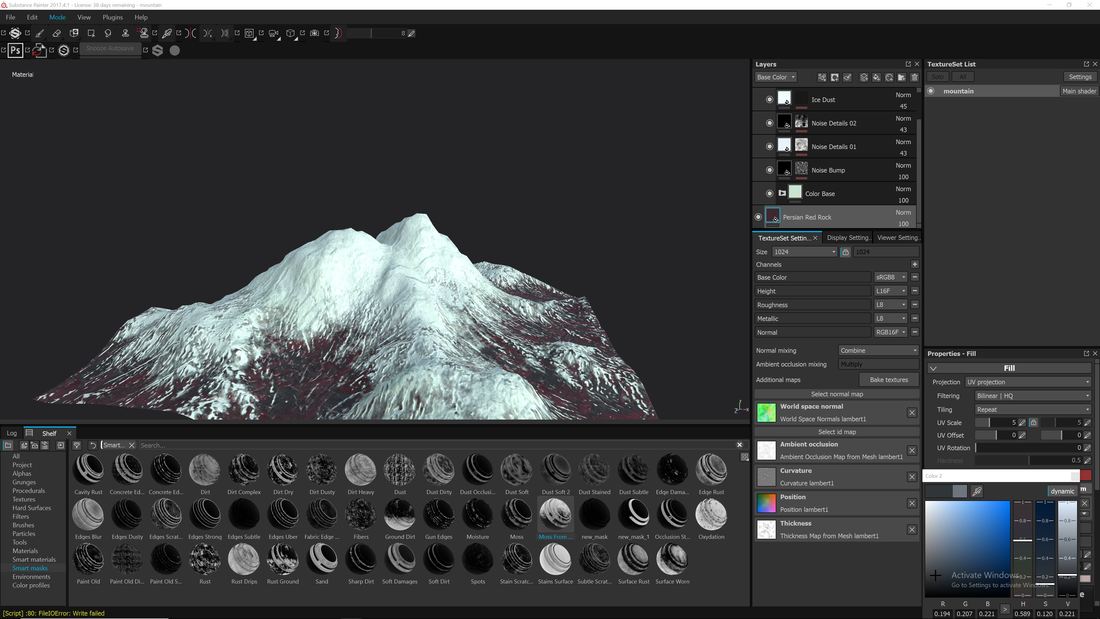

In this blog post I will look at other projects I have done during this academic year, and show how I plan to advertise myself with a portfolio and look for companies and jobs in the surrounding area.

Game Jams

Since the beginning of the year I had wanted to try a game jam: the challenge of producing a small game in a short time. I had a few reasons: working in a team, seeing how far I could push myself, stepping outside my comfort zone, and learning to work under pressure and time constraints. I felt these could benefit me, as they are things I would encounter in an industry job; the industry is fast-paced and constantly changing, and you always need to learn and adapt.

The first game jam I entered was Unreal Engine's Winter Game Jam. I teamed up with a fellow student, Lee Stockton, with support from one of the tutors, Pete Gibson-Black. The theme was "On Thin Ice". Lee and I brainstormed and decided to do something neither of us had done before, basing it on a classic arcade shooter. We went for a top-down shooter with three small levels and a short story: a man caught in a snowstorm finds he is trapped with snowmen possessed by an ice king. The basic mechanics let the player shoot the enemies, with pickups for health, armour and ammo, and traps such as ice that made the player lose movement control.

We broke the project down into tasks and each took ownership of different areas. Lee set up all the top-down mechanics and also did the models and animations. I felt he did an amazing job of modelling and animating the snowmen; even though Lee doesn't enjoy animation much, he did a great job of it. Pete created the background artwork for the UI, which helped us massively and fits really well with the game.
I did the level design, some of the smaller game mechanics such as the pickups, the post-processing, and the texturing, since at the time Lee did not have Substance Painter while I had access to an indie licence.

Conclusion

This was a massive learning curve for me. Having three days to produce the game was a mammoth task: we planned a simple game but got carried away with the level designs and started producing too much work for the time available. As a team we decided to cut a lot out, since we would otherwise have run out of time, and to keep to the simple idea we originally had. That is the point of game jams: learning when to stop making something and asking whether it is really needed. Not having any form of source control also made transferring files harder, as we had to send the project back and forth so that we could both work on it.

Overall, I enjoyed the game jam and learned a lot. I enjoyed working as a team with Lee, as we both came up with great ideas, and I felt the end result was fun to play. It had a few issues, but that was expected within the time limit. We didn't win anything, but that didn't matter much to me, as I enjoyed the jam and found it extremely fun. Below is a video of the game we made for the winter jam.

For the second game jam we entered the Unreal Engine Spring Jam, which had a longer time frame than the winter jam and gave us a little more breathing space. The theme was "Transition". I teamed up with Lee again and with another student from our course, Jamie Dubuisson. I took the role of project lead, as I had come up with an idea for the theme. Our first idea was three eras, starting in the 1950s and transitioning through the 1970s and the 1990s, but when we wrote out the list of everything we needed, it would have taken far longer than the time period allowed for three people.
We then decided to stay with the 1950s theme and have a transition between two characters who need to complete a number of puzzles to meet up and finish the level, telling a story as if the player were controlling actors in a film. This gave us the idea of using a TV set as the screen and the environment as a movie set.

I set the tasks for the team based on the time constraints, everyone's availability and their strengths. I asked Jamie to take charge of designing the robot, which would be one of the playable characters, as well as a couple of the models within the levels. The effort and time Jamie put into his models were outstanding: I only sent him a few image references of what I was looking for, and he went above and beyond. I asked Lee to take charge of the large-scale models for the levels, create the non-playable characters, and handle other smaller jobs. The models Lee created were of a high standard, and he did a marvellous job on everything he made for the project. Giving Lee access to the project through source control was also hugely beneficial, as he didn't have to keep sending me files; he could upload the models and set them up in the scene himself.

My role, other than project lead, was game design and level design. I set up the basic mechanics the player would interact with, keeping them simple. The level design was also simple and spread over two levels. The first was the menu, which was just a camera that pans to different points in the level to give the impression of movie credits. The second was the full demo level, where most of the blueprinting was done in the level blueprint, using event dispatchers and trigger boxes to keep it quick.
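Blueprint event dispatchers follow the observer pattern: a trigger box broadcasts when something overlaps it, and whatever has bound to that dispatcher (here, the level blueprint) reacts. The Python sketch below is only an analogy for that wiring, not Unreal code; the class names, the two trigger pads and the "level complete once both characters arrive" rule are hypothetical stand-ins for the two-character puzzle described above.

```python
from typing import Callable, List


class EventDispatcher:
    """Stand-in for a Blueprint event dispatcher: listeners bind, the owner broadcasts."""

    def __init__(self) -> None:
        self._listeners: List[Callable[..., None]] = []

    def bind(self, listener: Callable[..., None]) -> None:
        self._listeners.append(listener)

    def broadcast(self, *args) -> None:
        # Iterate over a copy so a listener may bind or unbind during the call.
        for listener in list(self._listeners):
            listener(*args)


class TriggerBox:
    """Hypothetical trigger volume that broadcasts whenever an actor starts overlapping it."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.on_begin_overlap = EventDispatcher()

    def begin_overlap(self, actor: str) -> None:
        self.on_begin_overlap.broadcast(self.name, actor)


class LevelScript:
    """Plays the role of the level blueprint: completes the level once every trigger is reached."""

    def __init__(self, triggers: List[TriggerBox]) -> None:
        self.pending = {trigger.name for trigger in triggers}
        self.on_level_complete = EventDispatcher()
        for trigger in triggers:
            trigger.on_begin_overlap.bind(self._handle_overlap)

    def _handle_overlap(self, trigger_name: str, actor: str) -> None:
        if trigger_name not in self.pending:
            return  # already reached; re-entering a trigger does nothing
        self.pending.discard(trigger_name)
        if not self.pending:
            self.on_level_complete.broadcast()


if __name__ == "__main__":
    robot_pad, actor_pad = TriggerBox("robot_pad"), TriggerBox("actor_pad")
    level = LevelScript([robot_pad, actor_pad])
    level.on_level_complete.bind(lambda: print("Both characters met: roll the credits"))
    robot_pad.begin_overlap("Robot")
    actor_pad.begin_overlap("Actor")
```

The trigger boxes never need to know what the level does with their events, which is what keeps this kind of level-blueprint logic quick to rewire during a jam.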
Conclusion

I found this game jam more enjoyable than the first. It was a nice theme to work with, and having more time gave us room to breathe, so we didn't swamp ourselves with too much workload. Using source control also sped up development, because no single person had to do all the integration: each of us could import our own work into the project and set it up to be used, which saved time. However, I feel the lack of spell checking hampered the overall effect of the game; this is something we have learned for the next game jam. The team's work was outstanding, and knowing that all three of us were also working during the game jam made it feel like even more of an achievement. I am extremely proud of what we accomplished. As this was a recent jam we don't yet know how well we did, but regardless of the results I am proud of the team and the work we put in. Below is a video of the game for the spring jam.

Self-advertising

In this section I will look at how I could advertise myself to find a career in the games industry, and where I can send my work to local games companies. In the games industry a CV can only get you so far before you are asked to show previous work in some form of portfolio. There are many websites, such as ArtStation, where you can upload your own images, but because of the high volume on these sites, a less popular artist's images can get drowned out.

I first decided to create my own portfolio website showing visual references of my best artwork in the areas where I am currently interested in finding a position. I enjoy world building, so level and game design would suit me best. These are the areas I mainly targeted in my portfolio, choosing a selection from the projects I created and showing the work in slideshow galleries.
I also showed models to keep my options open for an environment artist role, in case I was offered a job in that area; these included unique models I have created over the past two years as well as modular buildings. Below is a link to my portfolio: https://alanhortonportfolio.weebly.com/

I then looked into social media, which can be a powerful tool for advertising yourself and your work. I looked at two popular platforms: Instagram and LinkedIn. Instagram will be a running, live portfolio, where I post pictures and videos of my work once it reaches a suitable standard and use hashtags to target my posts at particular areas. LinkedIn is the more professional route: a live CV that advertises my skills and sends my profile to companies that have jobs advertised.

I set up my LinkedIn profile covering my skill sets, employment history, projects and interests, and made it visible to recruiters: https://www.linkedin.com/in/alan-horton-5120a7141/

I also found a few websites where I can upload a portfolio and browse jobs: https://jobs.gamesindustry.biz/, https://jobs.mcvuk.com/, https://datascope.co.uk/ and https://aswift.com/video-games-jobs/

Below I am going to keep track of the key developments I plan for each unit, and the miscellaneous tasks I need to do to complete my game demo before June 2018. I will set a deadline for each core development and highlight whether I completed it on time. Having a schedule will make me work to deadlines and keep on top of my workflow, leading to a better end result for my game demo.

Unit 76 Development schedule

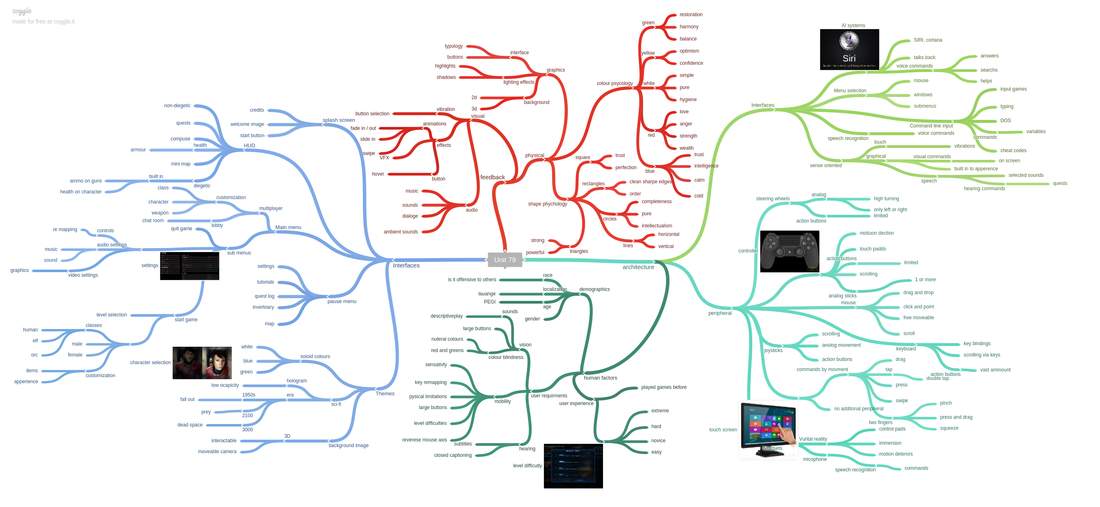

Unit 79 Development schedule

Note: the items underlined in blue are one-day tasks.

Unit 74 - Animation

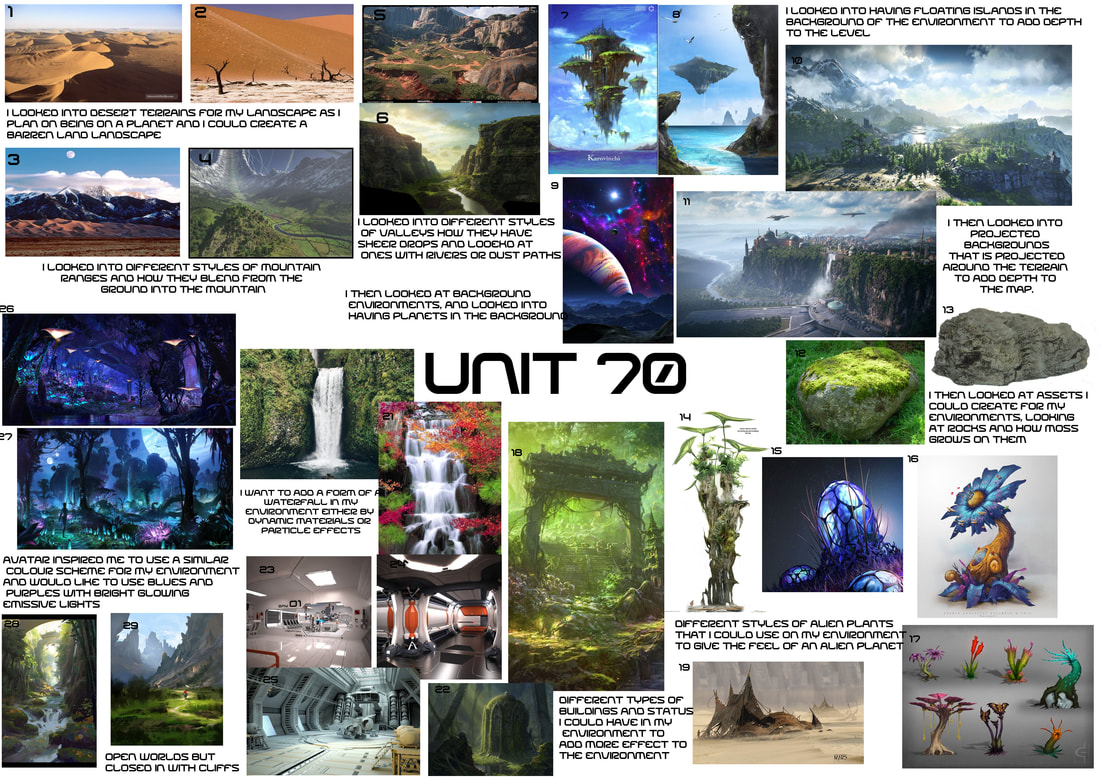

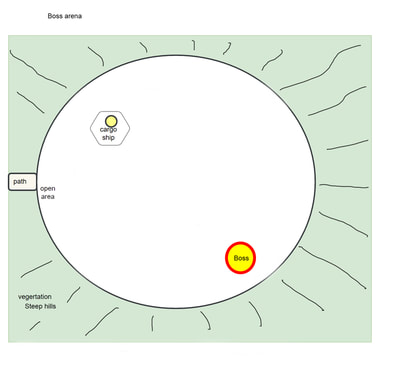

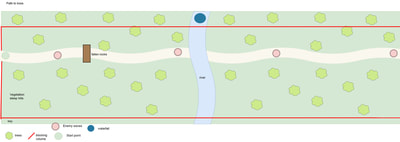

Unit 70 - Environments

Unit 52 - Character implementation

Unit 72 - Lighting and rendering

Miscellaneous Tasks

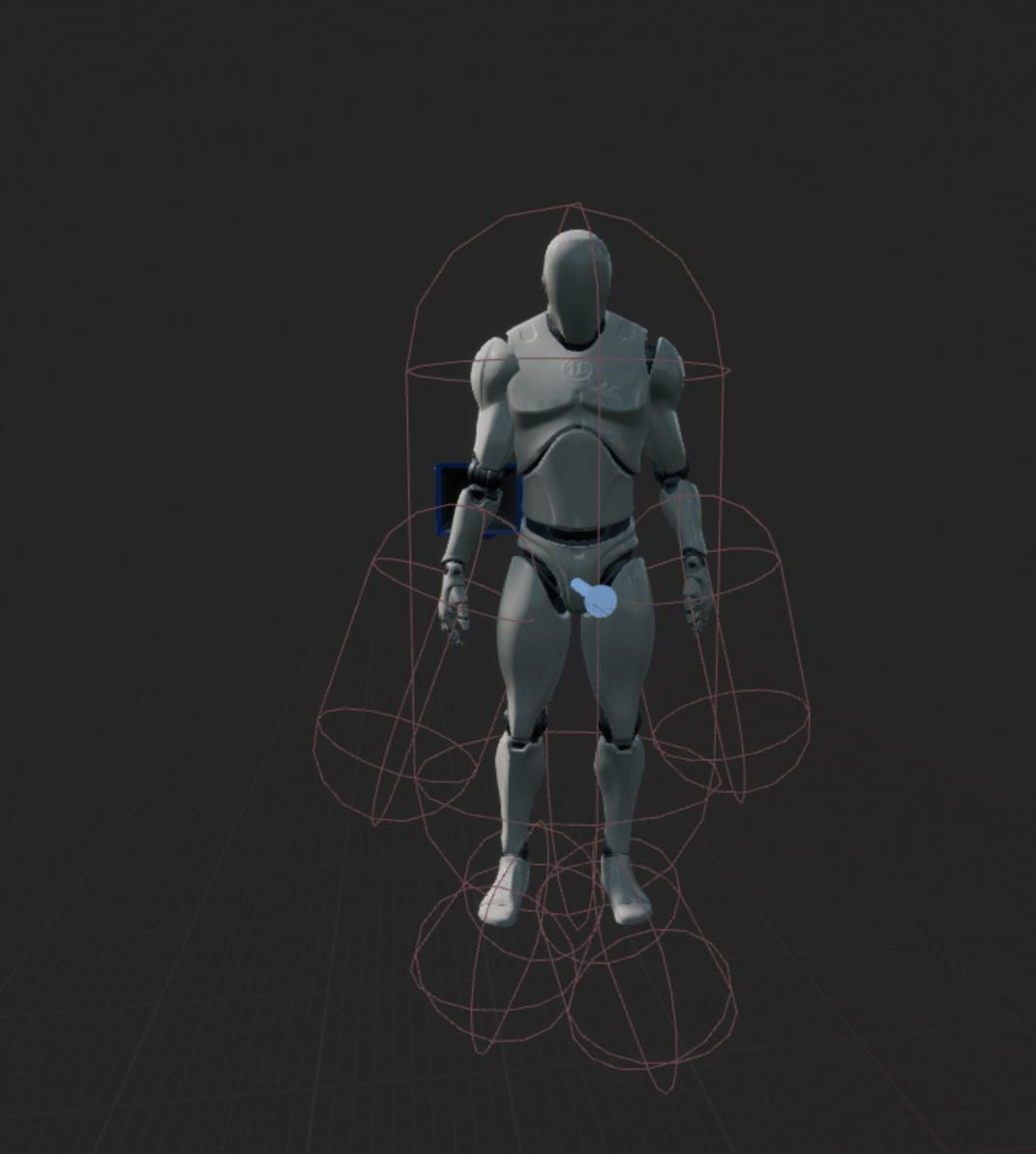

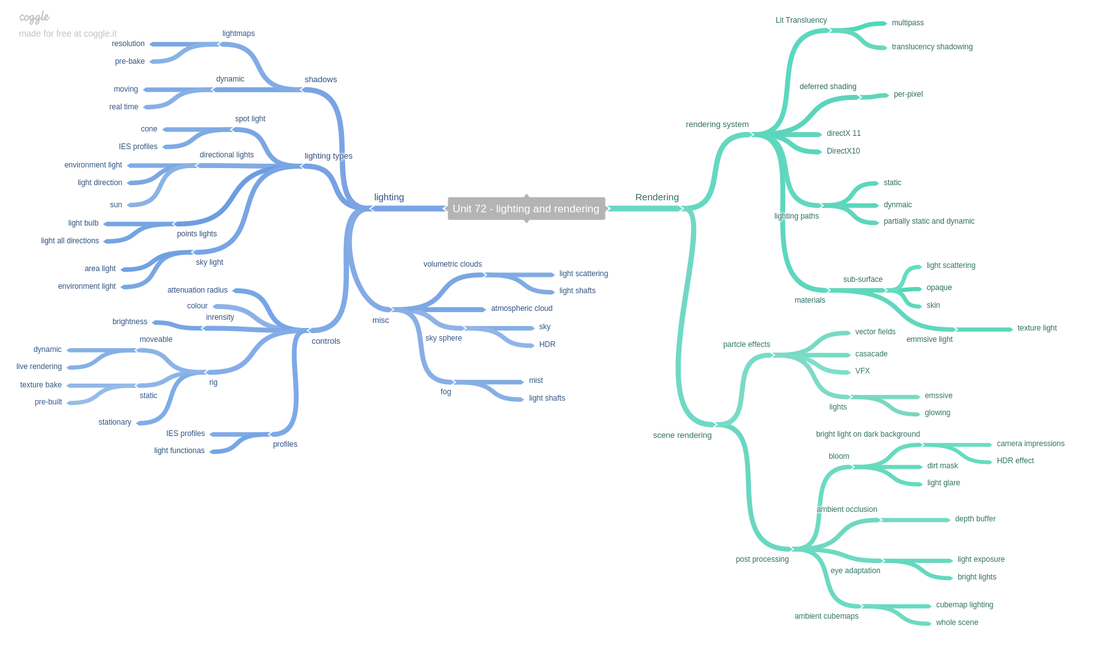

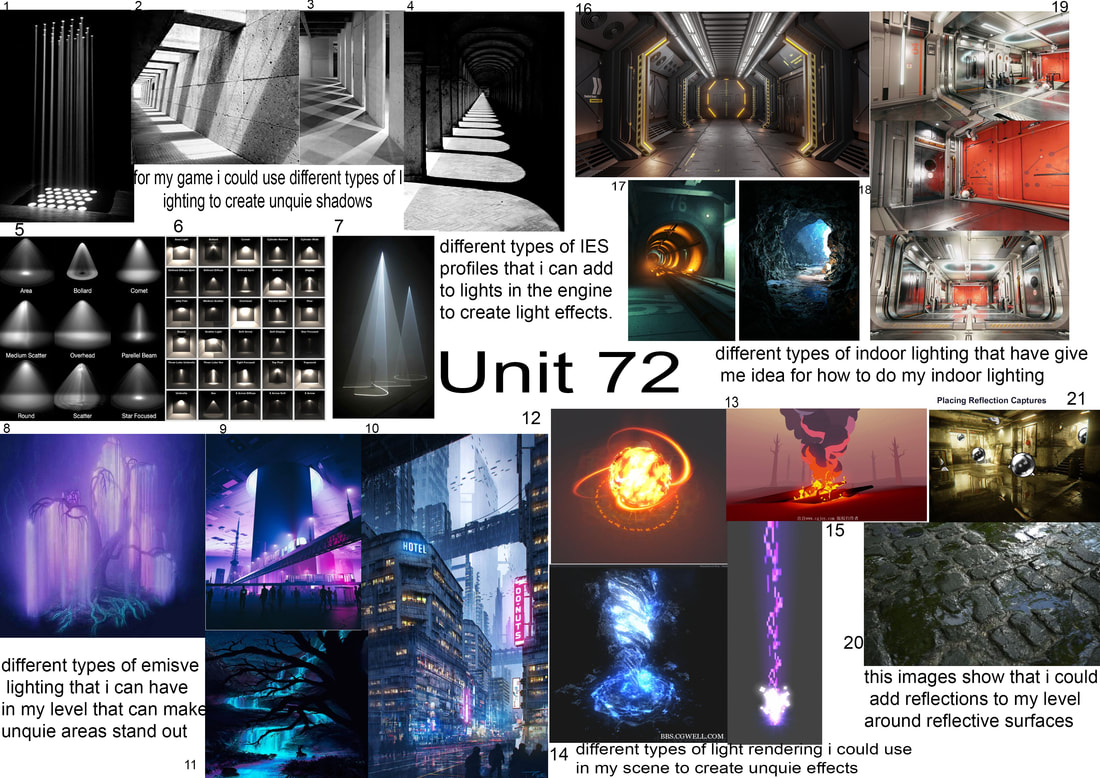

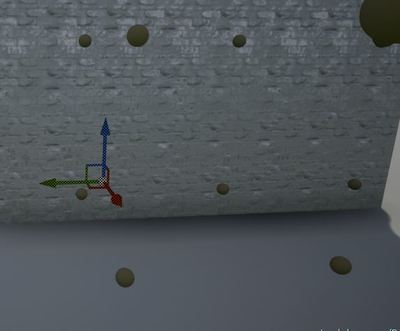

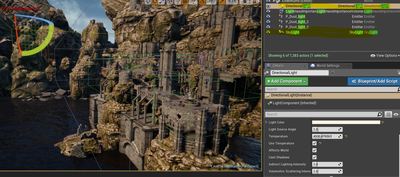

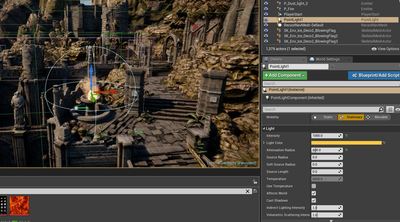

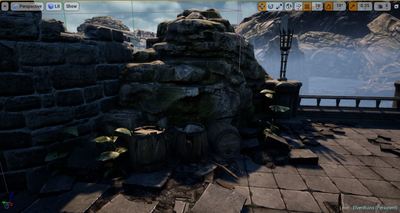

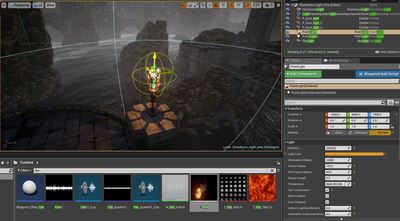

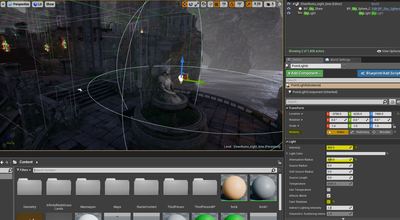

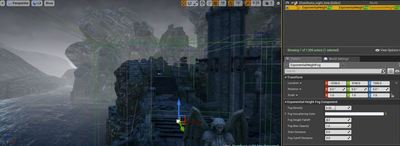

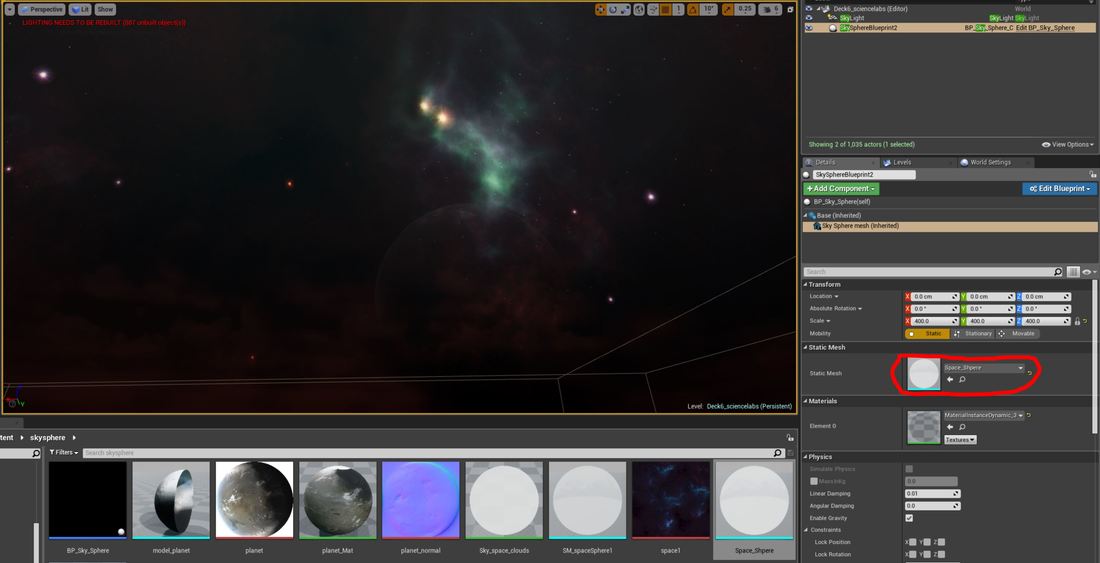

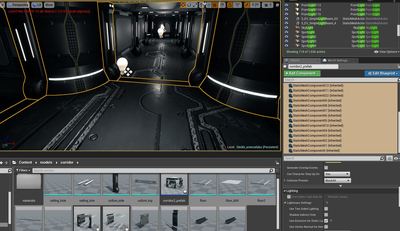

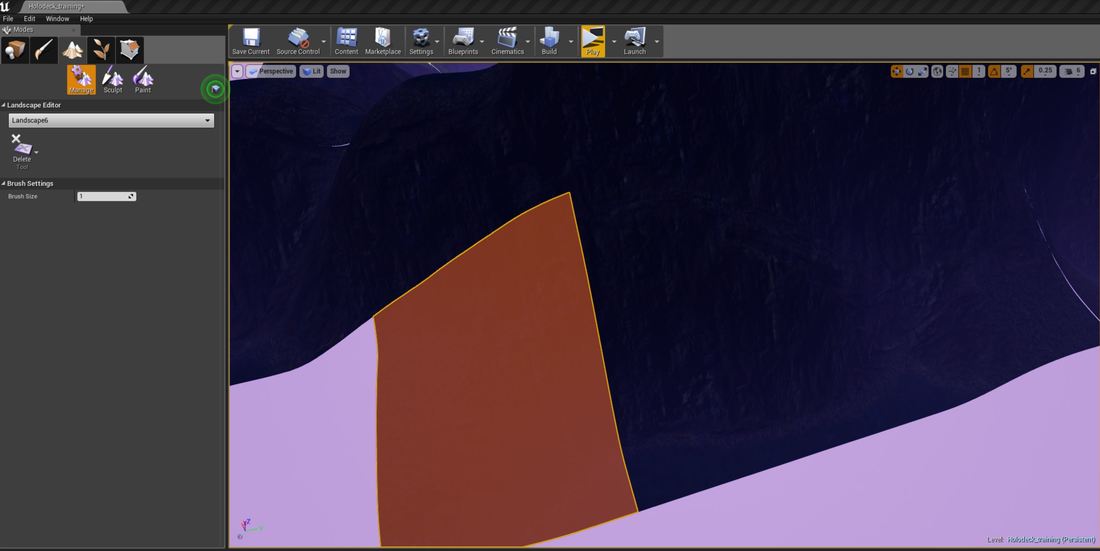

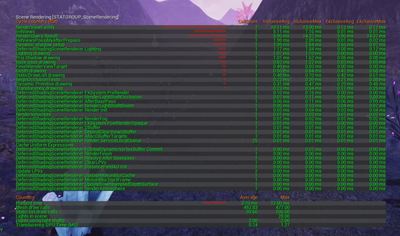

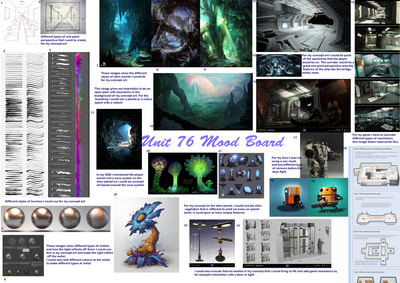

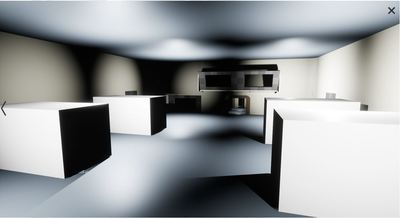

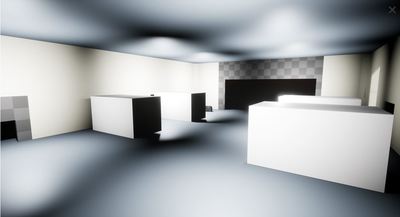

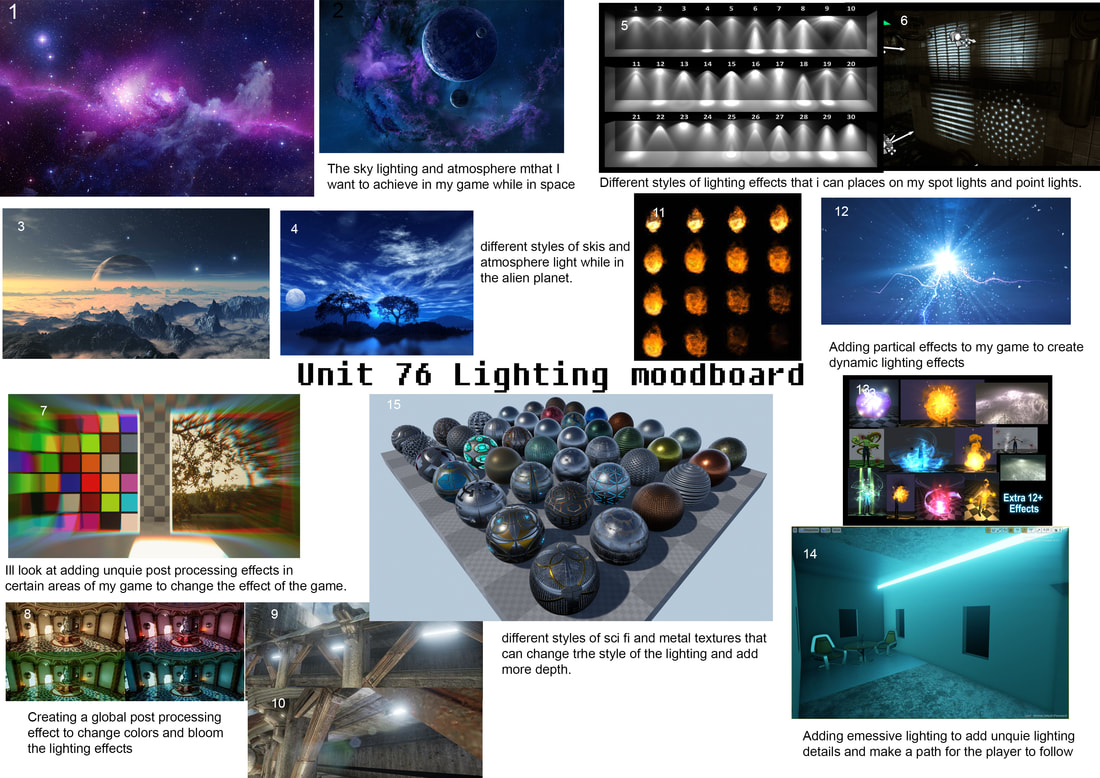

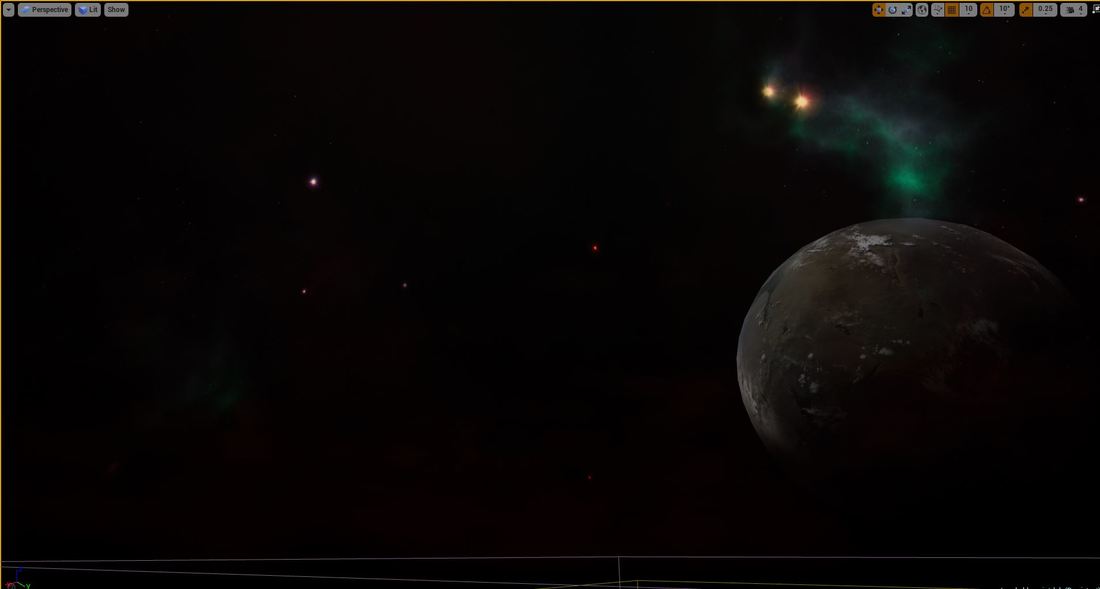

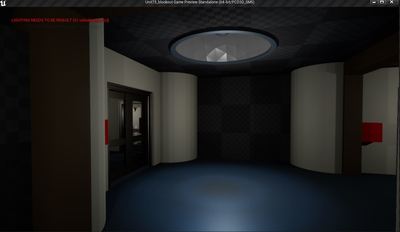

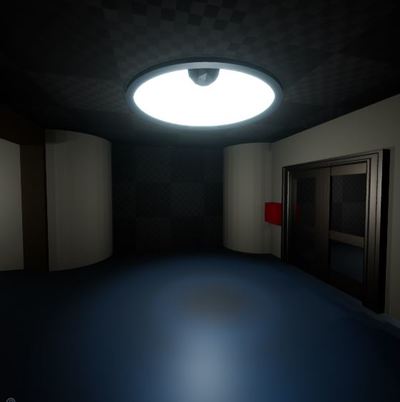

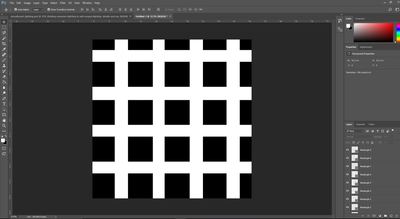

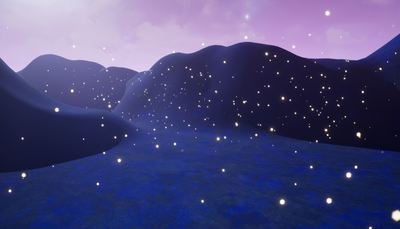

Task Timeline

Pre-production: start date 1/4/18, end date 7/4/18

I have given myself a week for the lighting pre-production, which involves researching lighting techniques and creating mind maps and mood boards of the lighting style I want for my game.

Practice scene: start date 7/4/18, end date 20/4/18

In this section I want to put what I learned in pre-production into practice, to see how it works and how it could work in my game. I have given myself just over a week to complete this section.

Lighting scenes in my game: start date 21/4/18, end date 5/5/18

For this task I have given myself two weeks to light various scenes in my game: an indoor scene and two types of outdoor scene, night and daytime. This is where I will put all the research and pre-production to work.

Live render: start date 6/5/18, end date 7/5/18

For this task I have given myself a day to create a live render (video) of one of my scenes. I aim for the video to be 30 seconds long, and it shouldn't take longer than a day to produce.

Pre-production

I started this part of the project by researching how lighting works in Unreal Engine and how it affects performance, looking at the different types of static and dynamic lighting and rendering. After the research I looked at which lighting and rendering effects I could use in my game environment, and from this I created a mood board. Below is my final mood board.

Practice scenes

In this task I will practise and test the lighting and rendering techniques I found during my research. I will create a basic project in Unreal Engine to practise lighting in, and from this I will understand how to use lighting in my own project.
I started by learning how to make the whole environment black, so that nothing casts any light. I set the intensity of the sky light and the directional light to 0, then went to the sky sphere, turned all the sky colours to black and unticked the option that determines the sky colours automatically. After changing these settings, the scene looked like this:

I then added a sky light, which lit up the whole area with a basic ambient light; it didn't cast much shadow and the scene was still dark. Next I added a directional light, which acts as the sun and casts shadows away from the direction it points. I placed it alongside the sky light, facing the door of the house, so that it would cast a shadow behind the house.

I then wanted to try the last two types of light: a point light, which acts like a light bulb and emits light uniformly in all directions, and a spot light, which emits light in a cone from the tip of the cone. Inside the house I placed two spot lights pointing at the ceiling like wall lights, plus a hanging light model with a point light. Outside I added a fire particle effect with a point light; the fire is rendered live and casts dynamic light, and the point light adds more light to it. For these lights I experimented with the attenuation radius, intensity, source length, inner and outer cone angles, and colour.

After adding the lights I experimented with the mobility settings. I set the wall-mounted spot lights to Static, as they won't produce dynamic shadows; the fire light outside uses Stationary mobility; and the hanging light uses Movable, so the player can move it and it casts dynamic shadows.

I then wanted to test auto exposure when going into the dark room, recreating the way human eyes adjust when moving from bright to dark and vice versa.
I did this by adding a post process volume inside the house, going to the Lens tab, enabling Auto Exposure, and adjusting the settings so that the view adjusts slowly to the new light brightness.
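Auto exposure (eye adaptation) moves the camera's exposure towards a target brightness over time rather than jumping to it. The Python sketch below illustrates that idea with a simple exponential approach, working in exposure values (log2 brightness). It is an assumption-based illustration, not Unreal's code: the function names, the EV figures and the default speeds are hypothetical, and mapping `speed_up` to "scene got brighter" and `speed_down` to "scene got darker" is my reading of the Speed Up / Speed Down settings.

```python
import math


def adapt_exposure(current_ev: float, target_ev: float, dt: float,
                   speed_up: float = 3.0, speed_down: float = 1.0) -> float:
    """One frame of eye adaptation in exposure values (log2 brightness).

    speed_up is used when the scene has become brighter and speed_down when it
    has become darker (an assumed reading of Unreal's Speed Up / Speed Down).
    """
    speed = speed_up if target_ev > current_ev else speed_down
    # Exponential approach: closes a fixed fraction of the gap each frame, never overshoots.
    blend = 1.0 - math.exp(-speed * dt)
    return current_ev + (target_ev - current_ev) * blend


def simulate(start_ev: float, target_ev: float, seconds: float, fps: int = 60, **speeds) -> float:
    """Run adapt_exposure for a number of seconds at a fixed frame rate."""
    ev = start_ev
    for _ in range(int(seconds * fps)):
        ev = adapt_exposure(ev, target_ev, 1.0 / fps, **speeds)
    return ev


if __name__ == "__main__":
    # Hypothetical values: bright exterior around EV 10, dark house interior around EV 2.
    for t in (0.5, 1.0, 2.0, 4.0):
        print(f"{t:.1f} s after stepping inside: EV {simulate(10.0, 2.0, t):.2f}")
```

A slower speed for darkening than for brightening gives the "eyes slowly adjusting" feel when walking into the house, while stepping back outside snaps bright more quickly.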

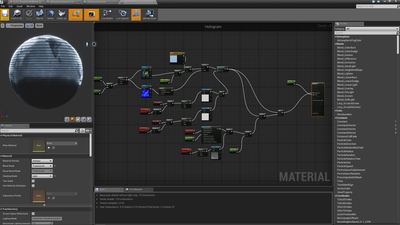

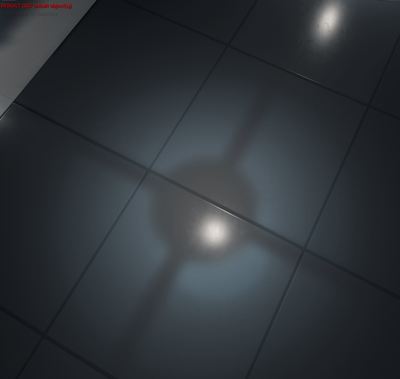

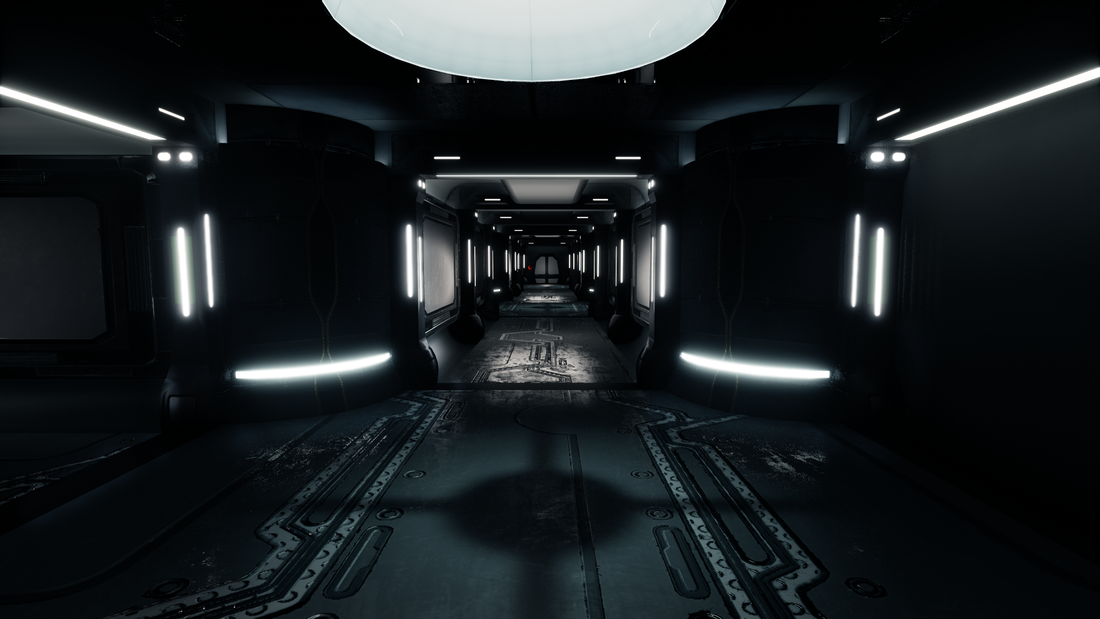

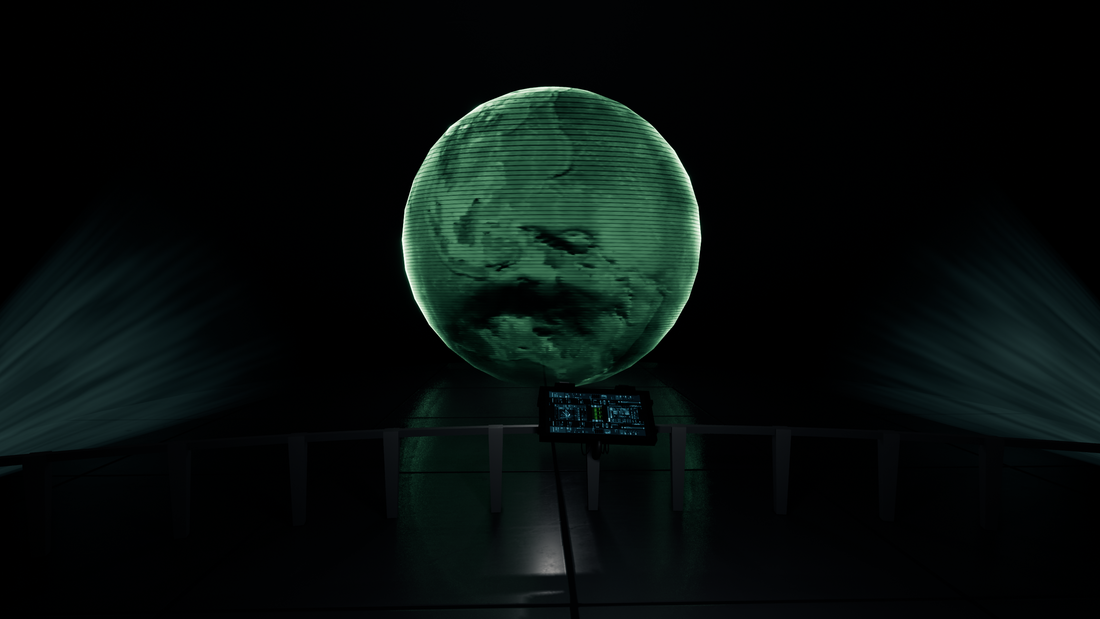

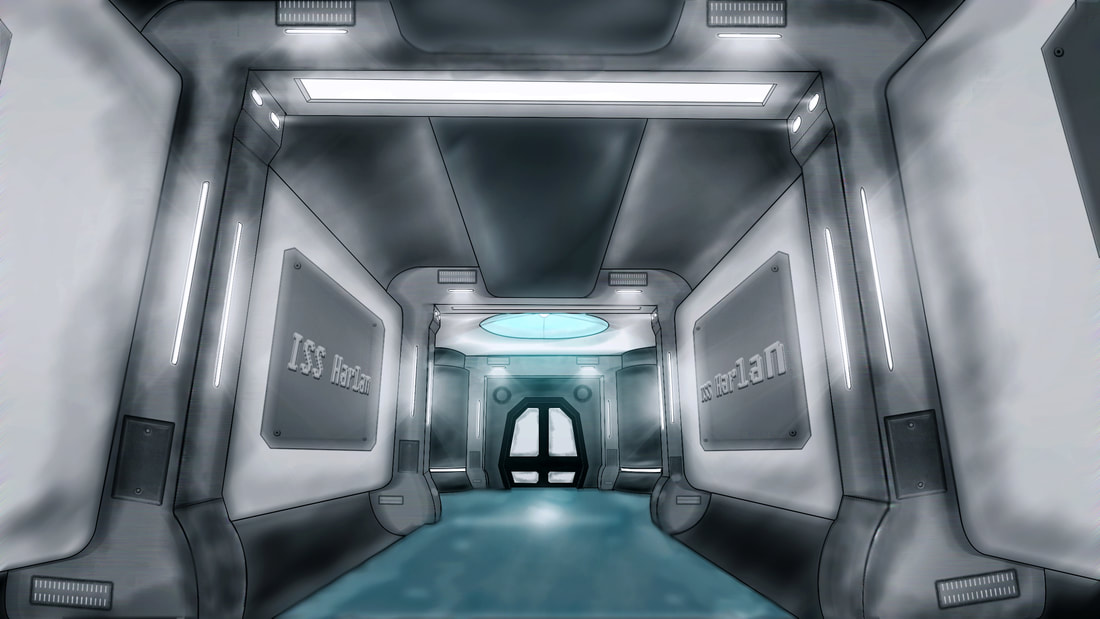

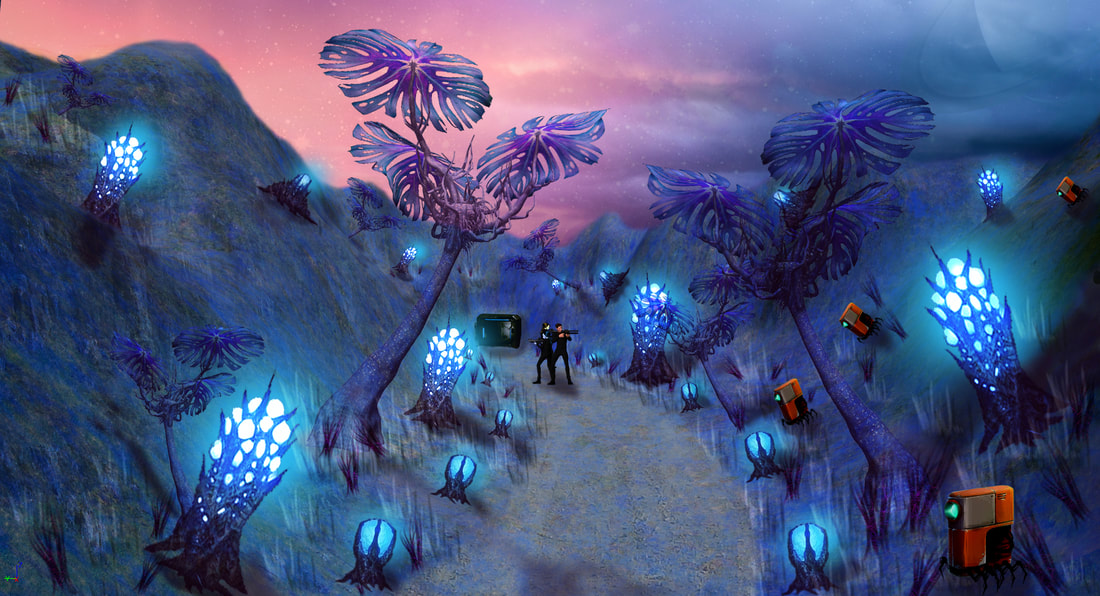

I wanted to look into lightmap resolution. Lightmaps are vital for shadows, and floors, walls and ceilings should have a higher lightmap resolution, because it is not the model casting the shadow that stores it: the shadow is stored in the lightmap of the surface it falls on (for example the floor), so that surface needs the higher quality. I placed a floor in the level with a pillar on top of it and angled the directional light to create a shadow. I started at a resolution of 64 and then tested resolutions of 128, 512 and 2048.

Lightmass Importance Volume

I added a Lightmass Importance Volume around my house, as I want the lighting calculations to focus on this area and produce better lighting there. Lightmass computes indirect lighting during the lighting build and stores lighting samples that are used to light dynamic objects. I then turned on the visualisation of the volume lighting samples, which shows small spheres at each sample point; each sphere shows the lighting direction and shadowing, and this is what lights dynamic objects. Below you can see my lighting sample points: each sphere is bright on the side facing the light and dark on the other side, showing the shadow. Over distance the spheres fade, showing that no more light is reaching them, so the data marks that area as shadowed.

Day scene

For this task I decided to use a pre-built scene and light it using the methods above, plus new methods to enhance the lighting. I added the Unreal Engine Grass Lands pack to my project and deleted all the lights, post-processing and reflections. I then added a sky light, which gave basic light across the level, and set its intensity to what looked right on my computer. In my game I will add a gamma check so that the player can change the sky light intensity, making the lighting correct on their own computer.
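To put numbers on the lightmap resolution tests described above (64, 128, 512 and 2048), the Python sketch below works out how large one lightmap texel is on a surface and how the texel count grows. It assumes a hypothetical 4 m floor whose lightmap UVs fill the whole 0-1 square; real UV layouts lose some space to padding, so real texels are slightly larger.

```python
def texel_size_cm(surface_length_cm: float, lightmap_resolution: int) -> float:
    """World-space size of one lightmap texel along one edge.

    Assumes the surface's lightmap UVs fill the whole 0-1 square; real layouts
    lose some space to padding, so actual texels are a little larger.
    """
    return surface_length_cm / lightmap_resolution


def relative_texel_count(lightmap_resolution: int, baseline: int = 64) -> float:
    """Lightmaps are square, so texel count (and memory) grows with resolution squared."""
    return (lightmap_resolution / baseline) ** 2


if __name__ == "__main__":
    FLOOR_CM = 400.0  # hypothetical 4 m floor; substitute the real mesh size
    for res in (64, 128, 512, 2048):
        print(f"{res:>5}: {texel_size_cm(FLOOR_CM, res):6.3f} cm per texel, "
              f"{relative_texel_count(res):5.0f}x the texels of 64")
```

Each doubling of resolution halves the texel size but quadruples the texel count, which is why it pays to spend high resolutions on shadow-receiving surfaces such as floors rather than on everything.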
I then added a directional light and experimented with its colour, temperature, direction and intensity until I found the effect I was after. I then linked it to the sky sphere so that the sky updates to match, as the sky sphere uses the directional light as its reference for the sun. Next I added a point light where there was a fire, to add more light to the particle effect, adjusting its intensity, colour and attenuation radius.

I then wanted to add low-lying fog, as the level is water-based and fog would be seen there, so I added an Exponential Height Fog. I adjusted its colour; its density; its height falloff, which is what makes the fog lie low; its opacity, which controls how dense the fog appears; and its start distance, which starts the fog a set distance from the player character.

I then added reflection spheres to the level. These capture and reflect the light from around the map, which is great when the level has materials with specular reflection (mirror-like light) or metallic materials that reflect light. I placed one in front of the metal gates in the level so that more light bounces and reflects off them, and reduced its radius, as I only wanted the reflections to focus on the gates.

Shadow Frustums

Shadow frustums show the direction in which dynamic shadows are cast onto movable objects. They are drawn as red, orange and green boxes depending on distance within the attenuation radius. I can get a visual representation of how the shadows will look by enabling shadow frustums in the advanced options, and then adjust my lights to cast dynamic shadows in the direction I want.

Distance Field Ambient Occlusion

As I am planning a moving sky light in my level for a day and night cycle, I needed to look into distance field ambient occlusion.
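Unreal's distance field ambient occlusion traces cones through per-mesh signed distance fields. The Python sketch below shows the underlying idea with a simpler, widely used technique: step a few times along the surface normal and compare how far away the nearest surface should be on open ground with what the distance field actually reports. It is an illustration on a hypothetical scene (a sphere resting on a ground plane), not Unreal's implementation; the step count, step size and strength are assumed tuning values.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical test scene: a unit sphere resting on a ground plane at y = 0.
SPHERE_CENTRE: Vec3 = (0.0, 1.0, 0.0)
SPHERE_RADIUS = 1.0


def scene_sdf(p: Vec3) -> float:
    """Signed distance from p to the nearest surface (negative inside geometry)."""
    ground = p[1]
    sphere = math.dist(p, SPHERE_CENTRE) - SPHERE_RADIUS
    return min(ground, sphere)


def distance_field_ao(p: Vec3, normal: Vec3, steps: int = 5,
                      step_size: float = 0.1, strength: float = 3.0) -> float:
    """Ambient visibility at p: 1.0 means fully open, lower means more occluded."""
    occlusion = 0.0
    weight = 1.0
    for i in range(1, steps + 1):
        h = step_size * i
        sample = tuple(pc + nc * h for pc, nc in zip(p, normal))
        # On open, flat ground the nearest surface is the one we started on, h away.
        # If the field reports something closer, nearby geometry is occluding p.
        occlusion += weight * (h - scene_sdf(sample))
        weight *= 0.5  # samples close to the surface count for more
    return max(0.0, min(1.0, 1.0 - strength * occlusion))


if __name__ == "__main__":
    up: Vec3 = (0.0, 1.0, 0.0)
    print("open ground:      ", distance_field_ao((10.0, 0.0, 0.0), up))
    print("beside the sphere:", round(distance_field_ao((1.2, 0.0, 0.0), up), 3))
```

Because the distance field is evaluated at runtime rather than baked into a lightmap, the darkening near the sphere would update if the sphere moved, which is what makes this family of techniques suitable for a moving sky light.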
A sky light uses a signed distance field volume, precomputed around each mesh, to generate ambient occlusion. This supports dynamic scene changes, which then update the ambient occlusion in the scene: it adds darker occlusion over the meshes where they shadow each other, making them look more realistic, and it can change in real time.

Task 1 – Night-time scene

Continuing from the last task, I wanted to turn this scene into a night-time scene to practise lighting a scene at night. A night scene poses some challenges: I have to think more about where the light comes from, as there is little light at night, and how to highlight key areas without bleaching the map with light.

I started by changing the exponential height fog's colour, falloff height and density to create a more black-and-white backdrop, adding white fog in the distance, as white fog bounces the light better. I then changed the directional light, lowering its intensity and the sun brightness, as I wanted a night-time sky. I also ticked the option to refresh the sky's colours so that it updates to night time. To get night time I had to rotate the directional light upwards, which shifts the day sky to a night sky.

To add light to my scene I decided to add fire pits and fire torches, as it is an old Greek-style ruin. I placed these on top of the pillars, adding a mesh and a fire effect to each. I then created a light for each, setting the intensity between 20,000 and 40,000, adding a temperature to the colour, and adding a source radius to soften the light from where it is emitted.

I then wanted to add highlight lights, as I felt the map was dark in places and wasn't highlighting key assets. Instead of the earlier technique of lighting all the static meshes, I decided to use point lights to highlight these key areas.
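The fire lights and the highlight point lights are tuned mainly through intensity and attenuation radius, and the spot lights in the practice and indoor scenes add inner and outer cone angles. The Python sketch below shows how those settings combine. The distance term is modelled on the windowed inverse-square falloff described in Epic's "Real Shading in Unreal Engine 4" course notes, and the cone term is a common smooth spot-light falloff; both are illustrations of the maths rather than engine code, the function names are hypothetical, and the result is a relative value, not a calibrated brightness.

```python
import math


def distance_falloff(distance_cm: float, attenuation_radius_cm: float) -> float:
    """Inverse-square falloff, windowed so it reaches exactly zero at the attenuation radius."""
    if distance_cm >= attenuation_radius_cm:
        return 0.0
    window = (1.0 - (distance_cm / attenuation_radius_cm) ** 4) ** 2
    return window / (distance_cm ** 2 + 1.0)  # +1 avoids dividing by zero at the light itself


def spot_cone_falloff(angle_deg: float, inner_cone_deg: float, outer_cone_deg: float) -> float:
    """1 inside the inner cone, 0 outside the outer cone, smooth in between.

    Angles are measured from the spot light's axis.
    """
    cos_angle = math.cos(math.radians(angle_deg))
    cos_inner = math.cos(math.radians(inner_cone_deg))
    cos_outer = math.cos(math.radians(outer_cone_deg))
    if cos_inner - cos_outer < 1e-6:  # inner == outer: hard-edged cone
        return 1.0 if angle_deg <= outer_cone_deg else 0.0
    t = (cos_angle - cos_outer) / (cos_inner - cos_outer)
    return min(1.0, max(0.0, t)) ** 2


def light_at(intensity: float, distance_cm: float, attenuation_radius_cm: float,
             angle_deg: float = 0.0, inner_cone_deg: float = 180.0,
             outer_cone_deg: float = 180.0) -> float:
    """Relative light reaching a point; the default 180-degree cones behave like a point light."""
    return (intensity * distance_falloff(distance_cm, attenuation_radius_cm)
            * spot_cone_falloff(angle_deg, inner_cone_deg, outer_cone_deg))


if __name__ == "__main__":
    # Hypothetical highlight light: 1000 cm attenuation radius, sampled at a few distances.
    for d in (100.0, 400.0, 800.0, 1000.0):
        print(f"{d:6.0f} cm: {light_at(40000.0, d, 1000.0):.4f}")
```

The window term is why shrinking the attenuation radius stops a light "lighting up more than needed": beyond the radius its contribution is exactly zero, so it also costs nothing to shade there.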
I used a point light with Cast Shadows unticked, so it only adds light to the model without casting shadows. I then adjusted the intensity and attenuation radius so it didn't light up more than needed. After adding the highlight lights, I placed reflection spheres in the level to reflect light around the map, get light bouncing off the metal and add depth to the assets. Below is a screenshot of the final render of the scene.

Indoor scene

For this part of the project I want to take what I learned in task one about lighting a scene and apply it to my own scenes. I want to make an indoor scene based on the spaceship, and the other scene will be based on the alien planet I created in an earlier unit.

I started by opening my scene and adding a sky light, which adds a basic, gentle light to the scene. I did not add a directional light, as the scene is indoors with no windows. I then wanted to change how the sky sphere looked. I duplicated the material the sky uses and changed it so that the stars display a picture of space, then edited the sky sphere blueprint to use this material instead of the default and changed the material on the sky sphere mesh to the space one. After this I experimented with the colours, turned off the sun brightness, and adjusted the star brightness and clouds until I found a good balance for the space material.

I then added a post process volume to enhance the scene. I already had basic lighting in the scene when I added it, so I could then remove some of that lighting and adjust the rest to fit with the new post-processing.
I started with colour grading. The aim was a blue sci-fi look to give the scene a high-tech feel, so I shifted the saturation towards light blue and then adjusted the contrast, gamma and gain by increasing or decreasing their main values. I then wanted the light sources to have a blue glare when the player camera looks at them, so I added a slight vignette, a standard bloom with a low threshold, and auto exposure. I changed the depth of field so that objects far from the player camera are blurred. I then added an ambient cubemap to increase the reflections from materials and the light that bounces off them, and increased the ambient occlusion to create stronger shadows on materials that have these textures. Finally, I increased the global illumination to give a basic light level that I could refine with point and spot lights.

Emissive lighting

For my indoor scene I want emissive light to be the main light source; as it is a sci-fi scene, I felt this would suit it better. I selected all my assets and enabled emissive for static lighting, which lets the lighting build treat the emissive materials as light sources and create fake light from them. I then created a basic emissive material: a 3-vector (colour) node multiplied by a 1-vector (scalar) node that controls how bright the emission is. I applied this material to the assets and built the lighting. Below are three images of different emissive settings, each increasing in strength; the last is so strong it lights up the whole world. I decided to go with the medium setting and light the rest of the scene with spot and point lights.

After this I wanted to create a more advanced emissive light: a hologram that looks as if it is being projected.
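The colour-grading controls described above (saturation, contrast, gamma and gain) are applied one after another to every pixel. The Python sketch below loosely follows the order I understand UE4's colour-correction shader to use: saturation, contrast around mid-grey, gamma, then gain and offset. It is an illustration, not the engine's code; the Rec. 709 luma weights, the example pixel and the "light blue" values are assumptions.

```python
from typing import Tuple

RGB = Tuple[float, float, float]

# Rec. 709 luma weights: an assumption, as UE4 computes luma in its own working colour space.
LUMA_WEIGHTS: RGB = (0.2126, 0.7152, 0.0722)
MID_GREY = 0.18  # contrast pivots around 18% grey


def color_grade(rgb: RGB,
                saturation: RGB = (1.0, 1.0, 1.0),
                contrast: RGB = (1.0, 1.0, 1.0),
                gamma: RGB = (1.0, 1.0, 1.0),
                gain: RGB = (1.0, 1.0, 1.0),
                offset: RGB = (0.0, 0.0, 0.0)) -> RGB:
    """Apply saturation, contrast, gamma, then gain and offset to a linear RGB colour.

    Each control is per channel, so a slightly bluer saturation or gain tints the image.
    """
    luma = sum(c * w for c, w in zip(rgb, LUMA_WEIGHTS))
    graded = []
    for c, s, k, g, gn, off in zip(rgb, saturation, contrast, gamma, gain, offset):
        c = max(0.0, luma + (c - luma) * s)  # saturation: blend from grey towards the colour
        c = (c / MID_GREY) ** k * MID_GREY   # contrast: power curve pivoting on mid-grey
        c = c ** (1.0 / g)                   # gamma
        graded.append(c * gn + off)          # gain, then offset
    return (graded[0], graded[1], graded[2])


if __name__ == "__main__":
    scene_colour = (0.30, 0.25, 0.20)  # hypothetical warm-ish pixel
    sci_fi = color_grade(scene_colour,
                         saturation=(0.8, 0.9, 1.2),  # hypothetical "light blue" saturation
                         contrast=(1.1, 1.1, 1.1),
                         gain=(0.95, 1.0, 1.1))
    print(tuple(round(c, 3) for c in sci_fi))
```

Pivoting contrast on mid-grey means raising it darkens shadows and brightens highlights without shifting the overall exposure, which is why contrast and gain can be tuned fairly independently.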
I started by using the engine's light beam mesh, which uses a gradient to fake god rays and light beams, and placed these on a spot light. I then created a material that looks like individual light beams, using the engine's noise textures and making them flicker to give the impression of dust in the air. Next I created the hologram material, which also uses emissive light: it takes an image and covers it with a colour that I rotate, then combines simple white textures with the engine's noise textures to create flickering lines running up the hologram.

Once the emissive lighting looked how I wanted, I added spot lights to add more light from the ceiling lights, adjusting the light colour, inner and outer cone angles and attenuation radius, and adding a source radius. As I felt they still just looked like spot lights, I gave them more depth with a light function material, which takes a greyscale image and projects light and shadow onto the floor. I chose light functions over IES profiles because I felt I could do more with them: IES profiles do recreate the pattern real bulbs cast, but they don't show shadows such as those from a grate. Below are the final three images.

CGI Rendering

I wanted to learn how to use live rendering and export a movie clip of what is happening in the scene. I started by adding a level sequence to my scene, which gives me control of a camera and lets me control everything that happens in the scene frame by frame, as I would in animation. I used the camera component, piloted the camera around the level, and set a key at every major turning point so that the camera would build its route.
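Setting a key at each turning point means the sequencer fills in the camera's path between those keys. The Python sketch below shows the idea with straight-line (linear) interpolation; Unreal's sequencer typically uses smooth curves between keys, so this is a simplification, and the key times, positions and function names are hypothetical. It also models a play rate, since a play rate below 1 slows the whole sequence down (0.5 halves the speed).

```python
from bisect import bisect_right
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Key = Tuple[float, Vec3]  # (time in seconds, camera position)


def lerp(a: Vec3, b: Vec3, t: float) -> Vec3:
    return (a[0] + (b[0] - a[0]) * t,
            a[1] + (b[1] - a[1]) * t,
            a[2] + (b[2] - a[2]) * t)


def camera_position(keys: List[Key], wall_time: float, play_rate: float = 1.0) -> Vec3:
    """Camera position at a playback time, given keys sorted by time.

    play_rate scales how fast sequence time advances: 0.5 plays at half speed.
    """
    t = wall_time * play_rate
    times = [time for time, _ in keys]
    if t <= times[0]:
        return keys[0][1]
    if t >= times[-1]:
        return keys[-1][1]
    i = bisect_right(times, t)  # index of the first key strictly after t
    (t0, p0), (t1, p1) = keys[i - 1], keys[i]
    return lerp(p0, p1, (t - t0) / (t1 - t0))


def playback_length(keys: List[Key], play_rate: float = 1.0) -> float:
    """Wall-clock length of a sequence starting at time 0; halving the play rate doubles it."""
    return keys[-1][0] / play_rate


if __name__ == "__main__":
    route = [(0.0, (0.0, 0.0, 0.0)), (2.0, (200.0, 0.0, 0.0)), (4.0, (200.0, 200.0, 0.0))]
    print(camera_position(route, 3.0), playback_length(route, play_rate=0.5))
```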
In the sequencer I made sure to show moving objects: I let the camera focus on a flag blowing in the wind and a door opening, which shows the dynamic shadows moving in the scene. After playing the video through, I felt it was far too fast, so I added a play rate to the sequence, set keys throughout the render and halved the playback speed. Below is the video of the render I made in Unreal Engine.

Evaluation

I am going to critically evaluate the lighting I created for my project. For each task in this unit I will break the evaluation down into two parts: what went well and what didn't go so well.

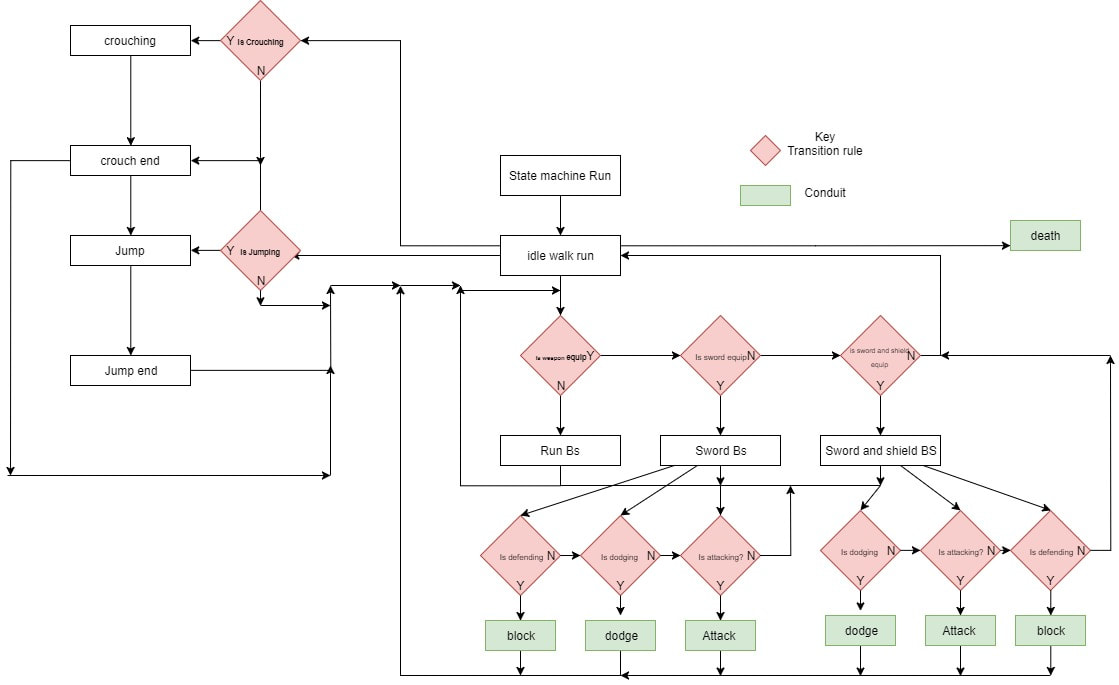

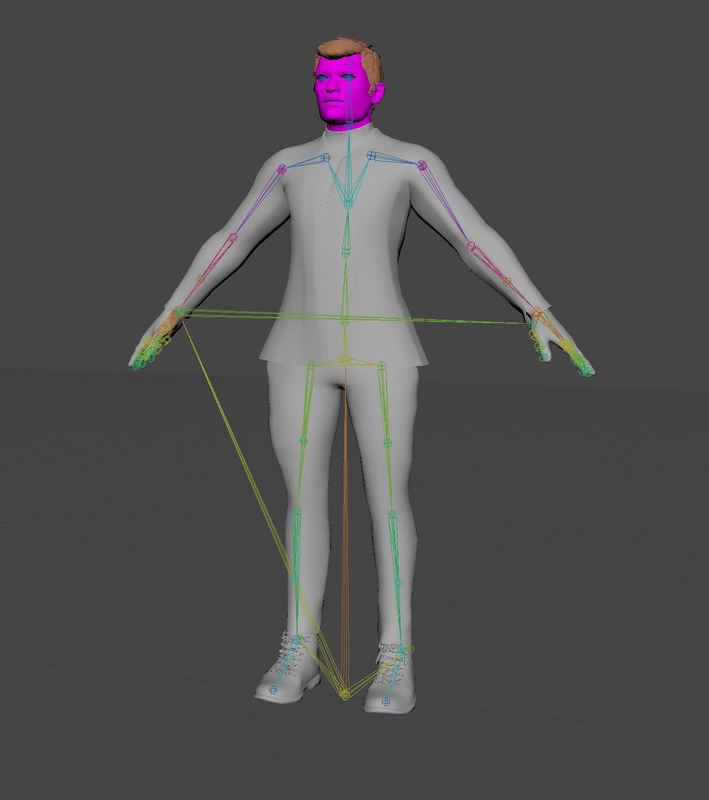

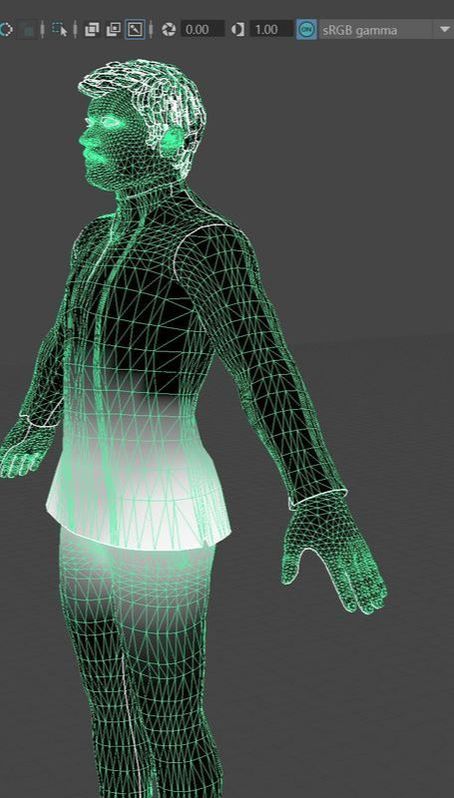

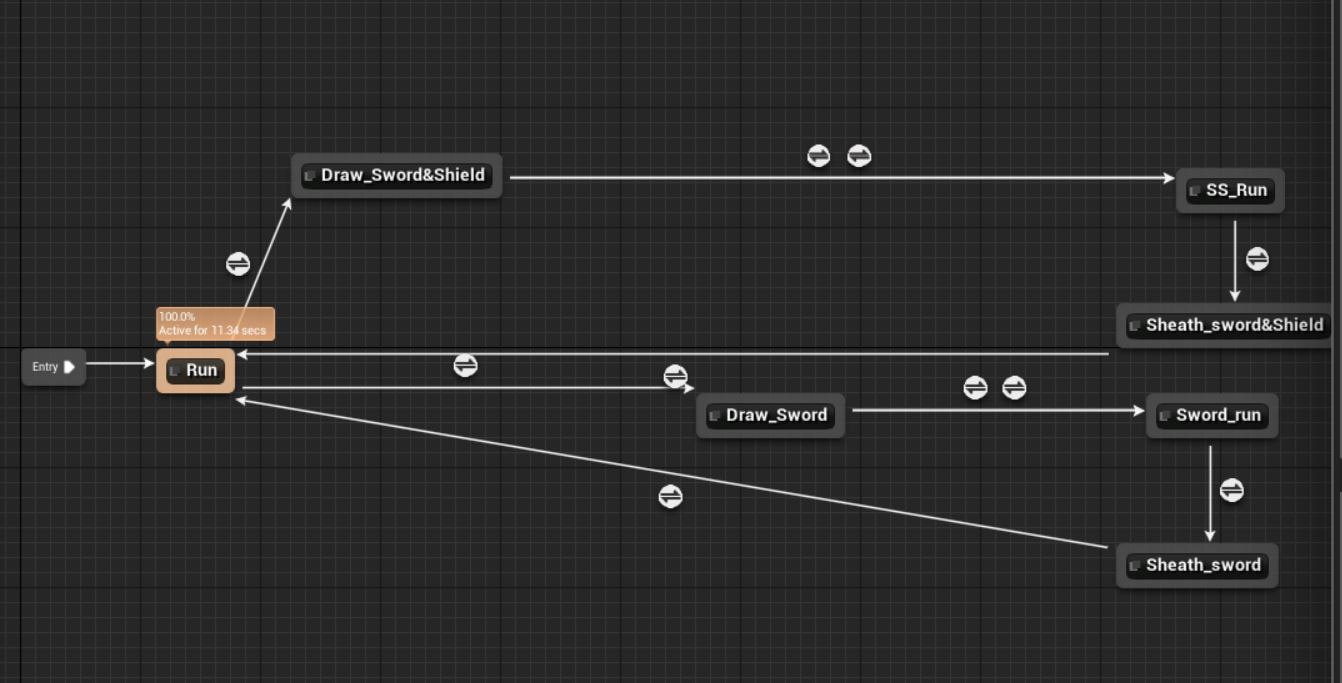

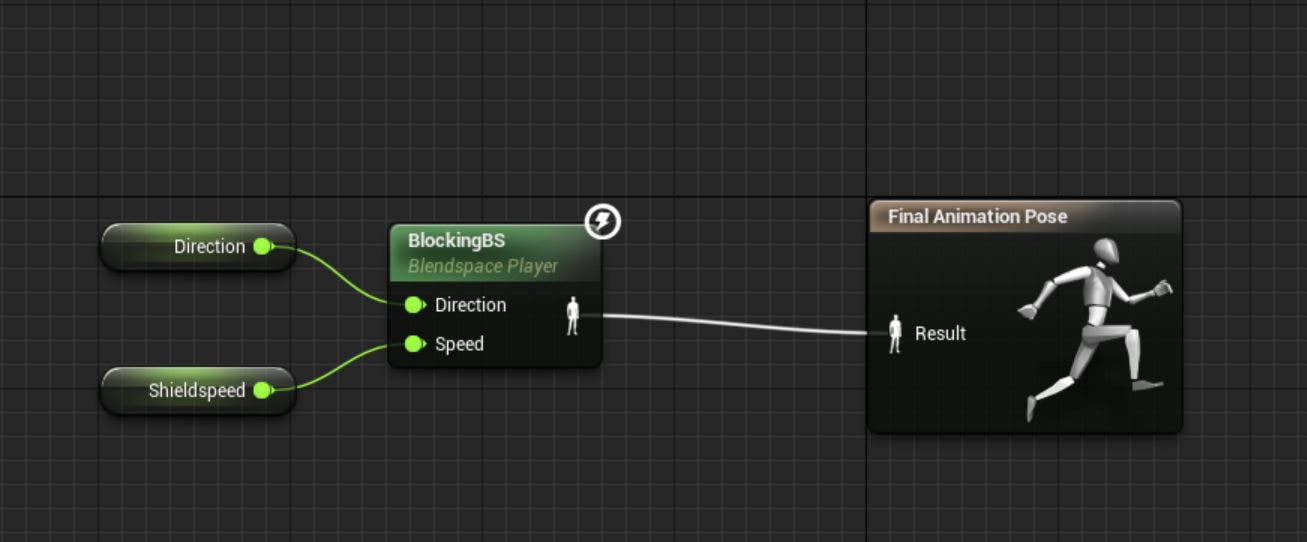

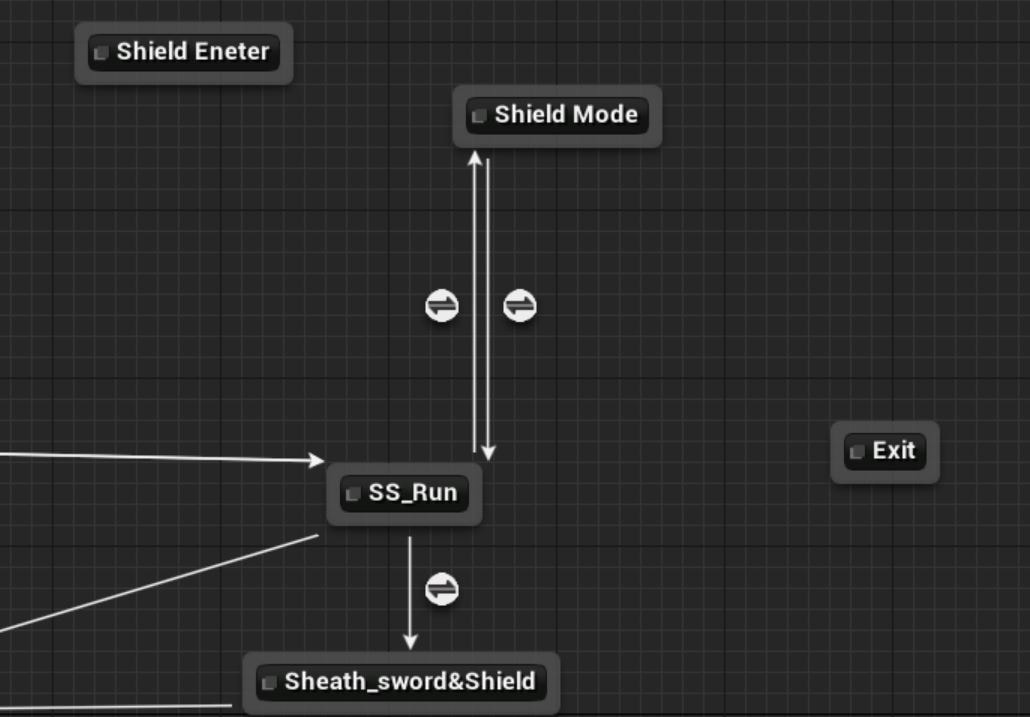

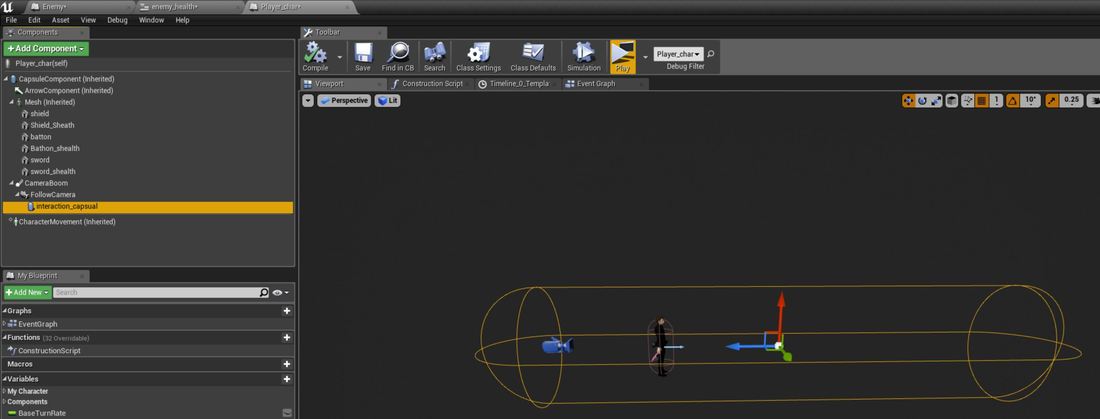

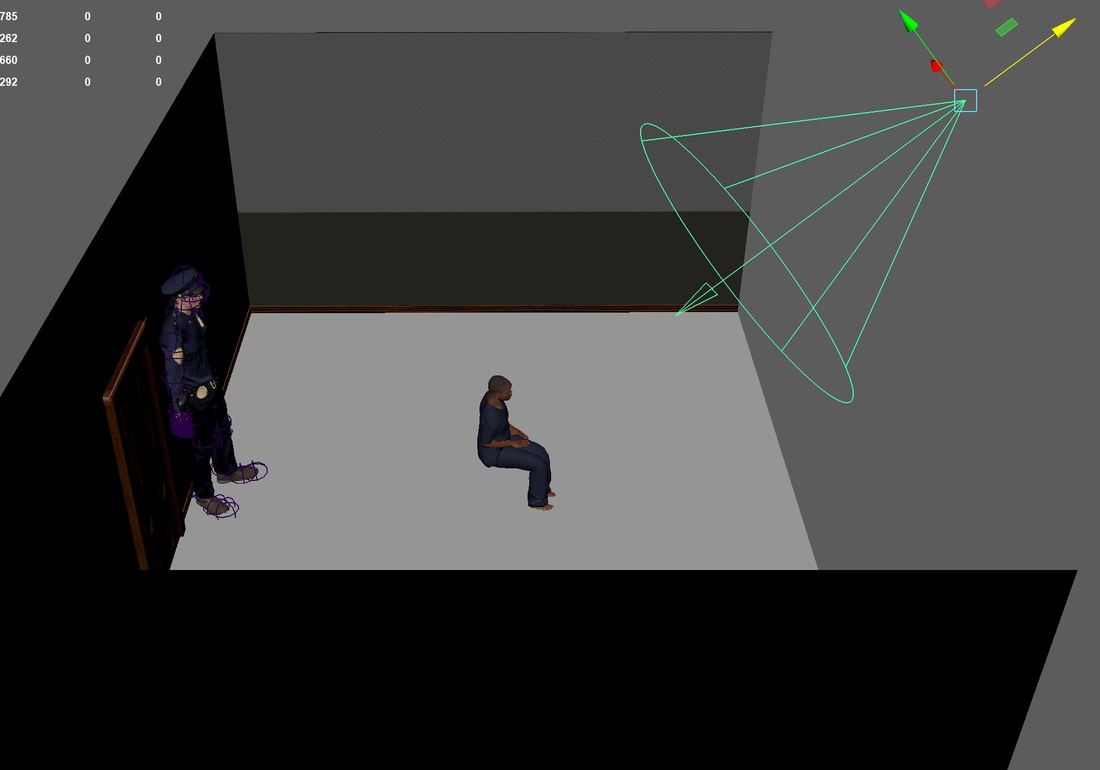

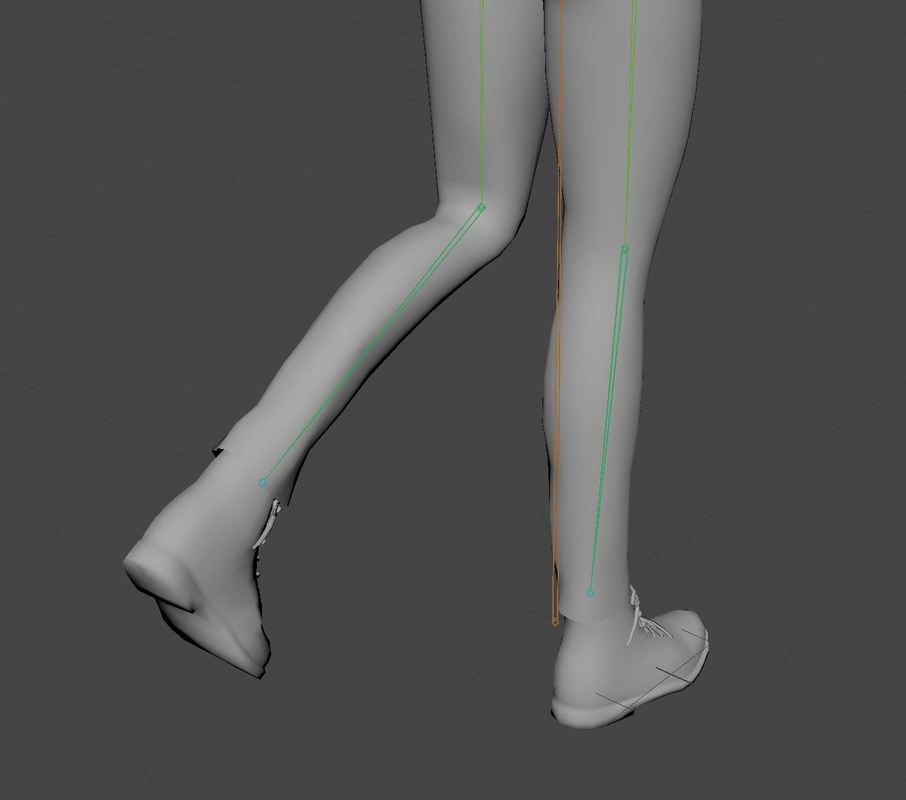

I enjoyed doing the lighting for this project a lot. I found it very interesting, and lighting a scene correctly was very satisfying, as lighting can be very complex. I have learned a lot about this crucial stage of game development, which adds a great deal of depth to a game. Overall, I found the lighting went extremely well, and I have learned a lot about lighting and how to use it correctly. I had a comfortable schedule for each area of this part of the project, as it wasn't as big as other areas.

Pre-production

This was a straightforward task. I got my ideas down in a mind map, looked at visual references of what I wanted for my game, and created a mood board from them. I then researched lighting and created a scene to test the research. I found the mind maps and mood boards straightforward, and I am happy with the final outcome. Creating a level to test the research also went well, as it helped me understand what was happening when I changed the settings I had read about. The research itself didn't go so well: I found it quite hard to understand, as there was a lot of technical information, but using the practice project helped me overcome this, and I found it easier to learn that way.

Research and project scenes

This task was about taking what I learned in task 1 and applying it to the scenes I built based on my game design document and other projects along the way. I used my mood board to create similar lighting. I found lighting my own scenes challenging at times, but the rewards were great and worth the work put in.
I found lighting the night scenes and the indoor scene much easier, as there are only a few major sources of light (light can only come from visible lights). This helped me a lot, and I think these went extremely well. The day scene on my alien planet did not go so well. I tried to see whether it would work better at night, but I still did not like the lighting on this world: I feel the map was too big, so detail lights were getting drowned out, and background meshes being bleached by the light did not help either. I did not submit these screenshots for peer review, as I felt they did not show my best work.

CGI Render

For this task I created a video render of one of my scenes and then gathered feedback on selected screenshots about how good the lighting in the scene was. This task went okay. It was only a small task, but I learned a lot about using the sequencer in Unreal Engine, which will be a useful tool in the future, and getting feedback from peers beyond a small, select group at college was also beneficial. I felt the peer review I got back from the public forums wasn't great: I didn't get much feedback, which didn't help me understand how to improve my lighting. In hindsight, though, this could be seen as a positive, as people couldn't find much fault with my screenshots.

Common lighting errors

As part of my evaluation I will also discuss the common lighting errors I ran into while lighting my scenes.

Black lines between meshes – After building lighting, I noticed that some meshes in my indoor scene had black lines between them. I looked into this and found it is a common issue caused by a low lightmap resolution or a poor lightmap UV layout. In my case I just needed to increase the lightmap resolution, which fixed the problem.
Red X over light – I saw this a few times in my scenes. It was down to having too many lights overlapping in a small space: the red X marks a stationary light that cannot get static shadowing because 5 or more stationary lights overlap in that area, so it falls back to much more expensive dynamic shadows, which can cripple the game's performance.

Light bleeding through meshes – I had this error when I was building lighting indoors and light was coming through my planes. A plane only has one visible face and the other side is black; Unreal Engine culls the back side because it is not needed, which saves on performance, so if the light comes from that side it shines straight through the mesh. To fix this error I needed to select the model and enable Use Two Sided Lighting, or create a two-sided material.

Conclusion

Overall, I really enjoyed lighting, and I feel this is something I would enjoy going into in the industry. Although getting the lighting as close to realistic as possible was extremely difficult, I found it very rewarding and fun to achieve, and I really enjoyed creating night time and indoor scenes. I feel I have learned a lot about the lighting pipeline, and although I found the technical side hard to understand, I worked it out through practical solutions and understood it better. The only disappointment I had in this unit was the feedback from the public forums on my lighting screenshots; I felt I could have done with more criticism to understand how to improve. Overall, though, I feel this unit went really well, and I would enjoy doing this in a job as well as learning more about the Sequencer. The time schedule for this part of the project worked really well: I had plenty of time for each section and was able to finish on time or before the deadline date.
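
To make the red X rule above more concrete, here is a small Python sketch of the idea as I understand it, not Unreal's actual code. It assumes each stationary light is a sphere (position plus attenuation radius), that two lights overlap when their spheres intersect, and that there are 4 shadow channels to share out, which matches the "5 or more lights" threshold. Each light greedily takes the lowest channel not already used by a light it overlaps, and any light left without a channel is the one that would show the red X. `Light` and `assign_channels` are hypothetical names for illustration.

```python
import math
from dataclasses import dataclass
from typing import List, Optional

MAX_CHANNELS = 4  # assumption: matches the "5 or more overlapping lights" threshold


@dataclass
class Light:
    name: str
    x: float
    y: float
    z: float
    radius: float                  # attenuation radius
    channel: Optional[int] = None  # shadow channel, None if it could not get one


def overlaps(a: Light, b: Light) -> bool:
    """Two lights overlap when their influence spheres intersect."""
    return math.dist((a.x, a.y, a.z), (b.x, b.y, b.z)) < a.radius + b.radius


def assign_channels(lights: List[Light]) -> List[Light]:
    """Greedily give each light the lowest free channel; return the 'red X' lights."""
    red_x = []
    for i, light in enumerate(lights):
        # Channels already taken by earlier lights that overlap this one.
        used = {other.channel for other in lights[:i]
                if other.channel is not None and overlaps(light, other)}
        free = [c for c in range(MAX_CHANNELS) if c not in used]
        if free:
            light.channel = free[0]
        else:
            red_x.append(light)
    return red_x


lamps = [Light(f"lamp{i}", 0.0, 0.0, 0.0, 100.0) for i in range(5)]
print([light.name for light in assign_channels(lamps)])  # ['lamp4']
```

Moving one of the lamps away or shrinking its radius removes the overlap, which is the same fix as spreading the lights out in the scene.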
Task timeline

Pre-production. Start date: 1/2/18. End date: 7/2/18. I have given myself a week to complete the pre-production: creating a mind map, basic research into how state machines and game animation work, mood boards and flow charts.

Basic character implementation. Start date: 7/2/18. End date: 14/2/18. I have given myself a week to set up basic character implementation, such as setting up the character to use the Unreal skeleton, setting up basic walk blend spaces and basic state machines.

Attacking and defending implementation. Start date: 12/2/18. End date: 21/2/18. I have given myself just under two weeks to add a combo state using two different attack styles, as well as a defending state.

Boss and damage set-up. Start date: 21/2/18. End date: 1/3/18. I have given myself just under two weeks to set up an NPC boss that can attack and dodge the player, and to add damage so that the NPC and the player character can damage each other.

Pre-production

For this part of the project I am going to look into the pre-production of character implementation. I am going to research how to implement characters in Unreal Engine, then create a mind map and mood board, and then look into other areas that can help me later in the project. I created a mind map that looks into the different types of animation I would use for my character, covering action-type movement, attacking and defending, and miscellaneous movement to give the character's movement a unique look. After getting my ideas down in a mind map, I created a mood board from them so that I could visually represent what I wanted to achieve in this part of my project. I then researched how state machines work, and from this I created a flow chart to help me build the state machines for my character.

Character implementation

This part of the project is all about implementing the character in Unreal Engine using blueprints, animations, state machines and inputs.
By combining these, I will have a player that can fight, move and interact seamlessly. I started off by exporting the third person character and opening it in Maya, as I want to use the third person skeleton: I plan to use animations that are based on this skeleton. I then imported the character I wanted to use and bound the skin, as I did in unit 74. Once everything was in Unreal, I opened the third person skeleton, went to the Retarget Manager and added a Humanoid rig, and then opened the player rig and did the same. I then selected all my animations and retargeted them from the default skeleton to my skeleton; as these were identical skeletons, there was not much to change in the targets or poses. After this I added sockets to the skeleton and previewed the weapon meshes on them so I could line them up in the correct positions, and then I added a sheathed version of each socket for when the weapon is not equipped. I then went to the character, added these models and attached them to the sockets, with one equipped and one sheathed copy of each weapon; I made the equipped ones invisible. After this I made three idle-to-run blend spaces: one for the normal walk with no weapon, one for the sword and shield walk, and another for the sword walk. I then added states to the player animation blueprint that start with the default idle/run, and depending on which Boolean variable is set from the character blueprint, one of the other two walks is selected. I then went back to the character blueprint and used Booleans and branches to set up the visibility of the meshes, so that when the character draws or sheathes a weapon, the in-hand weapon is made visible or invisible depending on the action, and vice versa for the sheathed weapons. I then used Booleans to determine which weapon was active, so that when the other weapon is selected, the character puts the current weapon away and equips the selected one.
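
The equip and sheath logic above boils down to a pair of visibility flags per weapon plus a record of which weapon is active. Below is a minimal Python sketch of that idea. `WeaponSlot`, `CharacterWeapons` and the blend space names are hypothetical stand-ins for the blueprint variables and meshes, and the choice that selecting the drawn weapon again sheathes it is my assumption rather than something described above.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class WeaponSlot:
    """One weapon with an in-hand mesh and a sheathed mesh (hypothetical model)."""
    in_hand_visible: bool = False   # the equipped copy starts invisible
    sheathed_visible: bool = True   # the sheathed copy starts visible


@dataclass
class CharacterWeapons:
    slots: Dict[str, WeaponSlot] = field(default_factory=lambda: {
        "sword_and_shield": WeaponSlot(),
        "sword": WeaponSlot(),
    })
    equipped: Optional[str] = None  # None means unarmed

    def _set_drawn(self, name: str, drawn: bool) -> None:
        # In-hand and sheathed meshes are always opposites of each other.
        slot = self.slots[name]
        slot.in_hand_visible = drawn
        slot.sheathed_visible = not drawn

    def select(self, name: str) -> None:
        """Sheathe the current weapon (if any) and draw the selected one."""
        if self.equipped == name:       # assumption: selecting it again sheathes it
            self._set_drawn(name, False)
            self.equipped = None
            return
        if self.equipped is not None:
            self._set_drawn(self.equipped, False)
        self._set_drawn(name, True)
        self.equipped = name

    def locomotion_blend_space(self) -> str:
        """Which idle/run blend space the animation blueprint would pick."""
        return {None: "unarmed_run",
                "sword_and_shield": "sword_shield_run",
                "sword": "sword_run"}[self.equipped]
```

Keeping a single `equipped` value instead of one Boolean per weapon makes it impossible for both weapons to be drawn at once, which is the kind of switching bug extra branch conditions otherwise have to guard against.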

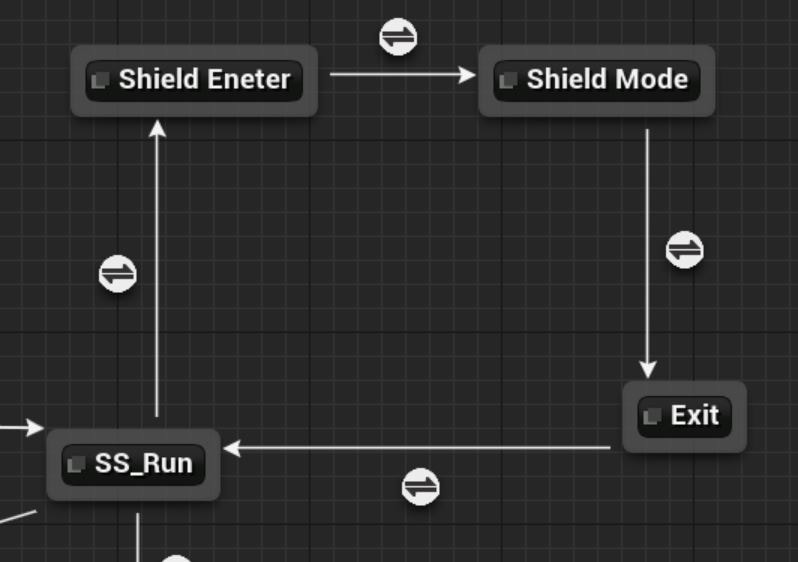

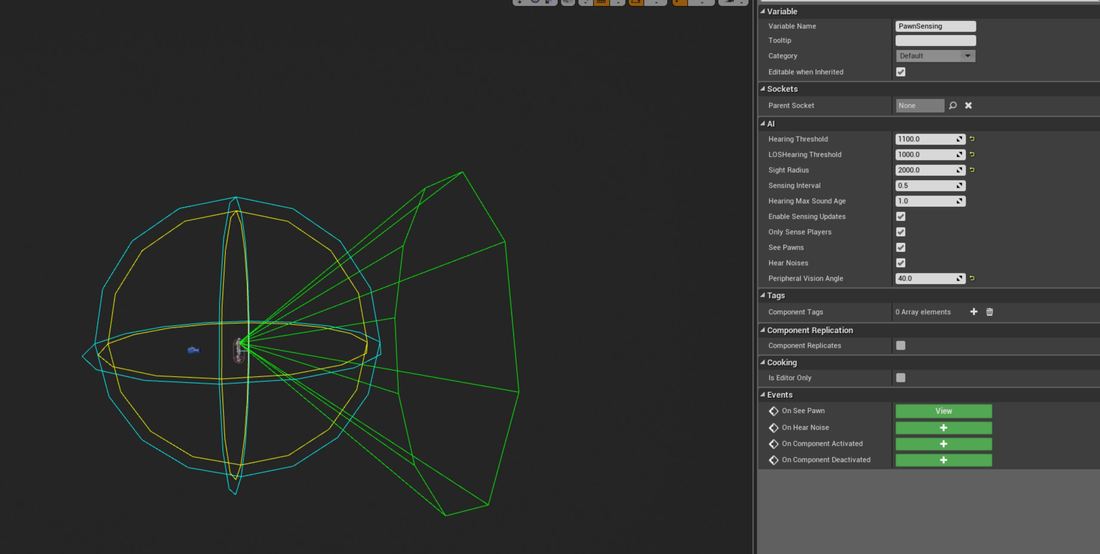

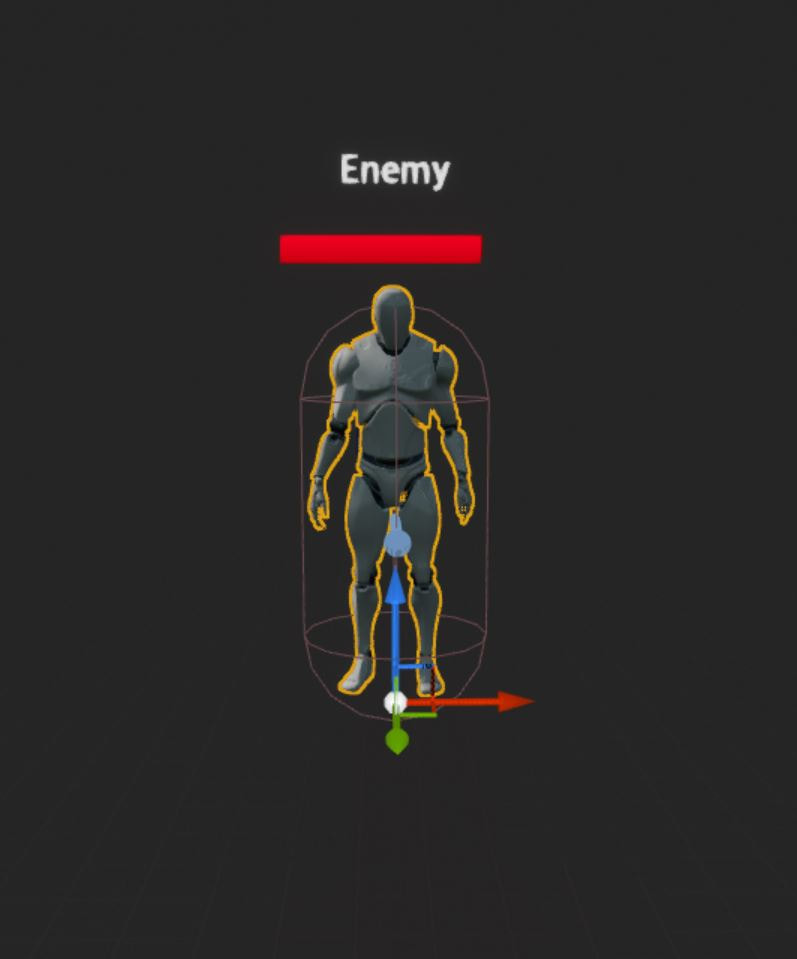

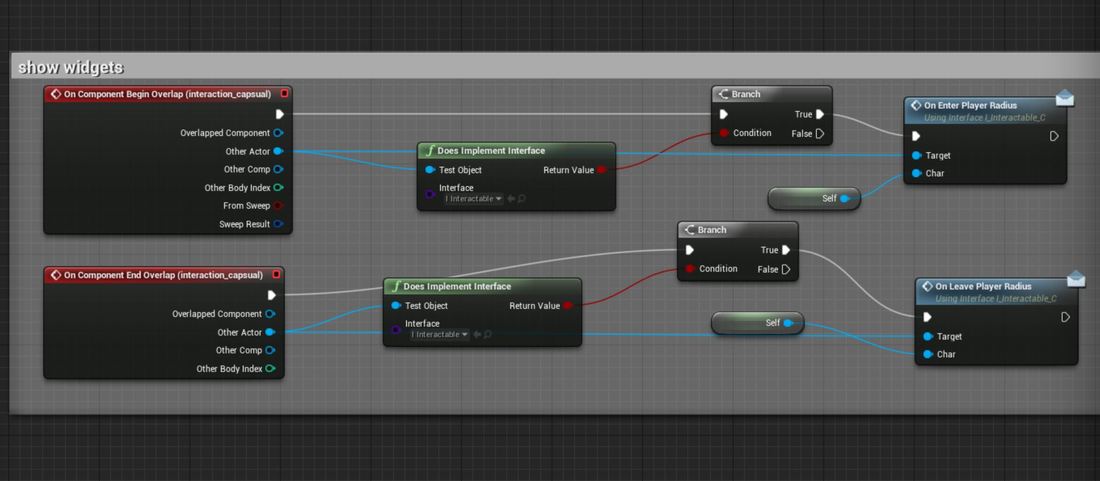

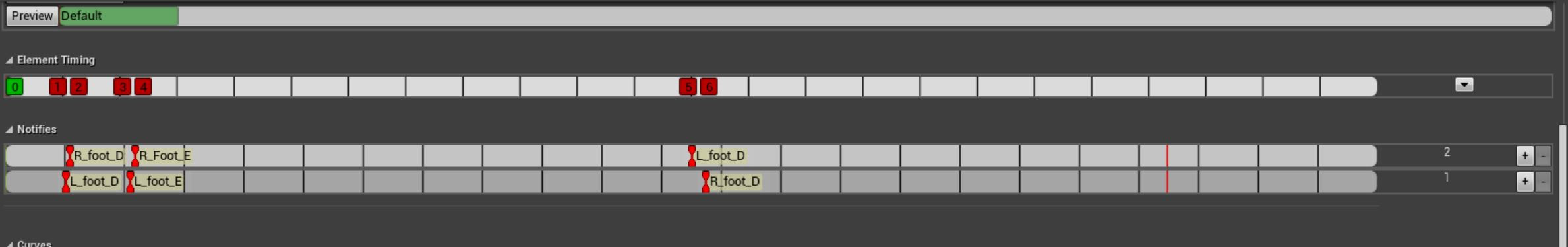

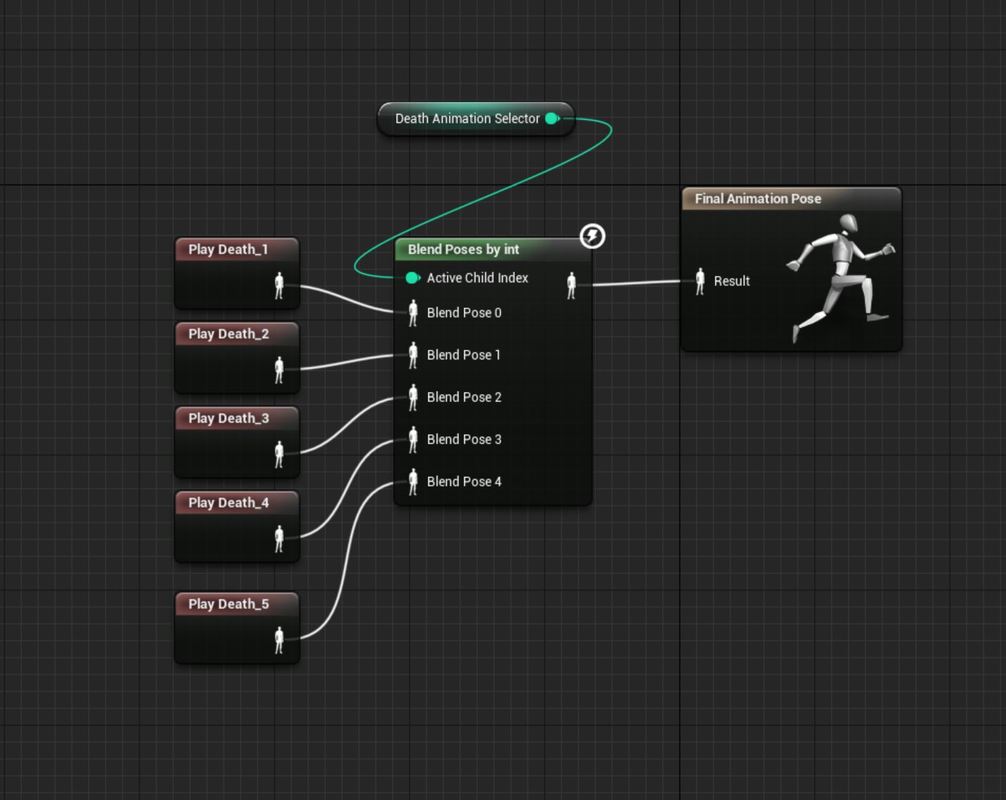

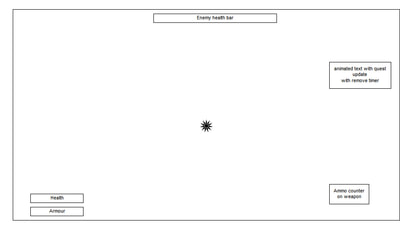

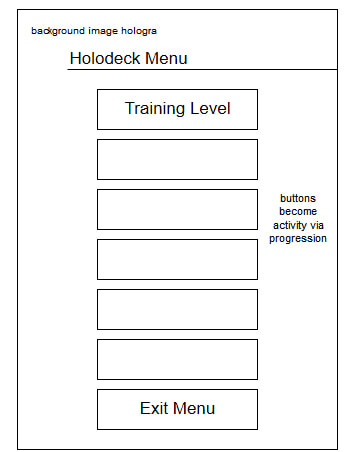

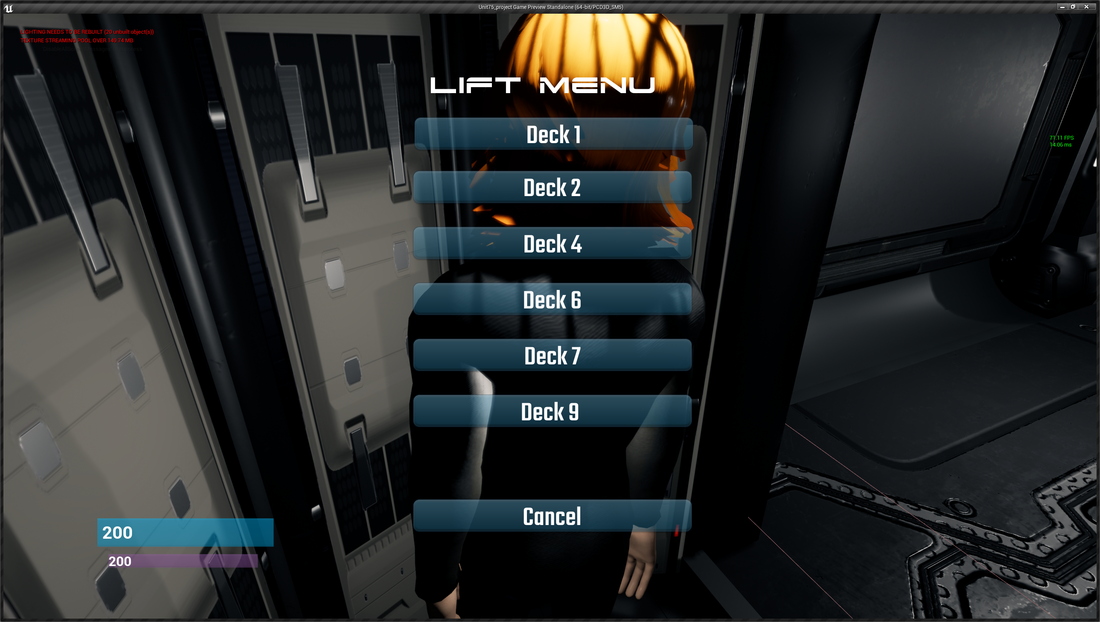

Attacking and defending implementation

I started off by selecting the animations I wanted to use for the attacks with both weapons, then right-clicked on them and turned them into animation montages. I wanted to use animation montages because they let me take control of an animation and decide when it plays and stops using blueprint scripting. They are also good for when I want to loop an animation or blend it into several others. I then needed to set up the attack. I used the left mouse button and ran condition checks to see which weapon was equipped and that the player is not switching weapons, then another condition to see if the player can attack. After these checks I placed a Switch on Int node, created an integer variable and set it to one. I then added two custom events, one for attacking and one for resetting the attack.

For defence I wanted a blocking stance. I started off by creating a multi-directional blend space containing a forward walk, a backward walk, left and right strafes, and an idle position to blend these animations from; depending on the direction and speed the character is moving in, the animations blend accordingly. After this I went to the animation graph, into my motion state, and next to the sword and shield run I added three more nodes: entering shield mode, shield mode itself, and exiting. For the transition conditions I added a Boolean for blocking and not blocking. In the shield mode state I added the new blend space, used the speed variable, and created a new variable for the direction. I then set these in the event graph using Calculate Direction, getting the speed from the velocity's vector length and using the actor's rotation as the base rotation. In the character blueprint I then added a new input for blocking, with an on and an off side, where the off side reverses what the on side sets.
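
The speed and direction that feed the blocking blend space can be sketched outside the engine. This Python version is an approximation of what the Calculate Direction node returns, assuming Unreal's axis convention (X forward, Y right, yaw in degrees) and ignoring vertical velocity; `speed_and_direction` is a hypothetical name. Positive angles mean strafing right, negative mean strafing left, and an angle of about 180 means walking backwards.

```python
import math


def speed_and_direction(vx, vy, actor_yaw_deg):
    """Return (speed, direction) for the blocking blend space.

    speed: length of the horizontal velocity (the vector length).
    direction: signed angle in degrees between velocity and facing, -180..180,
    positive when moving to the character's right (assumes X forward, Y right).
    """
    speed = math.hypot(vx, vy)
    if speed == 0.0:
        return 0.0, 0.0  # standing still: no meaningful direction
    yaw = math.radians(actor_yaw_deg)
    forward = (math.cos(yaw), math.sin(yaw))
    right = (-math.sin(yaw), math.cos(yaw))
    # How much of the movement is along the facing and along the right side.
    forward_amount = (vx * forward[0] + vy * forward[1]) / speed
    right_amount = (vx * right[0] + vy * right[1]) / speed
    return speed, math.degrees(math.atan2(right_amount, forward_amount))


print(speed_and_direction(0.0, 100.0, 0.0))  # strafing right at speed 100
```

Because the blend space takes both values as axes, the same forward, backward and strafe clips cover every diagonal without extra states.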
After this I set the blocking Boolean to enable the blocking state in the animation blueprint and lowered the max walk speed so the character is slower in blocking mode. I then turned off Orient Rotation to Movement on the character movement component, which stops the character turning to face the direction it moves in, so that it can strafe. I then enabled Use Controller Rotation Yaw, which makes the character turn with the camera when the player turns.

Error

I then tested the blocking function and noticed that when I blocked and walked, my player would glide. Below is a video of the error. After looking into this error again, I realised why the player was gliding: I was calling an idle animation before and after going to the blend space. The blend space already contains an idle, so it did not need to be called; the glide happened because the idle animation is a still pose lasting 3 seconds, and the player glided until that animation was over. To fix this, I removed the entry and exit states from the motion graph.

Adding unique features

After creating my attacking and defending, I looked into how I could improve my attack system. I implemented the secondary attack system I had written about as a way to improve my combo: when the player is attacking and presses the secondary attack, the system finds which combo step has been played and plays a unique animation matched to that step. After creating my primary and secondary attacks, I wanted to add unique features to give the player more feedback. One thing I noticed is that when I walk and attack, the player slides on the floor. One way to fix this is to add a force that pushes the player along the floor, which gives the impression that the player is moving while attacking and is a great form of feedback.
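
The combo and secondary attack flow can be modelled as a small state object: an integer picks the montage (the Switch on Int), a flag stops a new attack starting until a notify opens the combo window, and the secondary attack looks up which step was last played. This is a minimal Python sketch under those assumptions; the montage names, `ComboSystem` and `on_combo_window` are hypothetical, and requiring the combo window to be open before a secondary attack is my assumption.

```python
class ComboSystem:
    """Hypothetical model of the blueprint combo driven by an integer step."""

    def __init__(self, primary, secondary):
        self.primary = primary        # one montage per combo step
        self.secondary = secondary    # unique follow-up montage per step
        self.attack_count = 1         # the integer variable, starting at one
        self.last_step = None         # step that was played most recently
        self.can_attack = True
        self.switching_weapon = False

    def on_primary_pressed(self, weapon_equipped):
        """Left mouse button: run the checks, then pick the montage (Switch on Int)."""
        if not weapon_equipped or self.switching_weapon or not self.can_attack:
            return None
        step = self.attack_count
        self.last_step = step
        self.can_attack = False       # stops overlapping animations
        self.attack_count = step % len(self.primary) + 1  # wrap back to step one
        return self.primary[step - 1]

    def on_secondary_pressed(self):
        """Play the unique follow-up that matches the last combo step."""
        if self.last_step is None or not self.can_attack:
            return None
        montage = self.secondary[self.last_step - 1]
        self.reset_attack()
        return montage

    def on_combo_window(self):
        """Called from a montage notify: the next attack may now chain."""
        self.can_attack = True

    def reset_attack(self):
        """The reset custom event: back to the first attack."""
        self.attack_count = 1
        self.last_step = None
        self.can_attack = True
```

Gating every attack on `can_attack` is what stops a fast click from starting a new montage on top of the current one.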
I did this by creating a custom event that sets the character movement's ground friction to 0, followed by a Launch Character node. For the launch velocity I took the actor forward vector and multiplied it by a float, which is the amount to launch the player by; I set these amounts after each animation montage in the graph. I then added a new notify called launch to the player attack animations, placed just before each attack where the character's first foot touches the floor, and set it up in the animation blueprint as I did for the combo attacks. When I tested it, I could see the player move without any direction input: just by clicking the mouse, the player launched into the attacks. After getting this working, I duplicated it and did the same for jumping using the actor up vector node, and used it on certain attacks where it looked like the player was jumping into the attack. I then investigated other ways of adding unique features to the character. I added a dodge roll: when the character is not attacking, pressing the F key makes the character roll, so they can potentially avoid an attack from the enemy. I then added a camera shake, so that when the player uses the special attack the camera shakes to give the impression of a big hit. After this I added a zoom function that uses a timeline to change the camera's field of view from 90 to 70. I have not found a use for this yet, but I feel I will when I get to defence, by making the camera zoom in on a block.

For this part of the project I want to create an NPC that will sense me, then find and attack me using different ranges (close and far); depending on the range, it will decide at random which attack to use. I started off by duplicating the third person template so that I had a clean character to work on with all the basics set up, such as animation blueprints and blend spaces, which I can later change into what I want, as these are just placeholders.
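
The launch and zoom maths is simple enough to sketch. Assuming Unreal's axes (X forward, Z up) and yaw in degrees, the launch velocity is the actor's forward vector multiplied by the per-montage float, plus the up vector multiplied by a second float for the jumping attacks, and the zoom timeline is a linear blend from 90 to 70 degrees of field of view. `launch_velocity`, `zoom_fov` and the strength values are hypothetical.

```python
import math

# Hypothetical launch strengths, standing in for the float set after each montage.
LAUNCH_STRENGTH = {"slash_1": 600.0, "slash_2": 800.0}


def launch_velocity(actor_yaw_deg, forward_strength, up_strength=0.0):
    """Forward vector * forward_strength + up vector * up_strength (X forward, Z up)."""
    yaw = math.radians(actor_yaw_deg)
    forward = (math.cos(yaw), math.sin(yaw), 0.0)
    up = (0.0, 0.0, 1.0)
    return tuple(f * forward_strength + u * up_strength for f, u in zip(forward, up))


def zoom_fov(alpha, start_fov=90.0, end_fov=70.0):
    """Field of view at timeline position alpha (0 = start, 1 = fully zoomed)."""
    alpha = min(max(alpha, 0.0), 1.0)
    return start_fov + (end_fov - start_fov) * alpha


print(launch_velocity(0.0, LAUNCH_STRENGTH["slash_1"]))  # (600.0, 0.0, 0.0)
print(zoom_fov(0.5))  # 80.0
```

Because the launch uses the actor's own facing rather than the movement input, the player lunges forward even when no direction key is held, which matches what I saw when testing.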
Once I had the template, I opened it and added a Pawn Sensing component so the pawn can sense the player when he is near. I could also add hearing and set it up in the future, so that when the player walks or fires a weapon the NPC will know his last location. I then added a function that checks how far away the player is from the NPC: until the player gets within a certain distance, the NPC performs long-range attacks, and once the player enters that radius it switches to close-range attacks. I then added a random number between 0 and 4, so the NPC picks a number in that range, and set it up so that if the number equals 0, it performs the long-distance task. This makes the NPC run more and attack less. Then, to add randomness to the attacks, I added another random integer connected to a Switch on Int, so that the random number selects the attack.

Setting up the health

For the enemy I wanted to create health bars and make them visible to the player. Instead of having a default one at the top of the HUD, I thought it would be different to have an enemy health bar above the character that displays when the player is within a certain distance. I started off by creating a widget and laying it out on screen as I wanted, with text showing the enemy's name, which can be changed by making it an editable variable, and a progress bar that shows the enemy's health. After I created the widget, I placed it in the enemy blueprint above the head. To get the widget to display when the player enters a radius around the enemy, I created an interaction blueprint with three functions: on enter player radius, on exit player radius, and on interact, which will be used later when I create interactions such as QTEs. I then added a large capsule collision to the player character, which acts as the collision that displays the health bar.
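
The boss's decision logic can be sketched as one function. Assumptions: `CLOSE_RANGE` is a made-up radius in Unreal units, there are three attacks per range, and the random number is treated as an integer (Python's `randint` includes both ends); `choose_action` and the attack names are hypothetical.

```python
import random

CLOSE_RANGE = 300.0    # hypothetical switch-over radius
ATTACKS_PER_RANGE = 3  # hypothetical number of outputs on each Switch on Int


def choose_action(distance, rng=random):
    """Pick what the boss does each time the distance check runs."""
    if distance > CLOSE_RANGE:
        # Roll 0..4; only a 0 triggers a long-range attack, so the boss
        # spends most of its time chasing (run more, attack less).
        if rng.randint(0, 4) == 0:
            return f"long_range_attack_{rng.randint(0, ATTACKS_PER_RANGE - 1)}"
        return "chase"
    # Inside the radius, a second random integer drives the Switch on Int.
    return f"close_range_attack_{rng.randint(0, ATTACKS_PER_RANGE - 1)}"


rng = random.Random(42)  # seeded so a test run is repeatable
print([choose_action(1000.0, rng) for _ in range(5)])
```

With one winning number out of five, the boss chooses a long-range attack roughly one time in five at a distance, so the odds can be tuned just by widening or narrowing the range.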
In the enemy blueprint I implemented the new interaction functions and used them to show and hide the widget. In the character blueprint I used the begin and end overlap nodes, checked that the overlapped actor uses the interaction blueprint, and then called the function, which triggers the enemy blueprint to display the widget. Below is a video of the widget displaying the NPC's health.

Adding to the attacks

After setting up all the basic mechanics for my NPC, I wanted to extend the attacks so that when the NPC attacks, it damages the player and reduces the player's health with each attack. I started off by going through all the NPC animation montages and adding new enable and disable notifies for each hand and foot. I added the disable notify so that the collision starts off and is only turned on when needed. Once I had all the notifies set up, I created custom events in the enemy blueprint and attached them to the notifies in the animation blueprint. After this I went to the enemy blueprint, added four capsule collisions around the character's feet and hands, attached them to the sockets in the respective positions, and zeroed them out and placed them. In the NPC blueprint, for each custom event I referenced the matching capsule: on enable I enable its collision, and on disable I disable it. For each collision I then set up an on-overlap check to see whether it hit the player character, printing a string to show it has been hit. I wanted to add a visual cue for when the NPC hits the player. I looked into a few ideas, such as numbers floating on the screen or comic-book-style words like pow and pop, and I also looked into creating a HUD that flashes on damage. I then decided to create a sprite that shows when the character is hit. I started off by duplicating the fire particle effect and removing parts of it so that only the shockwave part of the effect shows.
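
The notify-driven hit boxes can be sketched like this. It is a simplified Python model, assuming actors are identified by plain strings and that a hit only counts against the player; `Hitbox` is a hypothetical class. I have also added a per-swing set of actors already hit, which plays the same role as a Do Once: one punch cannot register several times while the capsule stays inside the player.

```python
class Hitbox:
    """A hand or foot capsule whose collision is toggled by enable/disable notifies."""

    def __init__(self, name):
        self.name = name
        self.collision_enabled = False  # starts off, like the disable notify
        self.already_hit = set()        # actors hit during the current swing

    def enable(self):
        """Enable notify: a new swing starts with a clean hit list."""
        self.collision_enabled = True
        self.already_hit.clear()

    def disable(self):
        """Disable notify: the swing is over."""
        self.collision_enabled = False

    def on_overlap(self, other):
        """Return True only the first time the player is hit during this swing."""
        if not self.collision_enabled or other != "player":
            return False
        if other in self.already_hit:
            return False
        self.already_hit.add(other)
        return True
```

Clearing the hit list in `enable` rather than `disable` means each new swing can land again even if a disable notify was skipped because a montage was interrupted.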
I then went back to the player's blueprint, created a new custom event and added a Sphere Trace for Objects to check whether the player is in range, using the collision box's location as the trace location. I then used Break Hit Result to check whether the player is in the collision box, and if so, spawned the emitter at that location.

Dodge

After getting all the basic movements working on my NPC, I wanted to add little features to make the enemy more realistic. On my distance check I added extra pins to the close-range switch so that it can choose between 4 different dodges, and then changed the random probability so that it randomly chooses between attacking and dodging. This gives the player a bit of breathing space and also gives the enemy the option to go back to its chase / long-distance attacks.

Projectile

I wanted to create a projectile that can be thrown at the player. I created a new blueprint actor and added a sphere mesh and a collision box. I then added a rotating movement component and a projectile movement component, which make the sphere rotate and move towards the target location. I then went to the event graph, used the player location as the fire location, and used a collision overlap to check whether it hit the player: if it did, it spawns a blue particle effect, and if not, it spawns a red particle effect.

Future

I plan to improve this in the future, as I want the projectile to arc like a grenade rather than fire in a straight line, which would give the player a better chance to dodge the attack rather than instantly getting hit by it.

Debuffs

I then wanted to add debuffs to the character when the NPC uses certain attacks. I started off with a simple one where the enemy changes the speed of the player, which gives the impression that the character is dazed after a heavy punch. I used a custom event that lowers the character movement's speed and then, after a delay, restores it to its normal speed.
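
The daze debuff is a timed change to the walk speed. This Python sketch assumes a hypothetical `Movement` class with the current time passed in from outside (standing in for the Delay node), a default speed of 600 and a 50% slow; none of these numbers come from my project, and restarting the timer when a second hit lands is my assumption.

```python
class Movement:
    """Hypothetical stand-in for the character movement's max walk speed."""

    def __init__(self, max_walk_speed=600.0):
        self.default_speed = max_walk_speed  # the normal speed to restore to
        self.max_walk_speed = max_walk_speed
        self.restore_at = None               # time when the daze wears off

    def apply_daze(self, now, slow_factor=0.5, duration=2.0):
        """Slow the character and schedule the restore (the Delay node)."""
        self.max_walk_speed = self.default_speed * slow_factor
        self.restore_at = now + duration     # a second hit restarts the timer

    def tick(self, now):
        """Restore the normal speed once the delay has passed."""
        if self.restore_at is not None and now >= self.restore_at:
            self.max_walk_speed = self.default_speed
            self.restore_at = None
```

Storing the default speed once, instead of reading the current speed when the debuff starts, means a second hit during the daze cannot make the slowed speed the new normal.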
Future

To improve this, I could add a post-processing effect to create a dazed or spinning look. I could do this by adding a post-processing effect to the character blueprint and setting its visibility while the debuff is active.

The second debuff I added was a burn effect, which I wanted to apply when the plasma bomb hits the player. I added a new collision box to the character blueprint, then added a custom event that sets the collision box visible and spawns an emitter attached to it; after a delay for how long I wanted it to be visible, the event hides the collision box again. I then created a new blueprint actor for the fire debuff containing a collision box and a fire particle effect, with simple code that displays the fire when the collision box is overlapped. This spawns the fire if it hits any object or the player; if it hits the player, the fire spawns on the player's back. I then went to the projectile and added a custom event that spawns the fire debuff blueprint at the location of impact. Below is a video of the above added to the enemy.

For the minion I started off by creating a new character blueprint and adding a character created and animated in an earlier unit. I then added a sensing node so that when the player is within its radius, it triggers the behaviour tree. The behaviour tree uses a selector node that chooses between two branches: if the minion doesn't see an enemy, it finds a random point, moves to it and waits. The other branch is a selector node that checks the distance: if a certain distance is reached, the minion moves back to its start position, and the child branch underneath runs the distance check and, if it sees the player, launches the attack and follows the player.
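
The minion's behaviour tree can be sketched with the two composite node types it relies on: a selector succeeds at the first child that succeeds, and a sequence fails at the first child that fails. This Python model uses a plain dictionary in place of the blackboard; the key names, the `LEASH` distance and the action names are hypothetical, and real Unreal tasks can also be "in progress", which this sketch leaves out.

```python
class Selector:
    """Succeeds as soon as one child succeeds (children tried in order)."""

    def __init__(self, *children):
        self.children = children

    def tick(self, bb):
        return any(child.tick(bb) for child in self.children)


class Sequence:
    """Fails as soon as one child fails (children run in order)."""

    def __init__(self, *children):
        self.children = children

    def tick(self, bb):
        return all(child.tick(bb) for child in self.children)


class Condition:
    """Decorator-style check against the blackboard."""

    def __init__(self, predicate):
        self.predicate = predicate

    def tick(self, bb):
        return bool(self.predicate(bb))


class Action:
    """Task stand-in: records what the minion would do and succeeds."""

    def __init__(self, name):
        self.name = name

    def tick(self, bb):
        bb["action"] = self.name
        return True


LEASH = 1500.0  # hypothetical max distance from the start position

minion_tree = Selector(
    Selector(  # combat branch
        Sequence(Condition(lambda bb: bb["distance_from_start"] > LEASH),
                 Action("return_to_start")),
        Sequence(Condition(lambda bb: bb["can_see_player"]),
                 Action("attack_and_follow")),
    ),
    Action("move_to_random_point_and_wait"),  # wander when nothing else applies
)
```

Because the leash check sits first in the combat selector, the minion gives up the chase as soon as it strays too far from home, even while it can still see the player.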
I then created a blackboard and added various variables: some are set in the character blueprint to trigger the behaviour tree, and the others are used by the service, task and decorator blueprints that make up the tree's branches and check conditions. The main behaviours of the tree are: attacking the player on sight or when hit; returning to its starting position once a certain distance between the player and the NPC is reached; wandering around its start location using location variables; and finally triggering the death animations.

Task 2 - Damage and Death

In this task I want to create how the player damages the boss and how the player receives attacks from the NPC. I started off by going to the player animation blueprint and creating a new state, in which I want to have multiple death animations. I added all the death animations to the state, added a Blend Poses by int node, and promoted its index to an integer variable. I then went to the event graph and set this variable from a Random Integer in Range, so that an animation is selected at random. I then created a Boolean variable called death in the character blueprint, repeated the same in the character animation blueprint, and set it up so that when the player dies, the character blueprint sets the animation blueprint variable. I then used this variable as the condition on the state, so that when it is triggered, the animation plays. To trigger damage from the player's weapons, I followed the same process I used for the NPC: I added a collision box to each weapon and added notifies to the animation montages to enable and disable the collision box. I then added a min and max damage to each attack and put in numbers to suit each attack. Finally, I added a begin overlap for each weapon collision box that calls Apply Damage with a Random Float in Range between the attack's min and max damage.
I then added an Event AnyDamage, which takes health from the player when damage has been applied to the character. I ran a condition to check that it is the character and then took the damage from the armour's current value; when the armour reaches 0, damage starts to come off the health, and when the health reaches 0, it triggers the death variable. I don't want the shield to deal damage but to absorb it. To implement this, I added a condition in the damage-taken nodes before the character's health or armour is reduced: it checks whether the player is blocking and then whether the player's stamina is above 0; if not, the damage is taken from the player's health and armour. I then call a custom event that plays an animation montage of the player being hit or knocked back and spawns a hit sprite as a visual cue. Each hit subtracts the damage dealt from the stamina until it reaches 0, at which point the damage is taken from the player's health or armour instead. Below is a video of the shield taking damage.

Ultimate Attacks

To add to my damage system, I want to create 3 ultimate attacks, one for each mode the player can be in: a whirlwind effect for the shield, a high-damage attack for the two-handed sword, and a healing mode. I started off by creating a new actor blueprint, adding two baton meshes to it and using the character as a reference; I then increased the batons' size and added collision boxes to them. I then added a rotating movement component to make them spin, and after this I removed the character reference. I then made a weapon trail using a particle effect, changing the colours and making sure no velocity was added to the particles, and tested it out. I then added a particle effect to the animation montage to show when the attack is active and when to hide and show the batons.

Two Handed Sword

I then repeated the same process for the two-handed sword attack.
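
The damage flow above can be summarised in a short Python sketch. Assumptions to flag: while blocking with stamina left, the whole hit is absorbed by stamina (nothing spills over); armour damage that goes past 0 carries over into health; and the starting values are made up. `Vitals`, `roll_damage`, `pick_death_animation` and `take_damage` are hypothetical names for the blueprint variables and events.

```python
import random
from dataclasses import dataclass


@dataclass
class Vitals:
    health: float = 100.0
    armour: float = 50.0
    stamina: float = 100.0
    blocking: bool = False
    dead: bool = False  # the death Boolean the animation blueprint reads


def roll_damage(min_damage, max_damage, rng=random):
    """Like Random Float in Range between an attack's min and max damage."""
    return rng.uniform(min_damage, max_damage)


def pick_death_animation(count, rng=random):
    """Index for Blend Poses by int, chosen at random."""
    return rng.randint(0, count - 1)


def take_damage(v: Vitals, damage: float) -> str:
    """Route incoming damage: shield stamina (if blocking), then armour, then health."""
    if v.dead:
        return "ignored"
    if v.blocking and v.stamina > 0:
        v.stamina = max(0.0, v.stamina - damage)  # shield absorbs the hit
        return "blocked"
    from_armour = min(v.armour, damage)
    v.armour -= from_armour
    v.health = max(0.0, v.health - (damage - from_armour))  # overflow hits health
    if v.health == 0.0:
        v.dead = True
        return "dead"
    return "hit"
```

Returning a result string keeps the follow-up (hit montage, shield spark, death state) in one place, much like branching on the outcome after the damage nodes.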
I used the player character as a reference and scaled up the sword, then removed the physics so that when it spawns it will drop to the floor. I added a collision box around the sword.

Special Attack

For the last ultimate power-up I wanted to create a health buff that drains the NPC's health and replenishes the player's health. I started off by duplicating the fire particle effect, removed everything except the embers, and changed the colour to more of a healing colour. I then created a new ultimate animation that looks like casting a healing spell, and added particle effects to its animation notifies to play the healing effect. I then created a custom event in the character blueprint that uses the min and max damage to add health to my player when it is taken. I then used Booleans to activate this custom event, checking whether any other skill is active and whether the player has more than 30 stamina; if so, it removes 30 stamina and enables the healing spell.

Evaluation

Overall, I enjoyed unit 52, making a character come alive in the game engine. The complete process was interesting: learning how to take my character, add animation to it, and then call these animations to perform certain actions. I also learned how to do this using a state machine with conditions, and using montages that are called from the character blueprint.
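
The healing ultimate's checks and drain can be sketched as one function. The 30 stamina cost and the "more than 30" check come from the description above; the `Fighter` class, the health values and capping the heal at the player's maximum health (and at what the NPC has left) are my assumptions, and `try_cast_heal` is a hypothetical name.

```python
import random
from dataclasses import dataclass

HEAL_COST = 30.0  # stamina cost from the description


@dataclass
class Fighter:
    health: float
    max_health: float = 100.0
    stamina: float = 100.0


def try_cast_heal(player, npc, min_amount, max_amount, other_skill_active, rng=random):
    """Drain the NPC and heal the player; return the amount moved (0.0 if not cast)."""
    if other_skill_active or player.stamina <= HEAL_COST:
        return 0.0  # needs more than 30 stamina and no other skill running
    player.stamina -= HEAL_COST
    # Random amount between min and max, but never more than the NPC has left.
    amount = min(rng.uniform(min_amount, max_amount), npc.health)
    npc.health -= amount
    player.health = min(player.max_health, player.health + amount)
    return amount
```

Doing both checks before touching any values means a failed cast never costs stamina.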

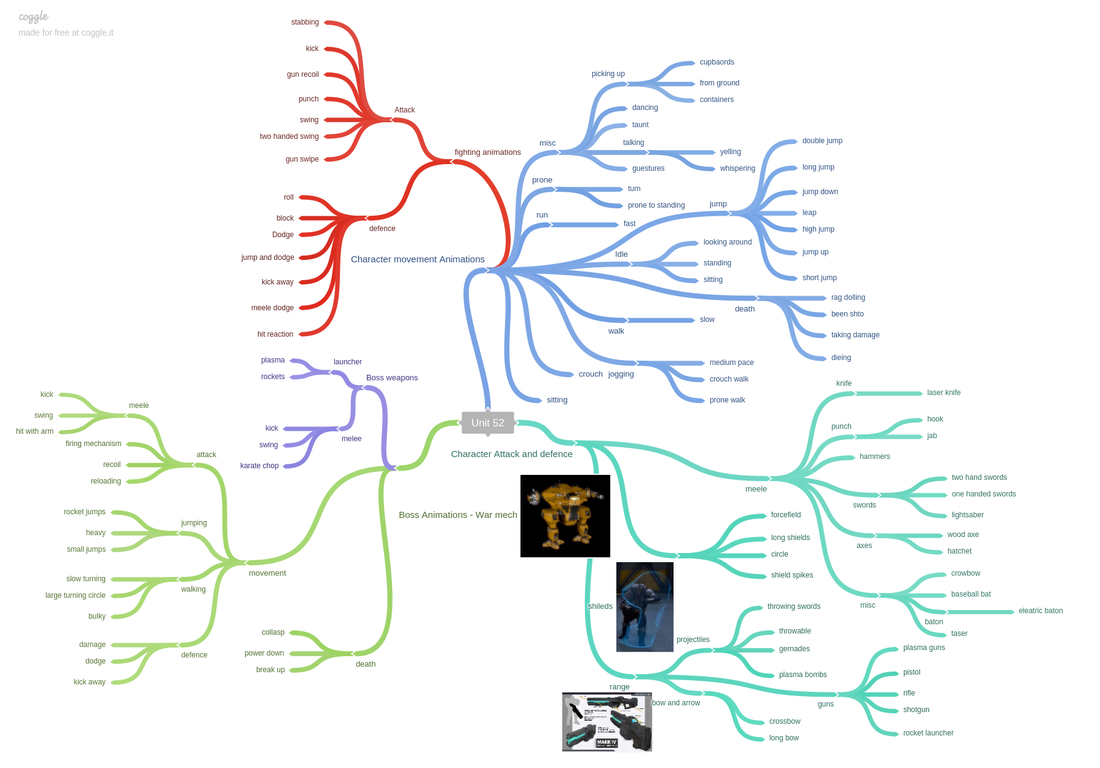

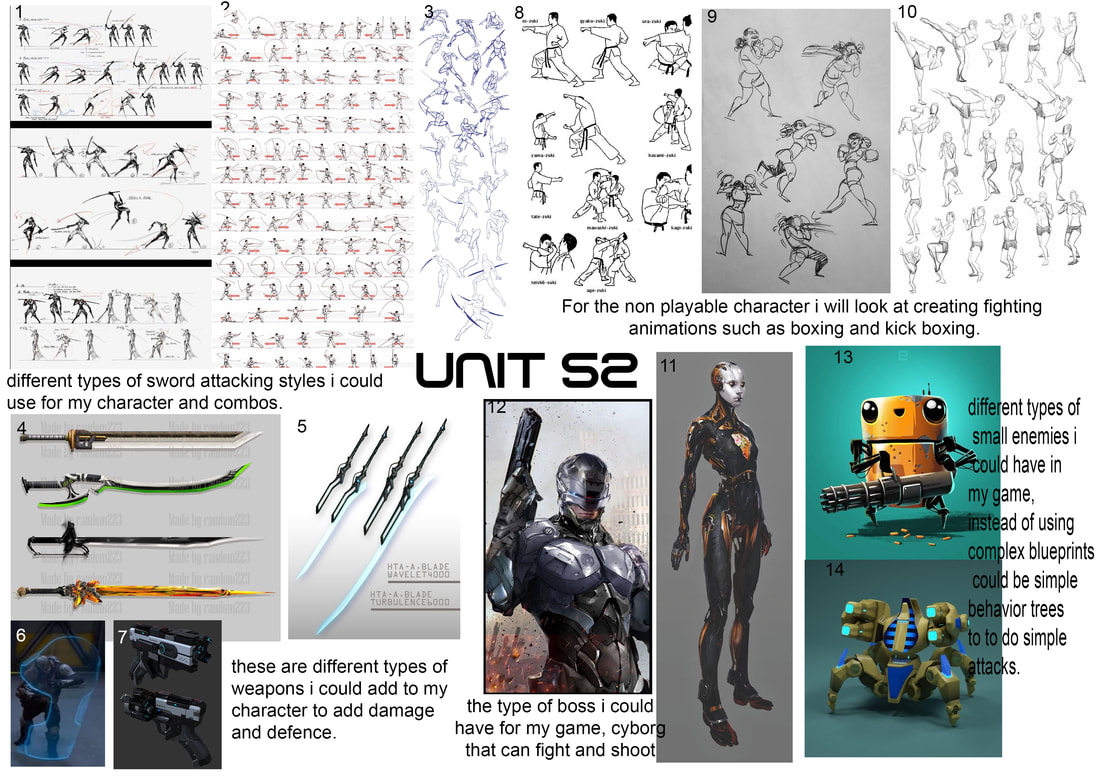

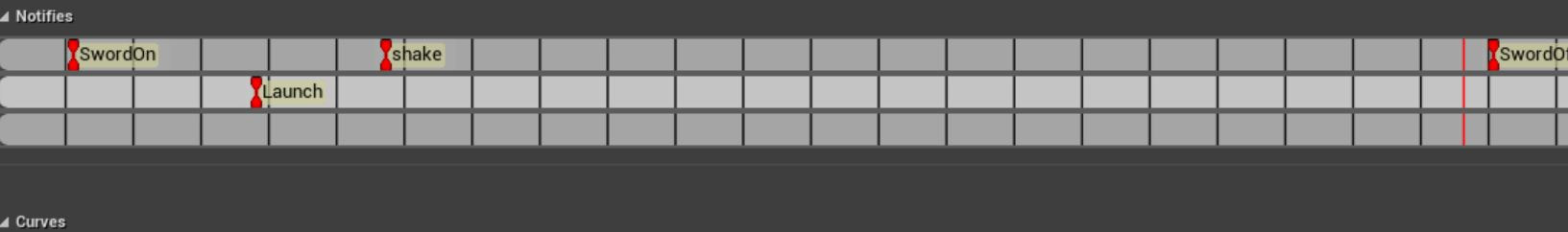

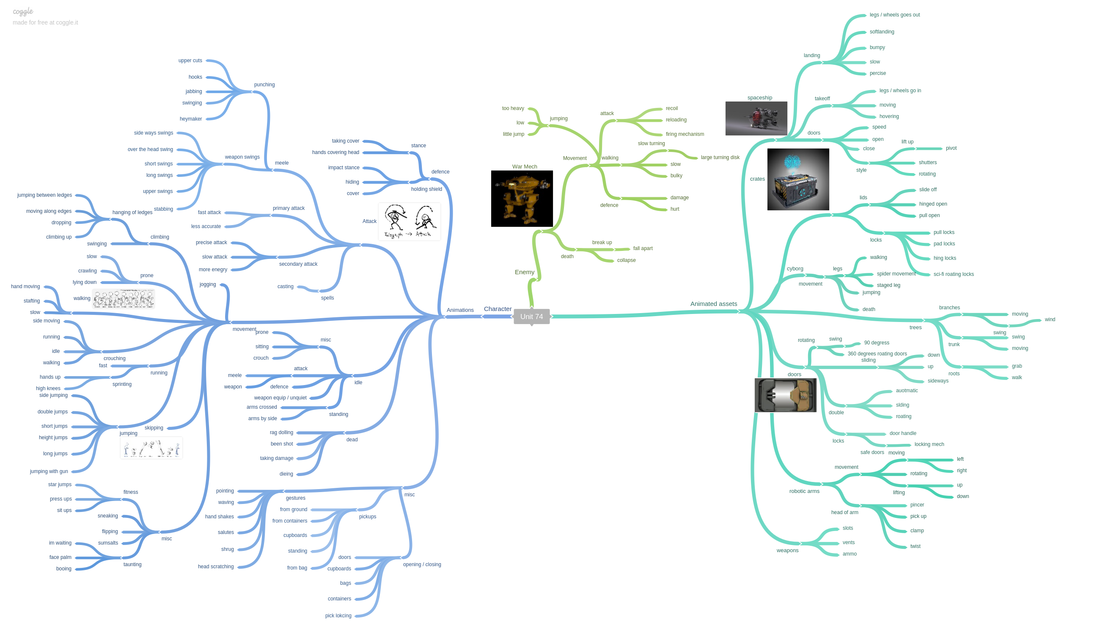

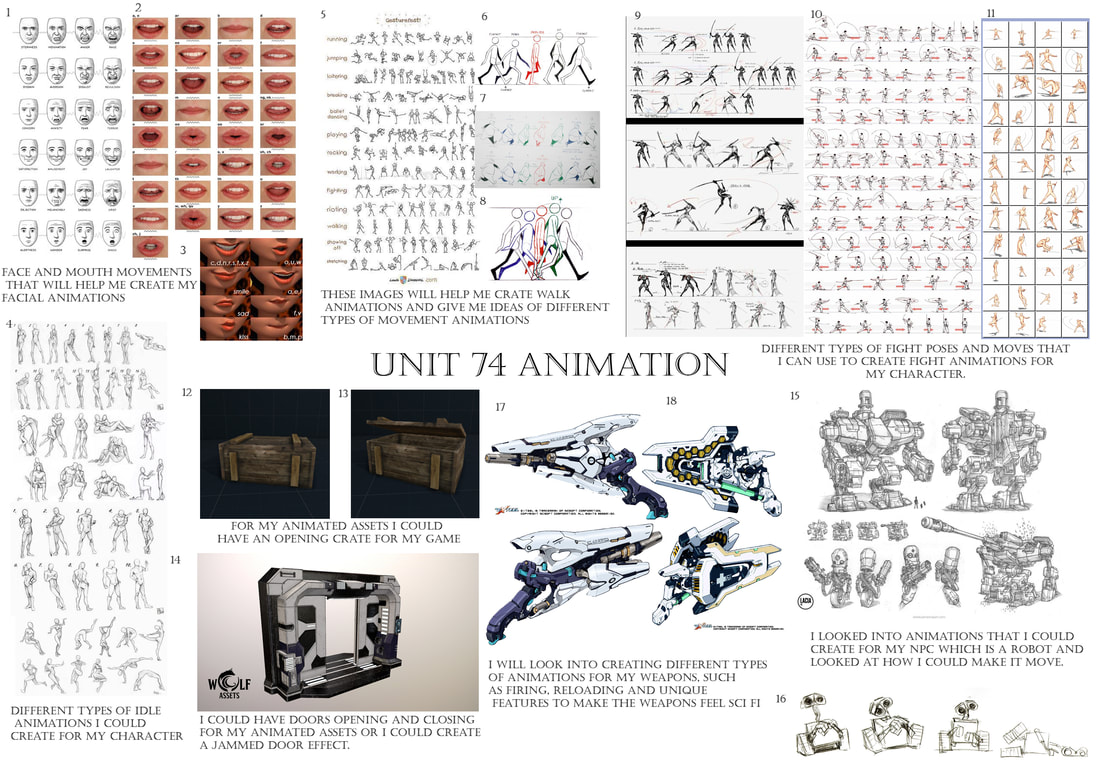

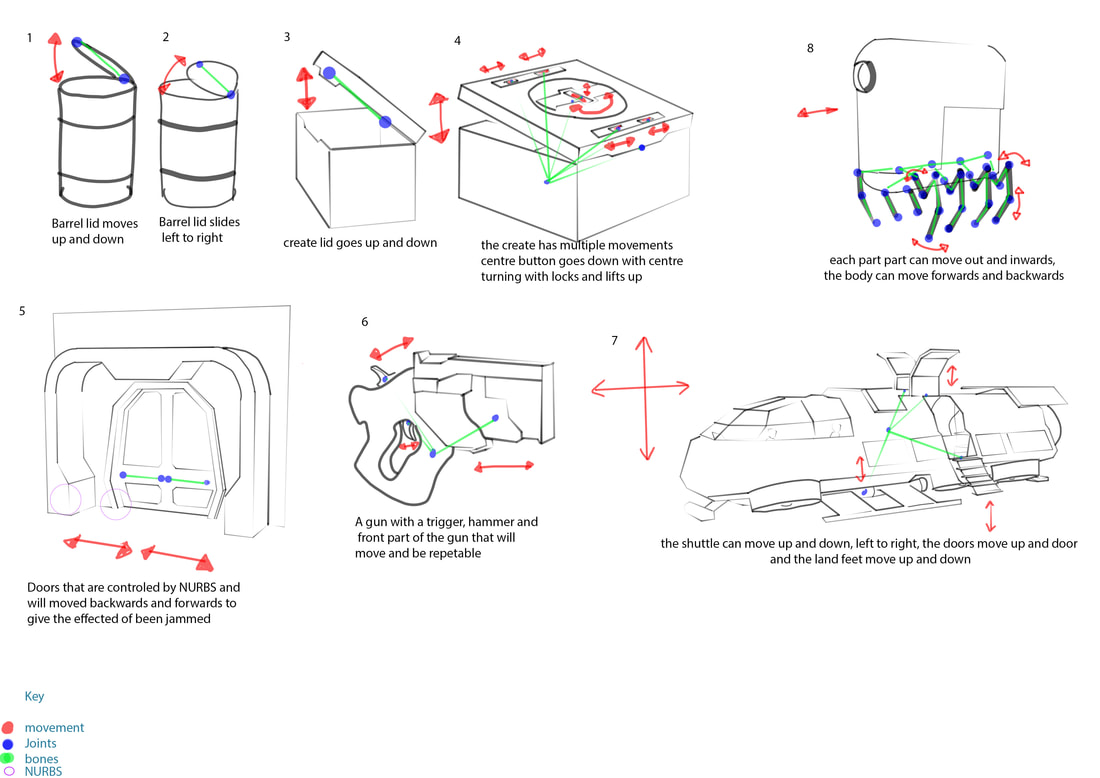

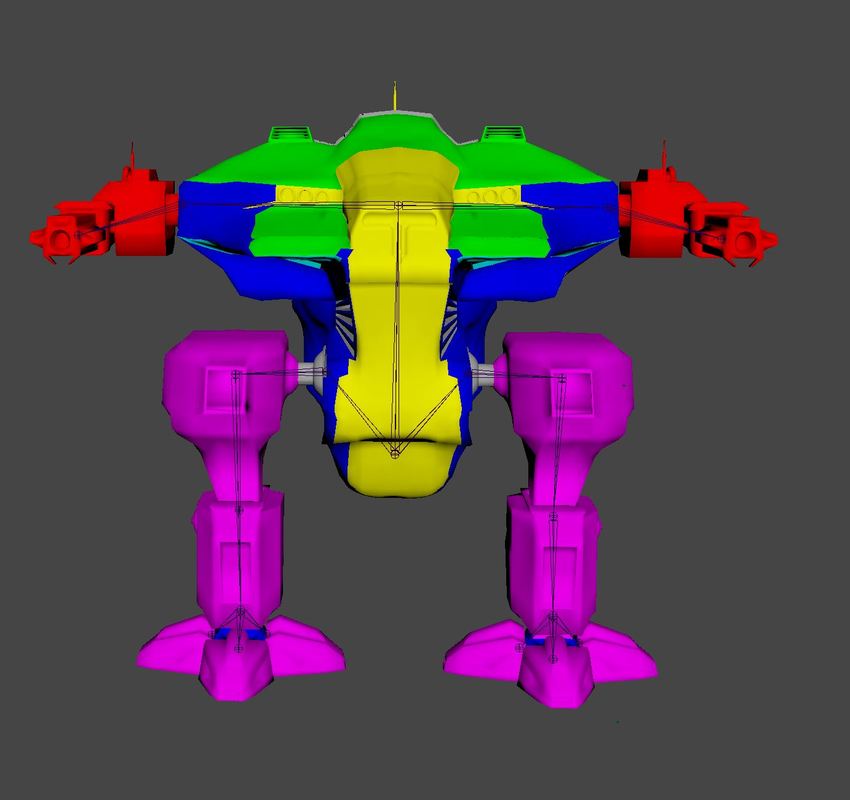

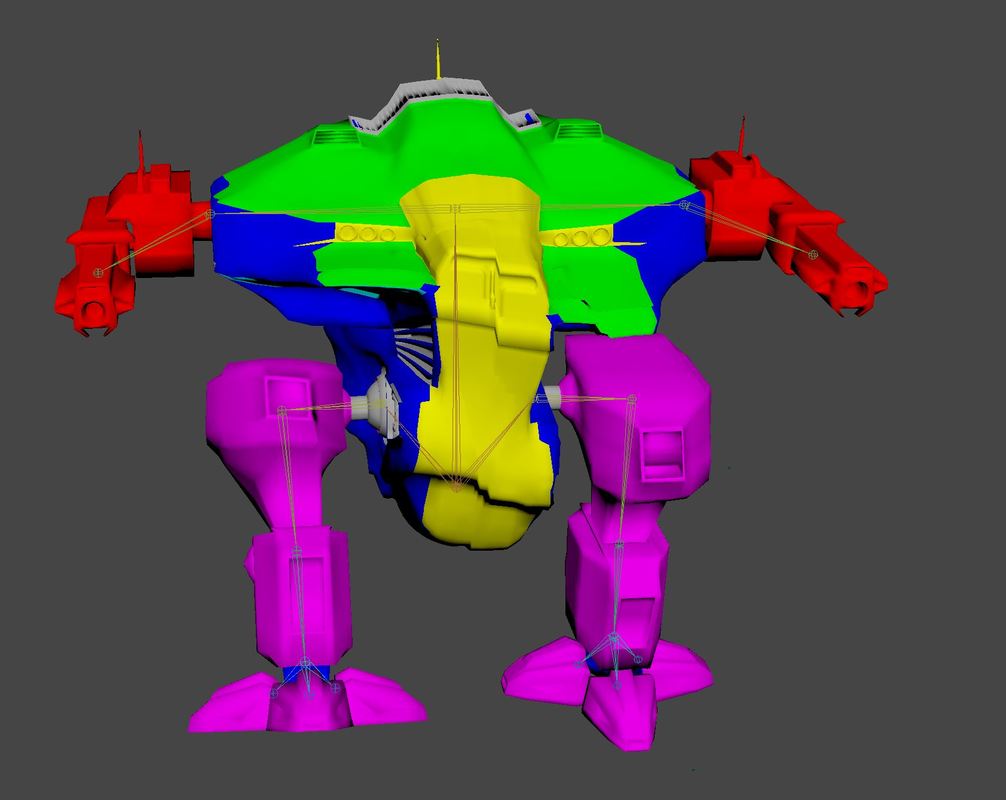

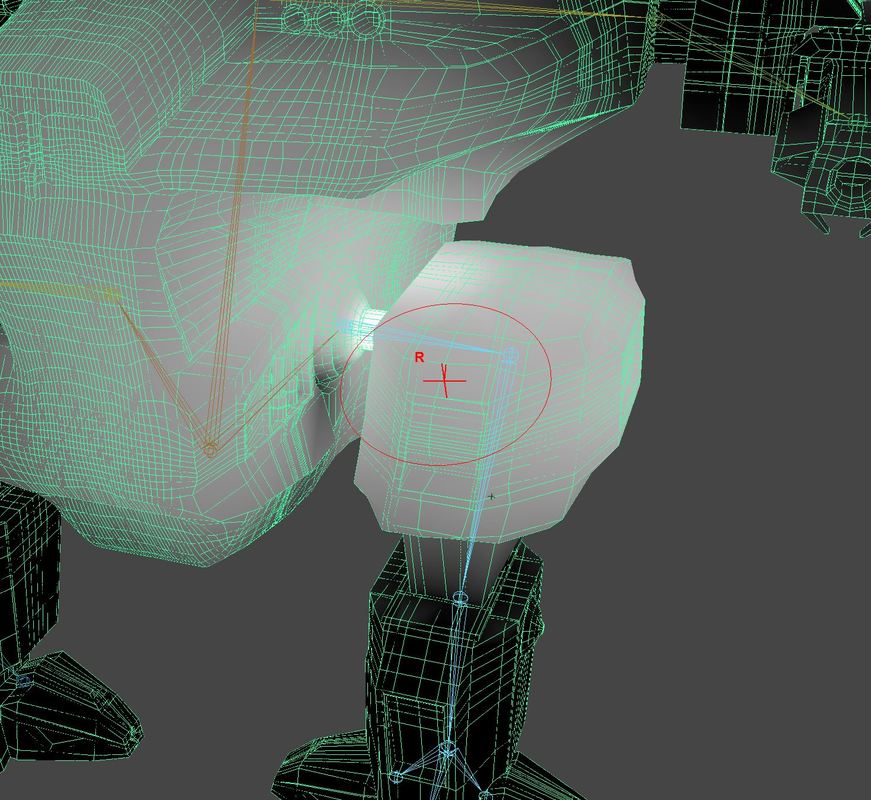

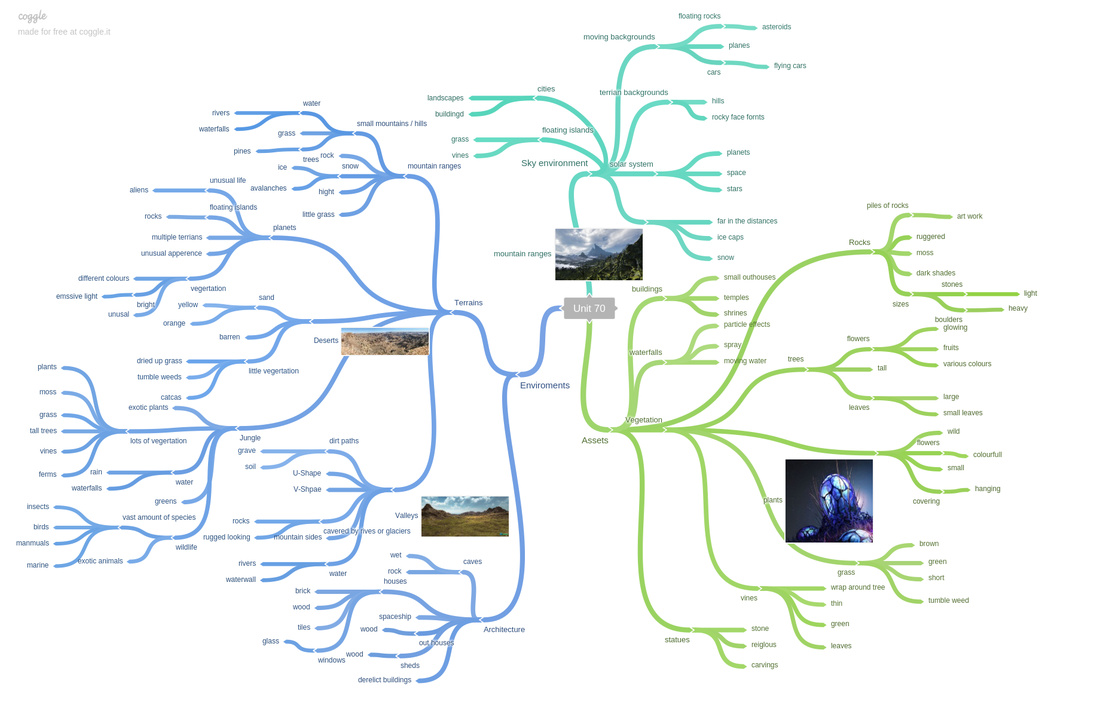

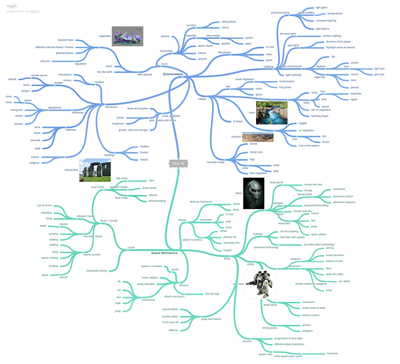

Overall, I felt the first part of the project went quite well. For this task I created a mind map and mood board and looked into creating a flow chart for the states in my character animation blueprint. I also did some research to understand how this worked. For this task I have no negative points. I made a few changes to what I created in my state flowchart; these were simple changes that I either didn't know about or could have researched more. I also added more states to the animation blueprint than I had originally planned, but this was down to adding extra features to the character. Overall, I felt setting up the character implementation was the big task of this project: I had to create both the character and NPC blueprints and then add damage to both, so that I could battle the NPC and he could battle me too. For the character, I feel the whole implementation went well. I was able to add the UE4 skeleton to my own character, import it into the engine and add Marketplace animations to this skeleton, although I had to go between UE4 and Maya a few times to get the painted weights working. I had no issues creating the states for my character, and I feel the flow of the character changing from the run blend space to the sword and shield and long sword blend spaces worked well. I had a few issues with equipping and switching weapons, which I fixed by adding extra conditions and checking which weapon was being used; it took some time, but I fixed the issue. I also added a combo system. This was straightforward once I had figured out how to use the notify system in the animation montage, which let me call events that trigger these animations from the character blueprint. I had a few issues with overlapping animations, but this was an easy fix: I put in a delay to let each animation finish before starting the next combo step.
I also had issues with the player going back to the equipping weapon state, which took some time to figure out, as it would play this animation at the end of every combo. I ended up fixing this by adding an extra condition to the state. I had problems blending the attack animations with a walking animation: I wanted the upper body to attack while the lower body walks. I was unable to do this, so I used a different system that launches the player forwards when attacking to give that impression. This is something I will look into improving in the future, as I feel it is standard in most games now. The next thing I feel didn't go too well was blocking. I had a lot of issues getting the player to enter and exit blocking and encountered a lot of gliding, which was down to the animation states. I feel this could also be done better, and I don't like how the whole system works. I was able to implement a basic damage system using collision boxes that are turned on when an animation notify is triggered from the character blueprint. I feel this is an old way of doing damage, but it is quick and simple. I am going to look into newer methods of applying damage via the animation montage by creating a custom notify. Overall, I think the NPC turned out well: I was able to create a custom boss using blueprints that uses a range checker to decide which attack to use, and I felt this worked really well in the game. I am also pleased with how the minions came out, as they use a different system from the boss, behaviour trees. They need a little work on how they react when the player attacks them, but this is minor work changing variables. I had a few issues setting up the collision boxes for damage: as this was a punching enemy, it would damage the player multiple times per hit. I fixed this using a Do Once, but I will look into the custom notify method above for this damage too.
I also feel the grenade doesn't work how I want it to: it behaves more like a homing missile, and I want it to give the player a better chance of dodging. I really like how the NPC looks after upgrading it to 4.19 and using the Paragon add-ons to create an AAA game boss that taunts the player and uses particle effects on its attacks.

Conclusion

Overall, I feel unit 52 went extremely well. I have learned a lot from this unit about how to implement a character, and it has taught me a lot about the engine and how to use its new features. After doing this unit and learning how to create a custom character and implement all the animations and attacks, as well as the boss, I feel it is something I could be interested in doing as a job in the industry. It has also taught me how to problem-solve, and I feel this has improved a lot: when I was stuck, I would try different methods to get past the problem, and if this did not work, I would research the problem to see if I could find a fix.

Future improvements

- Two blend spaces – legs walking with upper body attacking
- Notify damage in the animation montage
- New blocking system
- Improve the grenade – less homing missile like

The purpose of this unit is to understand how to create animations for characters and assets using Maya, look into rigging a character, and then create a lip sync video. Task one looks into creating different types of animated assets, and task two is creating lip sync and character animations.

Pre-production. Start date: 25/11/17. Completion date: 1/12/17. I have given myself a week for pre-production such as mind maps and mood boards, animation research, and looking into how to rig and animate characters.

Animated assets. Start date: 30/11/17. Completion date: 4/12/17. I have given myself just under a week to create 8 animated assets. This should be a quick process, as all the models are pre-made.
Lip sync. Start date: 5/12/17. Completion date: 21/12/17. I have given myself just over 3 weeks to create a lip sync video based on a video from YouTube. This will take a large portion of the time frame, as copying the actions from the video is a big task.

Character Rigging. Start date: 28/12/17. Completion date: 3/1/18. I have given myself a day to complete a character rig so that I will be ready to animate.

Character Animations. Start date: 3/1/18. Completion date: 12/1/18. I have given myself 10 days to complete 5 character animations.

The timeline for this project takes the Christmas holidays into account and has been fitted around them, which makes this task schedule tighter.

Pre-Production

In this blog post I will look into ideas for the animations I want to create for my character, such as movements like walk cycles, and then consider animated assets I could use, following my unit 75 brief. I will then create a mood board to get visual ideas of how to animate the movements of my characters and assets. After this I will produce digital sketches highlighting bones, NURBS handles and movement paths. I started off by creating my mind map, considering character movement animations and general animations I could use for my character. I then looked into different types of animated assets I could use in my game, and I quickly looked into the animations I could create for my boss NPC. Below is the mind map I created:

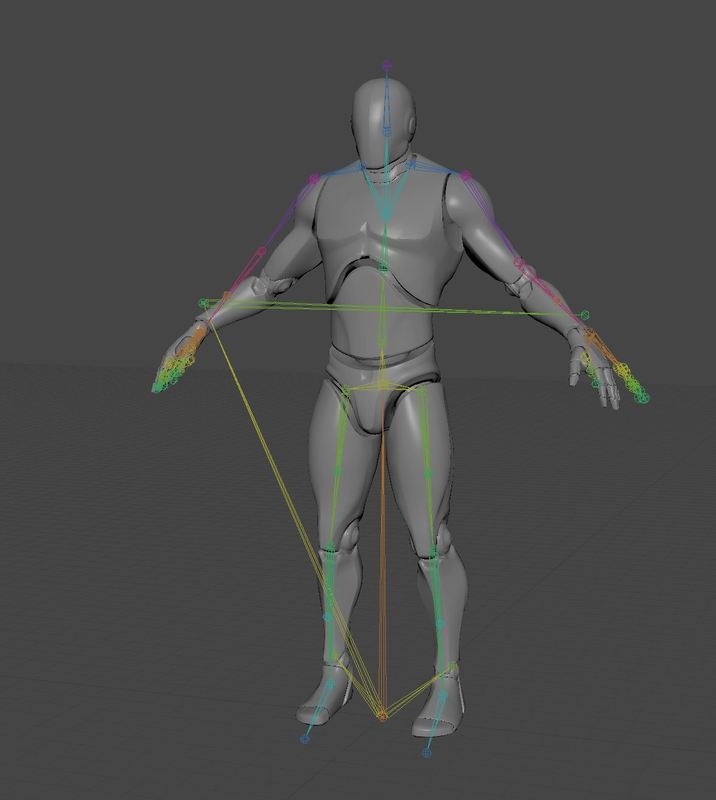

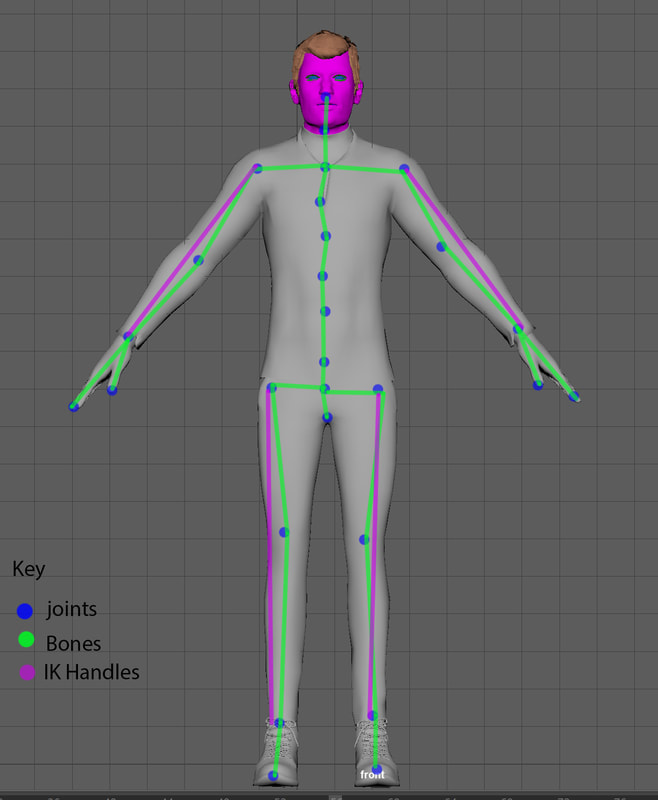

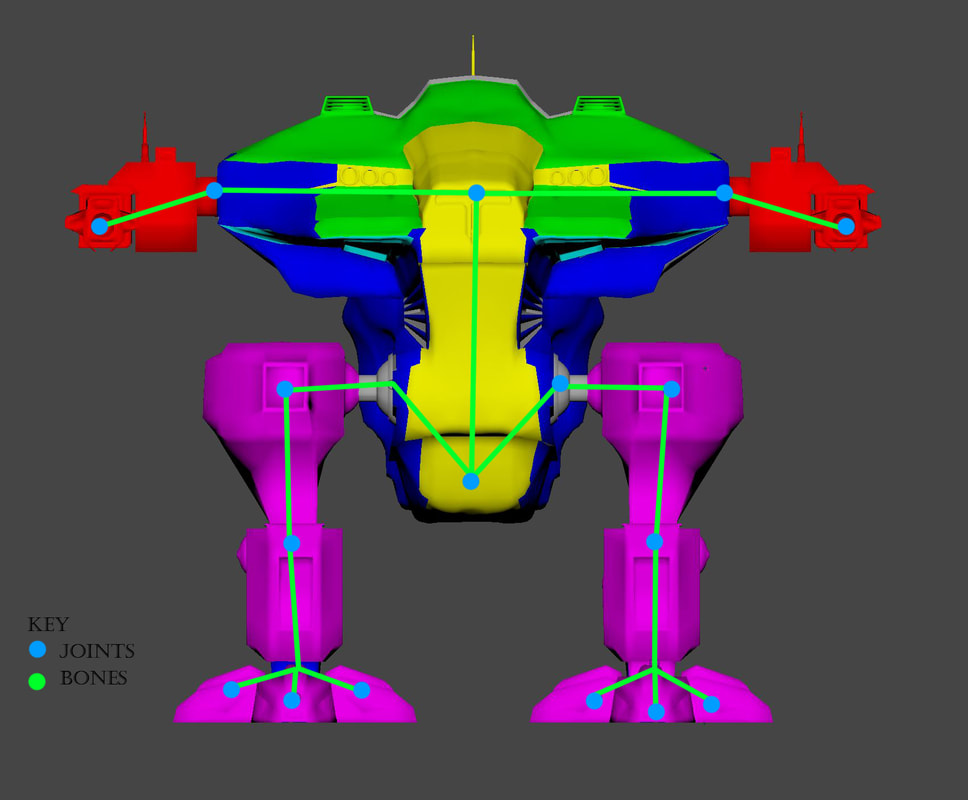

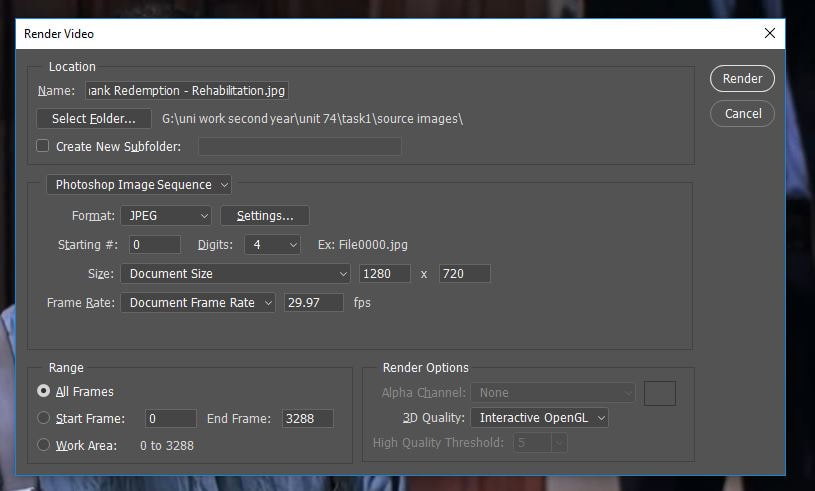

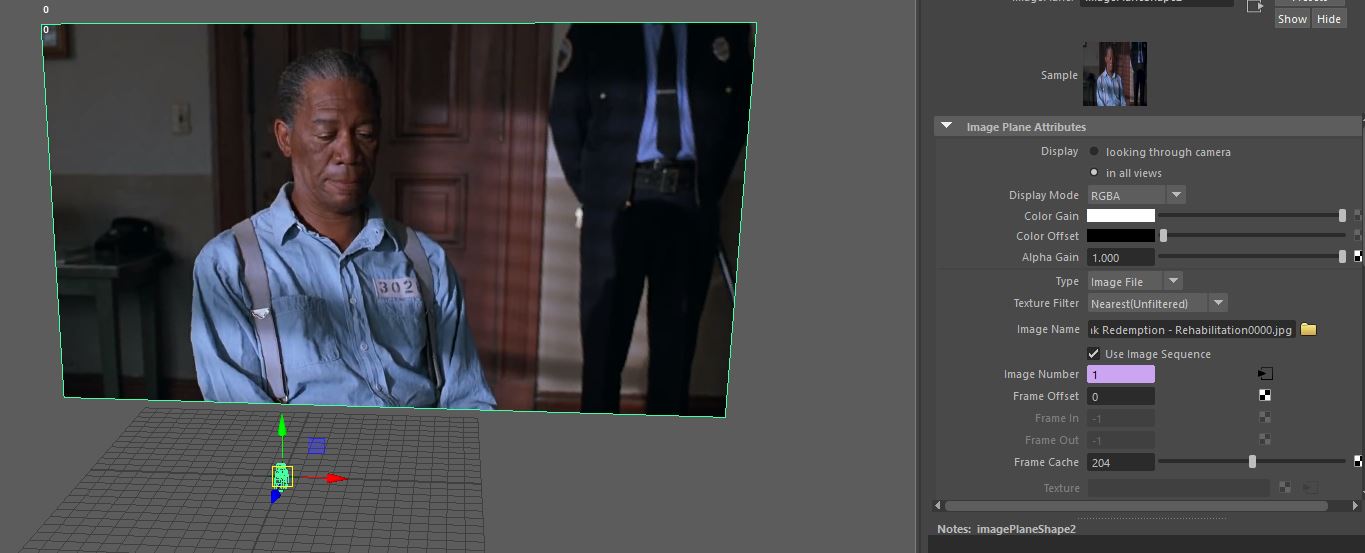

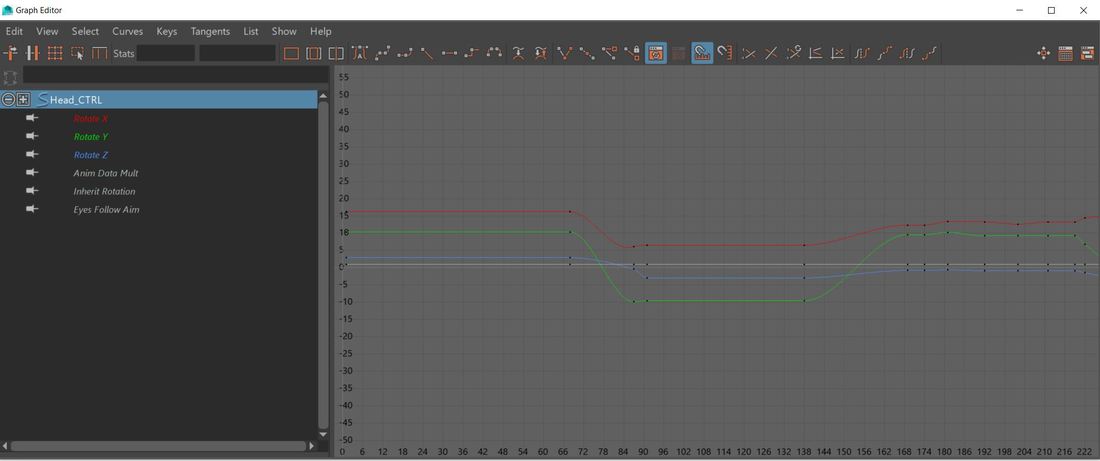

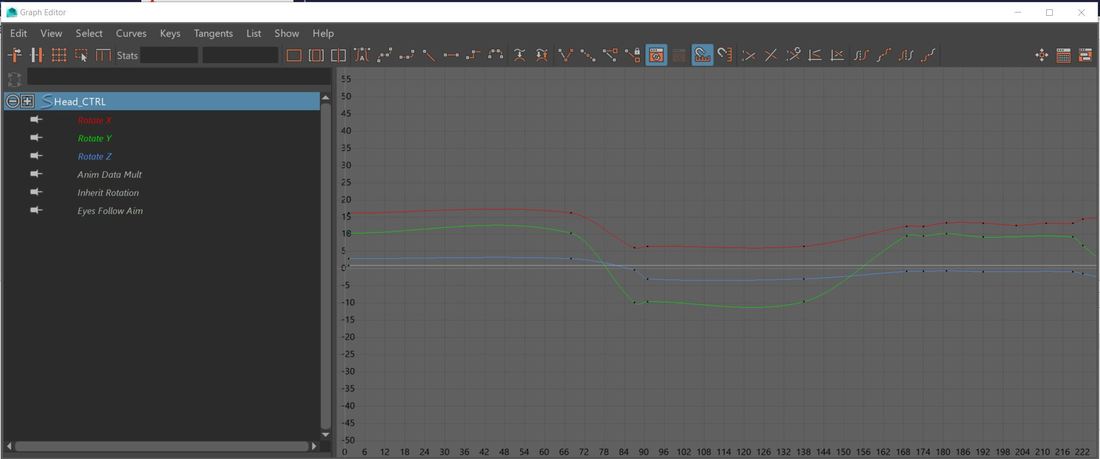

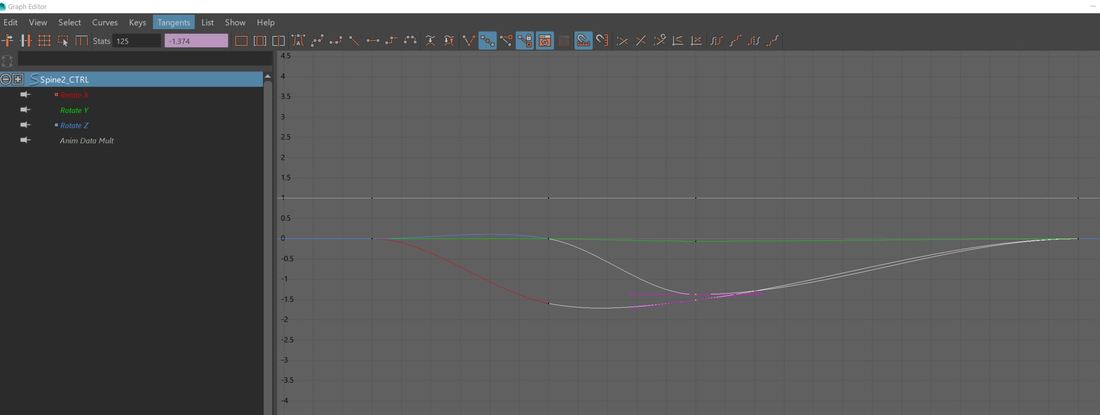

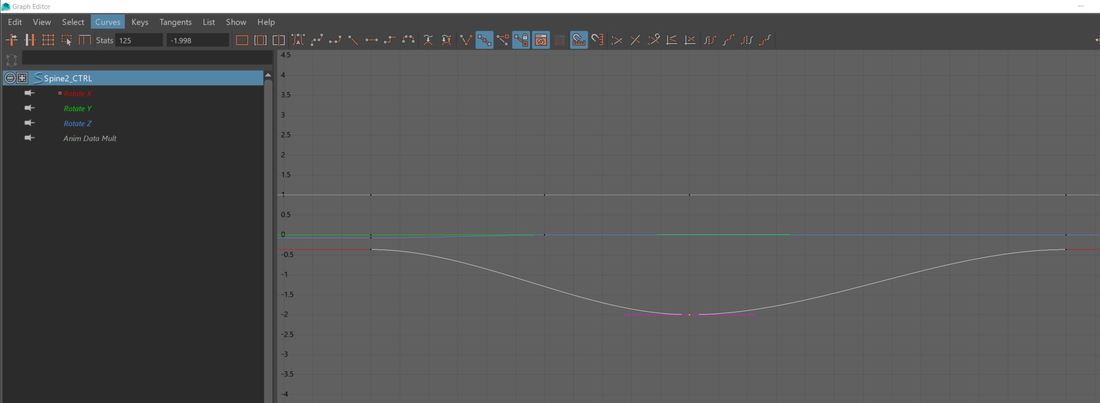

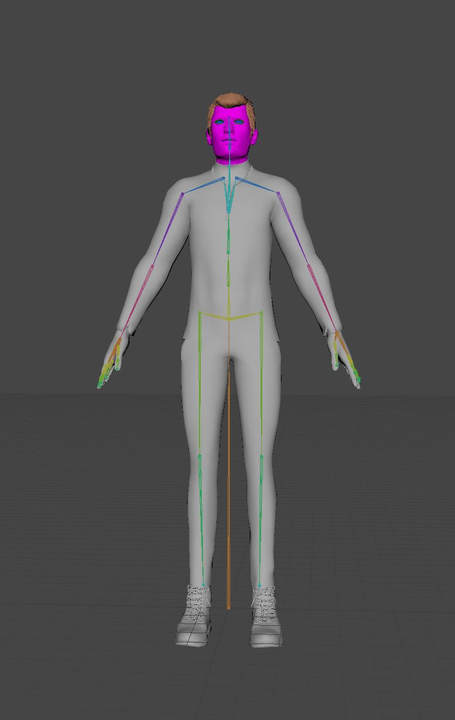

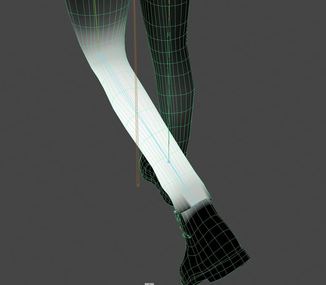

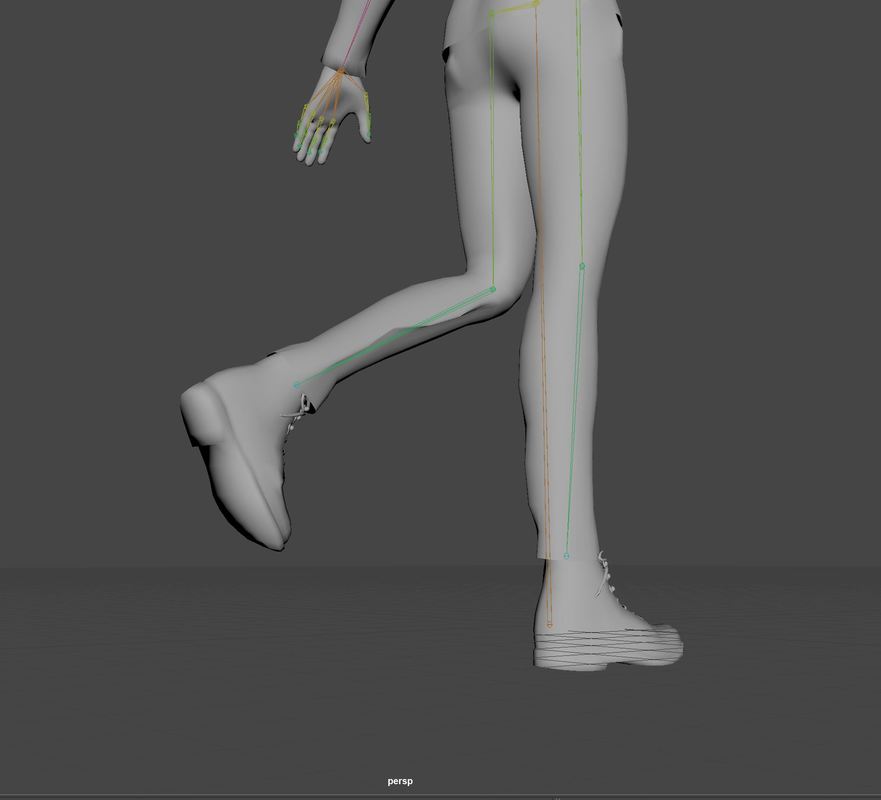

After getting my ideas down on paper in the mind map, I wanted some visual references to help with my animation. I looked into walk cycles, facial expressions, idles and movements, and fighting, and also looked into some ideas for my animated assets. I then drew sketches of the assets that I plan to turn into animated assets. Once I had the basic outline of the assets, I looked into where I would place joints and bones and how each asset would move, so that I had a visual representation. I then repeated the process for my character's bone structure.

Task 1 - animated assets

Another part of task one was to create animated assets. For this, I wanted to start by learning how to add joints and then make each asset more complex than the last.

For my first asset, I wanted to learn how to use keyframes, so I started by creating a simple box with a lid. I moved the pivot point to the edge of the lid, then pressed the S key to set a key at frame one. I then moved the lid to the side and downwards, and set another key once the lid was in the place I wanted. I created a short video of how this animation looks:

For the second asset, I decided to use a joint. I wanted to create a barrel opening, using the same process as asset one but keying the movement on the joint. Below is a video of the asset rotating:

Then I did the same barrel but with the lid sliding off.

For the next asset, I wanted to add controls to make it easier to select the joints. Below is a video of the asset rotating:

For my next asset, I wanted a more complex joint system, using a root bone with multiple joints moving and rotating. Below is a video of the asset:

For the next asset, I wanted to create jammed doors, so I edited the graph to create a more staggered effect.

For the next asset, I wanted to combine the graph and multiple joints to create a weapon firing.
Below is a video of the asset:

I then created another, smaller weapon so that I had two different types of weapons.

For the next asset, I wanted to create a long animation that combines movement, multiple joints and the graph.

For the next asset, I wanted a more complex joint system. As it has a complex skeleton, I had to learn to paint the weights of the mesh to stop deformations.

Task 1 - lip sync

For my lip sync, I wanted to create one using a character I made, keying each of the facial movements by hand inside Maya, and then look into using a camera to capture facial movements that are then transferred onto a 3D character.

I started by creating a character that I can use inside Maya. I created my character using Adobe Fuse and then uploaded it to Mixamo to get a skeleton and facial blend shapes. I then opened this inside Maya and used the Mixamo facial rig plugin on the character to add NURBS controllers to the face and body so that I can control the animation and set keys.

I then found a video on YouTube that I wanted to recreate. One of my favourite films is The Shawshank Redemption, and I decided to do Morgan Freeman's famous speech at his parole hearing about rehabilitation. The clip is around 2 minutes 30 seconds long, but when broken down, the time Morgan Freeman is actually talking is just over 60 seconds. I plan on making a scene in Maya, exporting a render of my character talking as Morgan Freeman, and then editing the two clips together in editing software to make a seamless video.

I started by opening my video in Photoshop. I only wanted the main part where Morgan Freeman is talking, so I cut the clip down to just that part. I then went to Export, selected Render Video and chose Image Sequence in the drop-down box. This breaks the video up into one image per frame. I then exported this and got over 3000 images from the video, which is equal to about 1 minute 30 to 2 minutes depending on playback speed.
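As a rough check on that estimate, the playback length of an image sequence is the number of frames divided by the frame rate. The sketch below assumes common rates of 24, 25 and 30 fps, since the clip's actual frame rate isn't stated; the helper names are hypothetical.

```python
def frames_to_seconds(frame_count: int, fps: float) -> float:
    """Return the playback length in seconds of `frame_count` frames at `fps`."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return frame_count / fps


def format_minutes(seconds: float) -> str:
    """Format a duration in seconds as M:SS, rounded to the nearest second."""
    total = round(seconds)
    return f"{total // 60}:{total % 60:02d}"


if __name__ == "__main__":
    frames = 3000  # "over 3000 images" from the image-sequence export
    # Assumed frame rates; the real rate of the source clip is not given.
    for fps in (24, 25, 30):
        secs = frames_to_seconds(frames, fps)
        print(f"{frames} frames at {fps} fps = {secs:.0f} s ({format_minutes(secs)})")
```

Under those assumptions, 3000 frames play for about 2:05 at 24 fps, 2:00 at 25 fps and 1:40 at 30 fps, which broadly matches the estimate above.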
I then went back to my scene in Maya and added a new camera. In the camera's settings I selected the option to create an image plane for this camera and loaded the first image of the clip. Then I ticked the box that says Use Image Sequence, which plays the matching image on each frame. I started to set keyframes at key moments of movement. I used a stop-start approach, adding a key at the start and the end of each movement; complex lip movements needed a sequence of keys to achieve this.

Clean up

For the clean-up process, I wanted to go through my recorded keys using the Graph Editor, change the smoothness of the keys and edit any movement that looks sharp or doesn't match the source image, as I could have added extra keys during the keyframing stage.

I started by going through the animation in the Graph Editor, changing the curves to be smoother so they flow better. I also applied linear tangents to the curves. I then went through the animation, deleting and adding keys in the parts of the graph where I felt the animation needed to be smoother or needed extra keys. To add a key, I selected the curve and right-clicked; to delete one, I selected the key and pressed Delete. Below is a video of the clean-up:

Scene and export

Before exporting my animation, I wanted to create a basic scene that gives an impression of what is seen in the video. I created a basic three-walled room and a door, which I then textured in Substance Painter. I then went into Fuse and created a prison guard like the one in the video. I added different types of lighting to the scene to get the right balance. I found this extremely hard and did multiple renders before finally finding a lighting setup I was happy with: a directional light to light up the scene and a point light to light up the character.
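To illustrate what changing the curves in the Graph Editor does during clean-up, here is a minimal sketch of evaluating a keyed value between keys. Linear tangents join keys with straight lines, so the value moves at constant speed, while a smooth spline eases in and out of each key. The "smooth" mode here uses Catmull-Rom style tangents on a cubic Hermite curve; this is an assumed stand-in for illustration, not Maya's exact tangent algorithm, and the key values are made up.

```python
from bisect import bisect_right
from typing import List, Tuple

Key = Tuple[float, float]  # (frame, value); frames must be strictly increasing


def _tangent(keys: List[Key], i: int) -> float:
    """Slope at key i: slope between its neighbours, one-sided at the ends."""
    if len(keys) < 2:
        return 0.0
    lo, hi = max(i - 1, 0), min(i + 1, len(keys) - 1)
    (t0, v0), (t1, v1) = keys[lo], keys[hi]
    return (v1 - v0) / (t1 - t0)


def evaluate(keys: List[Key], frame: float, mode: str = "linear") -> float:
    """Evaluate a keyed curve at `frame`, holding the first/last value outside the keys."""
    if not keys:
        raise ValueError("need at least one key")
    times = [t for t, _ in keys]
    if frame <= times[0]:
        return keys[0][1]
    if frame >= times[-1]:
        return keys[-1][1]
    i = bisect_right(times, frame) - 1  # index of the key at or before `frame`
    (t0, v0), (t1, v1) = keys[i], keys[i + 1]
    h = t1 - t0
    s = (frame - t0) / h  # normalised position between the two keys (0..1)
    if mode == "linear":
        return v0 + s * (v1 - v0)
    if mode == "smooth":
        # Tangents are scaled by the key spacing for the Hermite basis.
        m0, m1 = _tangent(keys, i) * h, _tangent(keys, i + 1) * h
        h00 = 2 * s**3 - 3 * s**2 + 1
        h10 = s**3 - 2 * s**2 + s
        h01 = -2 * s**3 + 3 * s**2
        h11 = s**3 - s**2
        return h00 * v0 + h10 * m0 + h01 * v1 + h11 * m1
    raise ValueError(f"unknown mode: {mode}")


if __name__ == "__main__":
    # Hypothetical jaw control: closed at frame 1, open at 11, closed at 21.
    jaw = [(1.0, 0.0), (11.0, 10.0), (21.0, 0.0)]
    print(evaluate(jaw, 6), evaluate(jaw, 6, "smooth"))
```

With these keys, the linear curve gives 5.0 at frame 6 while the smooth curve gives 6.25, because the smooth curve eases into the peak instead of travelling at a constant rate.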
After exporting the images, I wanted to turn them into a video, so I created a new project in Adobe Premiere. I went to Import, selected the first image and ticked the box that says Image Sequence. If I don't do this, it imports each image separately and gives each one a 5-second duration. I then synced the edited video to my lip sync and played it back. I adjusted it, but the clip drifts slightly out of sync towards the end, although this is only slightly noticeable. I then brought in the original video and edited it so that it displayed the start and ending, and placed it in the parts where Morgan Freeman isn't talking. Finally, I created a picture-in-picture to compare the original with my lip sync.

Task 2 - character rigs

In this task I want to create a skeleton for my character and non-playable character so that I can create my own animations on the skeleton and use these custom animations in my game. I started by creating a basic skeleton for my character. I then painted the weights to stop any deformations and used Unreal's animation toolset to set up a NURBS control system.

Rigging the NPC

For my non-playable character, which will be a mini boss in my game, I decided to rig the character myself without using any auto-rigging tools, mainly because it is not like a normal biped and is quite unique. I started by placing joints at the locations I needed and named them accordingly. I then parented those joints to the root bone and made sure the arms and legs followed the correct parent/child chain.

Issue

I then tested all my joints and had a massive problem with deformation: each joint was pulling the mesh from all areas. I looked at the painted weights and noticed that the joints were influencing the mesh all over. I fixed this by tidying up the painted weights, removing the white areas I did not need by painting them black.
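The deformation issue above comes from how painted weights are used. In linear blend skinning (the classic linear method Maya's skin clusters use by default), each vertex moves by a weighted blend of its joints' movements, so a vertex with stray weight on a distant joint gets pulled along with it. This minimal sketch simplifies joints to pure 2D translations and uses hypothetical joint names; painting an area black corresponds to setting that joint's weight to 0.

```python
from typing import Dict, Tuple

Vec2 = Tuple[float, float]


def normalise(weights: Dict[str, float]) -> Dict[str, float]:
    """Scale a vertex's weights so they sum to 1."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("a vertex needs at least one non-zero weight")
    return {joint: w / total for joint, w in weights.items()}


def skin_vertex(rest: Vec2, weights: Dict[str, float], offsets: Dict[str, Vec2]) -> Vec2:
    """Deformed position = rest position + weighted sum of each joint's movement.

    `offsets` holds how far each joint has moved (translation only in this sketch).
    """
    w = normalise(weights)
    dx = sum(w[j] * offsets[j][0] for j in w)
    dy = sum(w[j] * offsets[j][1] for j in w)
    return (rest[0] + dx, rest[1] + dy)


if __name__ == "__main__":
    moves = {"leg": (0.0, 2.0), "arm": (4.0, 0.0)}  # hypothetical joint movements
    # A leg vertex with stray arm weight (the "white area" problem)...
    print(skin_vertex((0.0, 0.0), {"leg": 0.5, "arm": 0.5}, moves))
    # ...and the same vertex after painting the arm influence black (weight 0).
    print(skin_vertex((0.0, 0.0), {"leg": 1.0, "arm": 0.0}, moves))
```

The first vertex is dragged sideways by the arm joint, while the cleaned-up vertex follows only the leg, which is the effect of tidying up the weights.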
After fixing the painted weights, I needed to create controls for each of the joints. I did this by placing a NURBS curve at each joint and naming each control after its joint. I then needed to connect these controls to the joints: I started by selecting the root control and parenting it to the root bone. For all the others, I selected the control and then the joint, and applied a parent constraint to each pair.

Animating the character

After trying motion capture and being unable to sync the two together, I decided to key the animations for my character by hand. I am going to create animations that are for my game only and unique to it. To do this, I will use props I have created for my game to give me a sense of world space while creating the animations. I am going to create the following animations:

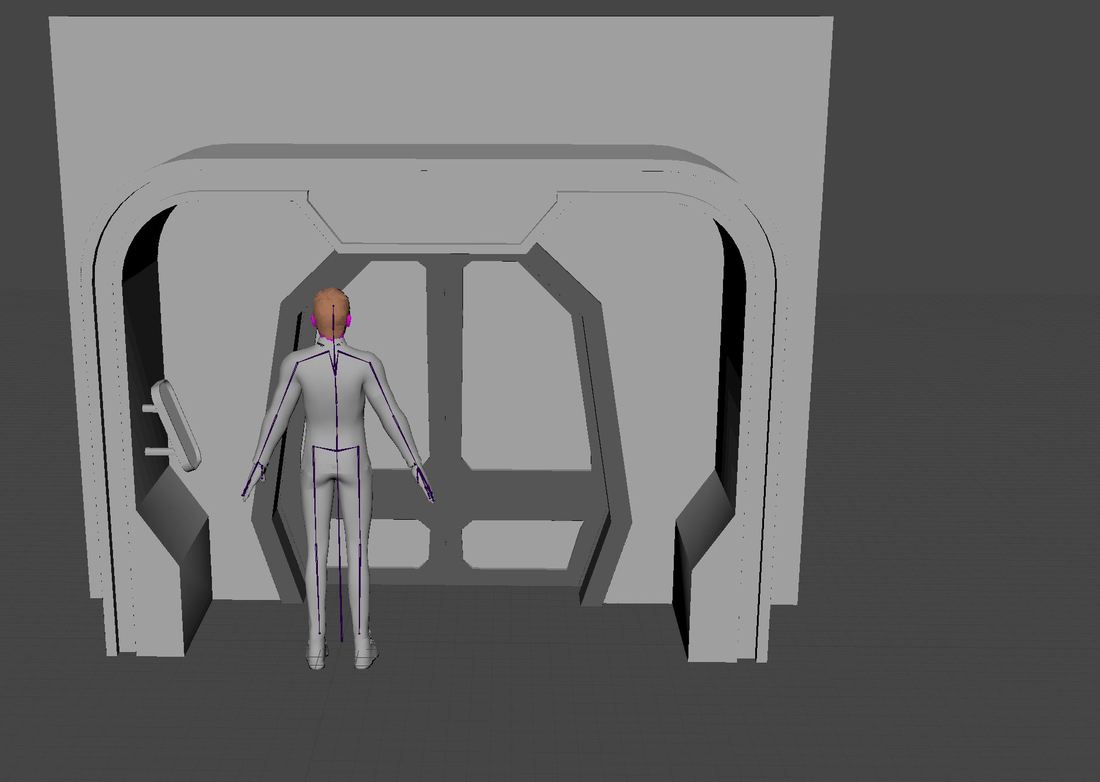

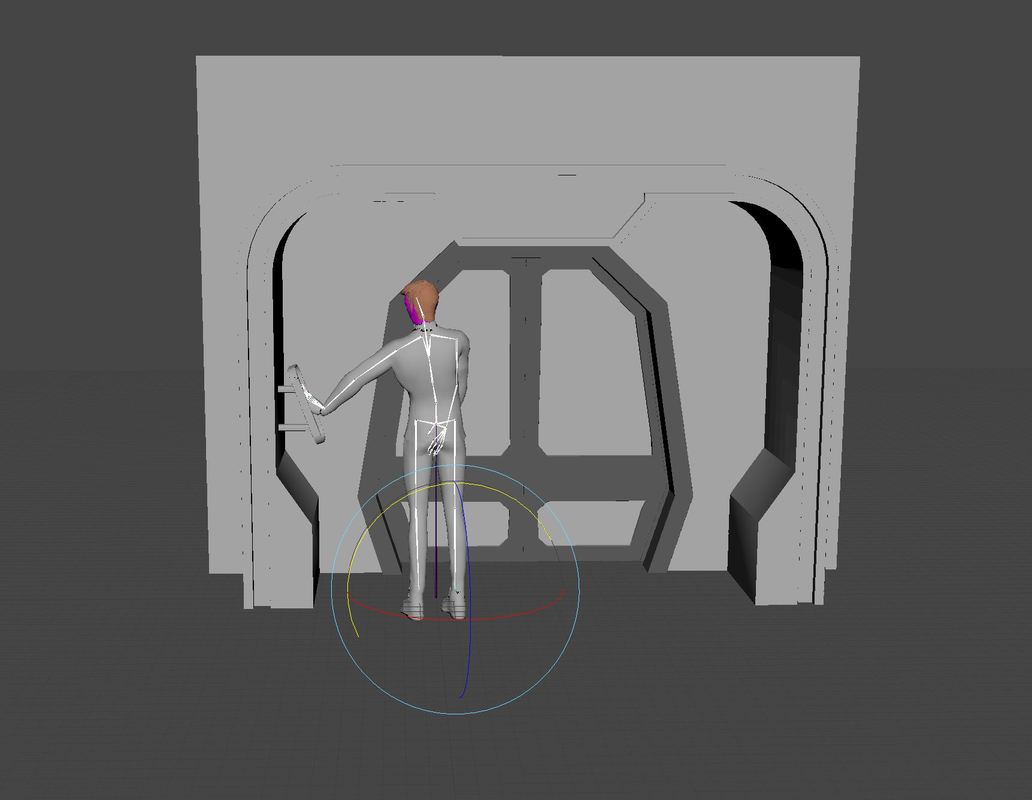
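The poses below rely on the parent/child chain set up while rigging: rotating a joint moves every joint beneath it, which is why bending the arm carries the hand with it. Here is a minimal 2D forward-kinematics sketch, assuming a hypothetical shoulder, elbow and wrist chain with made-up lengths; it is a simplified model, not how Maya or Unreal compute transforms internally.

```python
import math
from typing import List, Tuple

# Each joint: (offset from its parent, in the parent's space; local rotation in degrees).
Joint = Tuple[Tuple[float, float], float]


def world_positions(chain: List[Joint]) -> List[Tuple[float, float]]:
    """Forward kinematics for a single parent/child chain, root first.

    Each joint inherits the accumulated rotation of every joint above it,
    so rotating the root swings the whole chain around it.
    """
    positions = []
    x = y = 0.0
    angle = 0.0  # accumulated rotation of the parent, in radians
    for (ox, oy), local_deg in chain:
        # Rotate this joint's offset into the parent's orientation, then add it.
        x += ox * math.cos(angle) - oy * math.sin(angle)
        y += ox * math.sin(angle) + oy * math.cos(angle)
        positions.append((x, y))
        angle += math.radians(local_deg)  # children inherit this joint's rotation
    return positions


if __name__ == "__main__":
    # Hypothetical arm: shoulder at the origin, elbow 2 units out, wrist 2 further.
    straight = [((0.0, 0.0), 0.0), ((2.0, 0.0), 0.0), ((2.0, 0.0), 0.0)]
    raised = [((0.0, 0.0), 90.0), ((2.0, 0.0), 0.0), ((2.0, 0.0), 0.0)]
    print(world_positions(straight))
    print(world_positions(raised))  # rotating only the shoulder lifts elbow and wrist
```

Only the shoulder is keyed in the second chain, yet the elbow and wrist end up at roughly (0, 2) and (0, 4), the same inheritance that lets a single shoulder rotation raise the whole arm towards a button.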

I started by importing my rigged character into a new project and then imported the doors and button. I lined everything up as it would be in game and positioned the player at roughly the location it needs to be in to activate the button. I then keyed the character moving its arm to press the button, adding a bend and a twist and lifting the palm up to tap the button. I then twisted the spine and moved the other arm with the head to give the impression that the character is looking at the button while pressing it.

For the single door animation, I imported all the files that make up the door, positioned the button at the same height as in the game, and positioned the character where it would be when activating it in game. I then created the animation, bringing the character's arm up and turning the body, arm and head towards the button so that it looks like it is looking at it.

Salute

For my next animation, I decided to do a salute, as the game follows a military-style rank system and I thought it would be good to have a salute for the captain and other high-ranking officers. I broke the animation down into two parts, as I wanted it to resemble an army salute. I started with the formal stance: I brought the legs closer together, put the arms straight against the body and straightened the back. I then added the salute, bringing the right arm up to the forehead and then back down.

Alien salute

For my fifth animation, I wanted to do something unique that still fits the military salutes, but thinking along the lines of how an alien culture might salute. I decided to create a mash-up of three salutes: I wanted to incorporate Leonard Nimoy's famous Vulcan salute and a royal salute by kneeling on one knee. I then added a finishing touch, a kind of war beat on the chest that is more affectionate than intimidating, which I did by gently patting the palm of the hand back and forth on the chest.

Evaluation